Enterprise MCP Just Crossed the Engineering Line

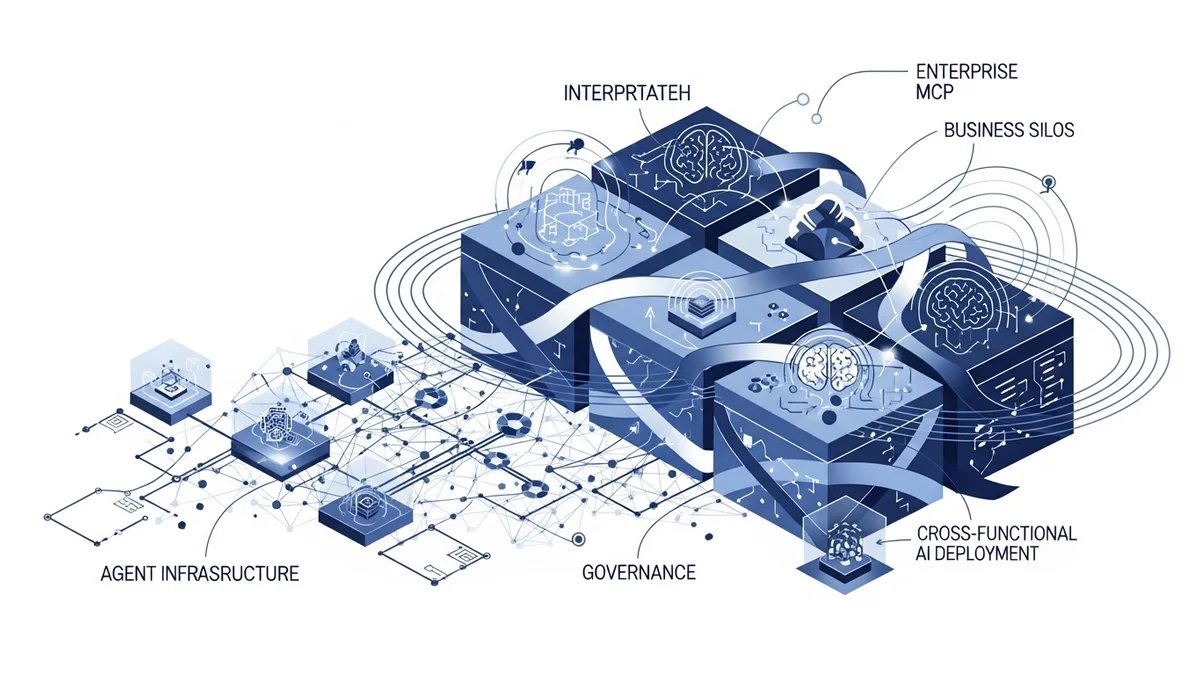

On April 14, 2026, Cloudflare published the first public reference architecture describing a Model Context Protocol (MCP) deployment that extends past the engineering silo. In the same post, it named the teams using it: product, sales, marketing, and finance. It also named the controls wrapped around those teams: Cloudflare Access, MCP server portals, AI Gateway, DLP, and Gateway-based shadow-MCP detection.

This is a moment worth marking. For the past six months we have argued that engineering had Cloudflare and marketing had nothing. That claim is now too strong. The tooling exists. It ships with names. What we still do not have is depth-of-adoption evidence, and the gap between “documented” and “operating” is where enterprise buyers need to push this year.

This essay does three things. It catalogs the five-plane governance taxonomy Cloudflare just put on the table. It does the token-economics math that makes cross-functional MCP affordable. And it names the open questions a serious buyer should bring to any agent-infrastructure vendor, Cloudflare included.

The Five-Plane Taxonomy

Strip Cloudflare’s post to its primitives and you get a control model every enterprise should steal:

| Plane | Primitive | What it controls |
|---|---|---|
| Identity | Cloudflare Access (OAuth, SSO, MFA, device + location attributes) | Who can reach which MCP server |
| Discovery and policy | MCP server portals | Which servers appear in an agent’s catalog per role |
| Model mediation | AI Gateway | Routing, per-employee token limits, logging, provider switching |
| Content | DLP + Gateway HTTP policies | PII redaction and pattern-based egress blocking |
| Shadow traffic | Gateway + DLP scanning | Detection of unsanctioned MCP traffic |

The virtue of this taxonomy is not its novelty. It is that a CIO can now hand it to an audit committee and say: this is the shape of what we bought. That is a different conversation than the one engineering teams were having a year ago about community-written connectors with no auth story.
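To make the first two planes concrete, here is a minimal sketch of how identity and discovery compose: a verified session is a precondition, and the role then filters the catalog. The server names, role labels, and the `catalog_for` helper are our own illustrations, not Cloudflare's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class McpServer:
    name: str
    allowed_roles: frozenset[str]


# Hypothetical portal inventory: server names and role labels are invented.
PORTAL = [
    McpServer("crm", frozenset({"sales", "marketing"})),
    McpServer("ledger", frozenset({"finance"})),
    McpServer("source-control", frozenset({"engineering"})),
]


def catalog_for(role: str, identity_verified: bool) -> list[str]:
    """Return the server names an agent acting for `role` is allowed to see."""
    if not identity_verified:
        # Identity plane: without a verified session the catalog is empty.
        return []
    # Discovery plane: only servers whose policy lists this role appear.
    return [server.name for server in PORTAL if role in server.allowed_roles]
```

In this sketch an agent acting for finance sees only the ledger server, and an unverified session sees nothing at all, which is the property an audit committee would want demonstrated.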

The limits of the taxonomy are also worth naming. Shadow-MCP scanning inspects only the first 1,024 bytes of each POST body, matches them against ten regex patterns written in Rust’s regex flavor, and works over a 30-day history. That is detection. It is not prevention by default. The tutorial shows you how to promote a detection into a block, but organizations that do not operationalize the tutorial walk away with logs, not a perimeter. Detection is a starting signal. Calling it prevention overstates the control.
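A rough sketch shows why a windowed scan is a signal rather than a perimeter. It uses Python's `re` module instead of Rust's regex flavor, and the two patterns are our own stand-ins built from MCP's JSON-RPC method names, not Cloudflare's ten patterns. The `block_on_match` flag is a hypothetical switch standing in for the step of promoting a detection into a blocking policy.

```python
import re

SCAN_WINDOW = 1024  # bytes of the POST body inspected, per the figure above

# Illustrative patterns only (assumption): MCP JSON-RPC method names.
PATTERNS = [
    re.compile(rb'"method"\s*:\s*"tools/(?:call|list)"'),
    re.compile(rb'"method"\s*:\s*"initialize"'),
]


def looks_like_mcp(body: bytes) -> bool:
    """Match the patterns against the leading window of the body only."""
    head = body[:SCAN_WINDOW]
    return any(pattern.search(head) for pattern in PATTERNS)


def decide(body: bytes, block_on_match: bool) -> str:
    """Return 'allow', 'log', or 'block' for one POST body."""
    if not looks_like_mcp(body):
        return "allow"
    # Without an explicit blocking policy, a match only produces a log entry.
    return "block" if block_on_match else "log"
```

Two things fall out of the sketch. A match with `block_on_match=False` yields a log entry and nothing else. And a payload whose MCP markers sit past the scan window is never matched, so it is allowed either way.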

A second caveat. Cloudflare’s post describes default-deny write controls at the MCP server template layer, with audit logs capturing capability, duration, and status. That default-deny write model does not appear in the canonical cloudflare-one/access-controls/ai-controls/mcp-portals/ documentation. It lives in the blog post and the template. Treat it as a strong reference implementation, not a platform guarantee.
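For readers who want to see what default-deny writes with that audit shape might look like, here is a minimal sketch. The class, its method names, and the capability strings are hypothetical and are not taken from Cloudflare's template. The only details borrowed from the post are the default-deny posture and the three logged fields: capability, duration, and status.

```python
import time
from dataclasses import dataclass, field


@dataclass
class AuditedToolbox:
    # Write capabilities must be listed here explicitly; the default is empty.
    write_allowlist: set[str] = field(default_factory=set)
    audit_log: list[dict] = field(default_factory=list)

    def _record(self, capability: str, start: float, status: str) -> None:
        self.audit_log.append({
            "capability": capability,
            "duration_s": time.monotonic() - start,
            "status": status,
        })

    def call(self, capability: str, is_write: bool, fn, *args):
        """Run `fn` under the policy, logging capability, duration, status."""
        start = time.monotonic()
        if is_write and capability not in self.write_allowlist:
            self._record(capability, start, "denied")
            raise PermissionError(f"write capability {capability!r} is not allowed")
        try:
            result = fn(*args)
        except Exception:
            self._record(capability, start, "error")
            raise
        self._record(capability, start, "ok")
        return result
```

Note what this log does not contain: tool arguments and outputs. That gap is exactly the audit-granularity question a buyer should raise.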

The Economics That Force the Governance

Cross-functional MCP does not become affordable until the token economics of tool-loading collapse. Cloudflare’s Code Mode primitive, shipped in February and now running at the portal layer, is the load-bearing piece.

Do the math.

The internal portal case: 52 tools spread across connected MCP servers load roughly 9,400 tokens of tool definitions into every agent context. Two portal tools, search and execute, load roughly 600 tokens. That is (9,400 − 600) / 9,400 = 93.6%, which Cloudflare rounds to 94%. More importantly, that cost stays fixed as you connect additional servers.

The Cloudflare API case is sharper. The company’s full API surface is 2,500+ endpoints. Loading every tool definition into an agent costs roughly 1.17 million tokens. Code Mode brings that to roughly 1,000 tokens. (1,170,000 − 1,000) / 1,170,000 = 99.91%, stated as 99.9%.
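The arithmetic is easy to reproduce. The snippet below recomputes both reductions from the token counts quoted above. The per-tool average is our own derived figure, not one Cloudflare reports.

```python
def reduction_pct(before_tokens: int, after_tokens: int) -> float:
    """Percentage of context tokens saved by moving from `before` to `after`."""
    return 100 * (before_tokens - after_tokens) / before_tokens


portal = reduction_pct(9_400, 600)        # internal portal case
api = reduction_pct(1_170_000, 1_000)     # full Cloudflare API case

# Derived figure (our arithmetic): average definition size per portal tool.
tokens_per_tool = 9_400 / 52

print(f"portal reduction: {portal:.1f}%")
print(f"API reduction: {api:.2f}%")
print(f"average tokens per tool: {tokens_per_tool:.0f}")
```

The portal case comes out at 93.6% and the API case at 99.91%, matching the rounded figures in Cloudflare's post, with an average of roughly 181 tokens per tool definition in the portal case.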

Why this matters for governance, not just cost. The old trade-off was: every new MCP server you govern makes the agent dumber and more expensive. So teams skip governance, or they skip the server. Code Mode decouples integration count from context cost. You can afford to put finance, marketing, and sales portals behind Access because the agent does not pay a tool-loading tax per portal. The economics that force a governance program now exist. That is a change in kind, not in degree.

One honest note. Code Mode does not buy reasoning quality. It moves the agent’s skill from “pick the right tool from a catalog” to “write the right JavaScript against two primitives.” That is a different failure mode, not a smaller one.

The Operational Trilogy

Enterprise MCP is the identity and policy layer. On its own, it does not tell you how agents actually run, or what happens when they fail. The adjacent pieces arrived on April 15: a runtime from Cloudflare and an escalation marketplace from a startup.

Project Think is the durable-execution runtime. Its primitives are Fibers (checkpoint-based survival across restarts), Facets (child Durable Objects with isolated SQLite and typed RPC for sub-agents), persistent sessions backed by SQLite FTS5, and a capability-based sandboxed code execution tier that replaces ambient authority with an explicit permission ladder. The operational claim is zero idle cost: 10,000 agents each active 1% of the time settle at roughly 100 concurrently active via Durable Object hibernation.
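The concurrency figure is a simple expected value, and it is worth seeing how much it can wobble. The sketch below assumes, purely for illustration, that agents are active independently of one another, so the number active at any instant follows a binomial distribution. Cloudflare's post makes no such statistical claim, and correlated bursts would look different.

```python
import math


def concurrency(agents: int, duty_cycle: float) -> tuple[float, float]:
    """Mean and standard deviation of concurrently active agents.

    Assumes independent activity (binomial model); real workloads that
    burst together would show a wider spread.
    """
    mean = agents * duty_cycle
    std = math.sqrt(agents * duty_cycle * (1 - duty_cycle))
    return mean, std


mean, std = concurrency(10_000, 0.01)
print(f"expected active: {mean:.0f}, standard deviation: {std:.1f}")
```

Under that independence assumption the mean is 100 with a standard deviation near 10, so "roughly 100" is a fair summary of the steady state. It says nothing about a Monday-morning spike.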

Read those numbers with the same skepticism as the 94% and 99.9%. They are first-party architectural claims, not audited customer benchmarks. The “roughly 100x faster and 100x more memory-efficient than a container” line is Cloudflare’s own benchmark with no methodology shared. Directional credibility is high. Quantitative credibility needs independent reproduction.

Humwork closes the other side of the loop. It is a Y Combinator P26 company that launched an agent-to-person marketplace on the same day. It claims 1,000+ verified experts across engineering, design, legal, marketing, strategy, and finance, an 87% resolution rate in beta, 2,858 resolved questions, sub-two-minute first response, and sub-thirty-second MCP handoff with automatic PII redaction.

Every one of those numbers is self-reported beta data, not an audited SLO. A resolution rate is not a resolution SLA. “Verified expert” is a vendor-described process, not a named certification. PII redaction is automatic, but the algorithm and false-negative rate are undisclosed. Humwork proposes a production model for the agent failure loop. It does not yet prove one.

Put the three together and the shape is clearer than any one piece: Access governs who connects, Think runs the work durably and safely, Humwork escalates when the agent is wrong. As we argued in the Cloudflare governance trilogy and the essay on running agents at production scale, the interesting story is never a single primitive. It is the operational loop.

What Buyers Should Demand

We have written elsewhere that infrastructure vendors are shipping governance as table stakes, and that governance-as-product is a real discipline, not a slide in a security deck. The operations discipline gap is still the dominant failure mode. Enterprise MCP does not close it. It raises the floor.

Here is the buyer’s checklist we now use in governance reviews. Bring it to your agent-infrastructure vendor this year:

- Adoption depth, not adoption names. How many employees per non-engineering team? Which specific workflows are running in production versus experimentation? What is the incident count per quarter? A list of team names is not a case study.

- Default-deny writes at the platform layer. Is it documented in the product, or only in a blog post? If it lives in a template, ask for the template to be first-class.

- Audit granularity. Do logs capture tool arguments and outputs, not just the capability name and duration? Can you reconstruct a multi-tool chain of custody? Access auditing is not forensic auditing.

- Budget governance beyond per-employee limits. Per-team quotas, per-workflow budgets, per-data-classification budgets, runaway detection. Finance will ask for all of these.

- DLP intent, not DLP patterns. Regex on the first 1,024 bytes of a POST body is a scanner, not a governance engine. Ask about purpose limitation, data-minimization rules, and model-aware detection of inferred PII.

- Shadow-MCP blocking posture. Is detection wired to a policy that blocks, or does it stop at a dashboard? If the answer is dashboard, you have logs, not controls.

- A2P escalation SLOs. Resolution-rate marketing numbers are not SLAs. If you are buying the human-handoff loop, demand contractual response and resolution commitments, and a data-processing agreement.
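The budget-governance item in the checklist above is the easiest one to prototype before a vendor conversation. Here is a minimal sketch of layered token budgets with a crude runaway check. The class, scope names, thresholds, and return strings are all hypothetical; nothing here reflects AI Gateway's actual configuration surface.

```python
from collections import defaultdict


class BudgetLedger:
    """Tracks token spend per employee, team, and workflow."""

    def __init__(self, limits: dict[tuple[str, str], int], runaway_tokens: int):
        self.limits = limits                  # (scope, key) -> token limit
        self.runaway_tokens = runaway_tokens  # single-request ceiling
        self.spent = defaultdict(int)

    def charge(self, employee: str, team: str, workflow: str, tokens: int) -> str:
        # Runaway detection: one request far larger than anything expected.
        if tokens > self.runaway_tokens:
            return "flag_runaway"
        scopes = [("employee", employee), ("team", team), ("workflow", workflow)]
        # Check every scope before recording, so a denial charges nothing.
        for scope in scopes:
            limit = self.limits.get(scope)
            if limit is not None and self.spent[scope] + tokens > limit:
                return f"deny:{scope[0]}"
        for scope in scopes:
            self.spent[scope] += tokens
        return "allow"
```

A per-employee limit alone would pass a team of fifty each spending just under their cap. The layered check is what lets finance ask about the team and the workflow, not only the individual.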

Every item on that list but the last corresponds to a primitive Cloudflare named on April 14; the last covers the human-escalation loop that Humwork proposes. None of them is fully closed. That is the honest shape of enterprise MCP in April 2026: the tooling is real, the economics work, the organizational ownership is undefined, and the depth of adoption is a press-release sentence. Buyers who ask the right questions this year will get the stack they need. Buyers who do not will pay the operations tax in quarter three.

This analysis synthesizes Cloudflare — Scaling MCP adoption (April 2026), Code Mode: give agents an entire API in 1,000 tokens (February 2026), Project Think (April 2026), Browser Run for AI Agents (April 2026), Humwork A2P marketplace (April 2026), MCP is Now Enterprise Infrastructure (April 2026), MCP governance landscape early 2026 (April 2026), and Cloudflare One MCP portal documentation.

Victorino Group helps enterprises evaluate and deploy agent infrastructure with governance controls baked in. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
