Running AI Agents at Scale: What Stripe, Cloudflare, and Istio Teams Actually Do

Stripe merges 1,300 agent-generated pull requests per week. Cloudflare cut agent token waste by 98% with a single HTTP standard. Istio users are repurposing their service mesh as agent validation infrastructure. And a growing number of teams are running what one developer calls a “Night Shift” — agents working autonomously while humans sleep.

None of these are demos. They are operational patterns emerging independently at companies that have moved past the “should we use AI agents” question and into the harder one: how do you actually run them at scale without drowning in the operational consequences?

The answer, across all four cases, is infrastructure that most organizations have not built yet.

The Validation Bottleneck Is Now the Binding Constraint

CircleCI’s 2026 State of Software Delivery report measured 28 million workflows. The finding that should concern anyone scaling agents: median main branch throughput declined 6.8% year over year. Success rates hit 70.8%, a five-year low. Feature branch throughput grew 15.2%, meaning teams are writing more code than ever — they just cannot land it.

As we examined in From In-the-Loop to On-the-Loop, the top 5% of teams nearly doubled their throughput while the other 95% stalled or regressed. The gap is widening.

The New Stack captured the dynamic precisely: “The pipeline is choking on its own success.” Agents generate code faster than infrastructure can validate it. Teams throttle their agents — not because the agents are bad, but because the staging environment cannot absorb the volume. Developers fall back to unit tests and mocks. Code passes localized tests and breaks in the broader system.

The binding constraint on agent productivity is no longer code generation. It is validation infrastructure.

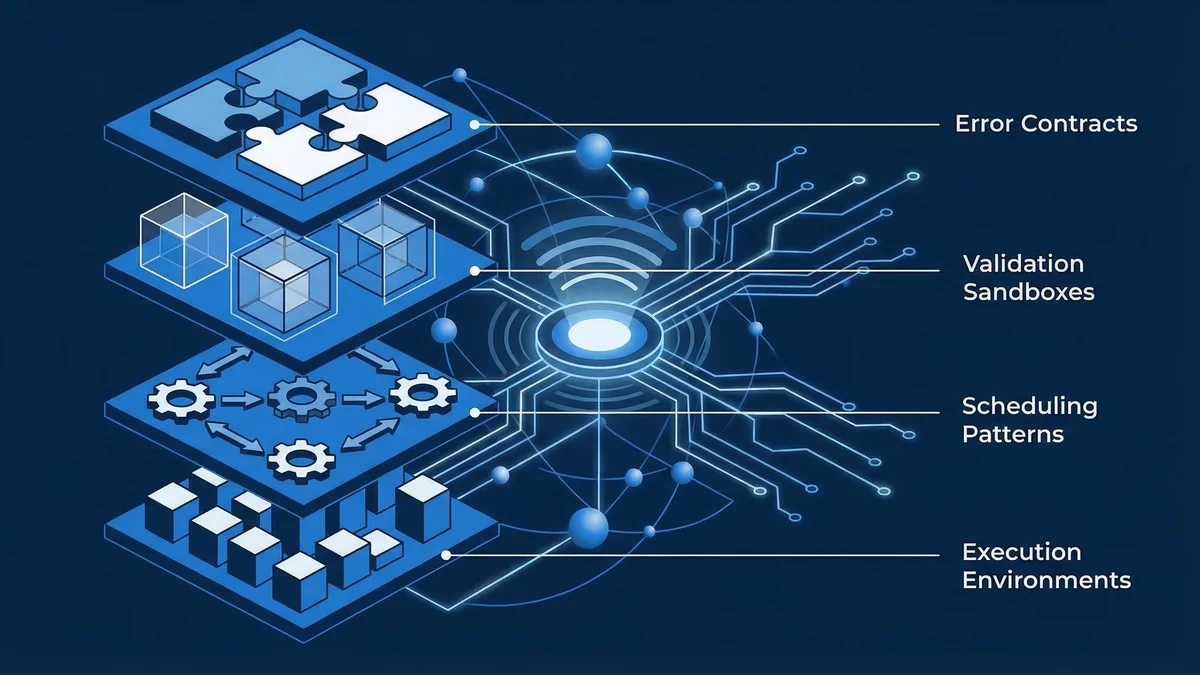

Four Operational Primitives

The companies ahead are building four categories of infrastructure that most teams have not considered. These are not theoretical frameworks. They are specific engineering patterns with measured results.

1. Agent Execution Environments

Stripe’s DevBox architecture is the most documented example. Each agent task runs in an isolated EC2 instance that mirrors a developer’s full environment: pre-warmed pools, complete codebase, cached dependencies, full QA setup. Boot time under ten seconds. The instance is destroyed after the task completes.

The design philosophy, as we covered in Stripe’s Agentic Layer, is worth repeating: “What’s good for humans is good for agents.” If a developer needs a full environment with the right dependencies, database access, and test infrastructure, so does an agent. The agent should not operate in a degraded environment just because it is not human.

Stripe engineers run half a dozen DevBoxes simultaneously. Each hosts an independent agent on a separate task. The human reviews results, triages failures, and defines the next batch. This is the engineer-as-director operating model that is replacing engineer-as-writer.

What ByteByteGo’s analysis adds is the feedback architecture. Stripe limits agents to one CI retry. A task that fails twice returns to a human. “Knowing when to stop is as important as knowing how to start.” This cap is a governance decision masquerading as an efficiency decision. Uncapped retries let agents burn compute on problems they cannot solve. Capped retries force escalation.
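The retry cap described above can be sketched as a small control loop. This is a minimal illustration, not Stripe's implementation: `run_ci`, `fix_failure`, `TaskOutcome`, and the status strings are hypothetical names introduced here, and the only detail taken from the text is the cap of one retry before a human takes over.

```python
from dataclasses import dataclass
from typing import Callable

# One retry after the first failure, matching the cap described in the text.
MAX_CI_RETRIES = 1


@dataclass
class TaskOutcome:
    status: str    # "ready_for_review" or "escalated_to_human" (hypothetical labels)
    attempts: int  # number of CI runs that were made


def run_with_retry_cap(
    run_ci: Callable[[int], bool],
    fix_failure: Callable[[int], None],
    max_retries: int = MAX_CI_RETRIES,
) -> TaskOutcome:
    """Run CI; allow at most `max_retries` agent fix attempts, then escalate."""
    attempts = 0
    while True:
        attempts += 1
        if run_ci(attempts):
            return TaskOutcome("ready_for_review", attempts)
        if attempts > max_retries:
            # The retry budget is spent: stop burning compute and hand off.
            return TaskOutcome("escalated_to_human", attempts)
        # The agent gets one chance to repair the failure before the next run.
        fix_failure(attempts)


# A task whose CI never passes is escalated after exactly two runs.
print(run_with_retry_cap(lambda attempt: False, lambda attempt: None))
```

The cap lives in the loop, not in the agent's prompt, so the agent cannot argue its way past it.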

2. Machine-Readable Error Contracts

When an AI agent hits a Cloudflare error page, it used to receive 46,645 bytes of HTML — navigation bars, CSS, human-readable copy, footer links. That is 14,252 tokens consumed before the agent can even parse what went wrong.

Cloudflare’s implementation builds on RFC 9457 (“Problem Details for HTTP APIs”) and replaces that HTML with structured responses. When an agent sends an Accept header of text/markdown or application/problem+json, it gets back a machine-readable error:

- Markdown: 798 bytes, 221 tokens. YAML frontmatter with error code, retryability, retry delay, and actionable guidance.

- JSON: 970 bytes, 256 tokens. Standard RFC 9457 fields plus operational extensions.

The reduction is roughly 98% in both payload and tokens. For an agent encountering multiple errors per workflow, the savings compound directly into lower inference costs.
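The percentage can be checked directly from the byte and token counts quoted above. The snippet below only redoes that arithmetic; the variable and function names are illustrative.

```python
# Sizes quoted in the text: the original HTML error page vs. the structured formats.
HTML_BYTES, HTML_TOKENS = 46_645, 14_252

formats = {
    "markdown": (798, 221),  # (bytes, tokens)
    "json": (970, 256),
}


def reduction_pct(before: int, after: int) -> float:
    """Percentage reduction from `before` to `after`, rounded to one decimal."""
    return round(100 * (1 - after / before), 1)


reductions = {
    name: (reduction_pct(HTML_BYTES, size), reduction_pct(HTML_TOKENS, tokens))
    for name, (size, tokens) in formats.items()
}

for name, (byte_cut, token_cut) in reductions.items():
    print(f"{name}: {byte_cut}% fewer bytes, {token_cut}% fewer tokens")
# markdown: 98.3% fewer bytes, 98.4% fewer tokens
# json: 97.9% fewer bytes, 98.2% fewer tokens
```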

But the efficiency gain is not the interesting part. The interesting part is two fields: retryable (boolean) and owner_action_required (boolean). These transform error handling from inference to lookup. The agent does not need to interpret an HTML page to decide whether to retry. It reads a boolean. Rate-limited? Wait the specified duration and retry. Access denied? Stop and escalate. DNS failure? Report and move on.
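To make the "inference to lookup" point concrete, here is a minimal sketch of an agent-side handler. The `retryable` and `owner_action_required` fields come from the text; the `retry_after` field name, the `Decision` type, the action labels, and the precedence between the two booleans are assumptions made for illustration and may not match Cloudflare's actual payload.

```python
import json
from dataclasses import dataclass


@dataclass
class Decision:
    action: str                # "retry", "escalate", or "report" (hypothetical labels)
    wait_seconds: float = 0.0  # only meaningful when action == "retry"


def decide(problem_json: str) -> Decision:
    """Map a problem-details body to an action using field lookups only."""
    problem = json.loads(problem_json)

    # Assumption: a required owner action takes precedence over retrying.
    if problem.get("owner_action_required", False):
        return Decision("escalate")

    if problem.get("retryable", False):
        # `retry_after` is an assumed name for the retry-delay field.
        return Decision("retry", float(problem.get("retry_after", 0)))

    # Neither retryable nor fixable by the owner: report and move on.
    return Decision("report")


rate_limited = '{"status": 429, "retryable": true, "retry_after": 30}'
print(decide(rate_limited))
```

No model call is needed to choose the branch, which is the property the two booleans are there to provide.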

This is deterministic governance embedded in the error contract. As we discussed in The Operations Tax, the token cost of protocol overhead is the cost of structured contracts between agents and infrastructure. Cloudflare’s contribution is showing that the same principle applies to error handling — and that the existing HTTP standards already support it.

Ten error categories. Ten prescribed agent behaviors. Network-wide, no per-site configuration. The infrastructure teaches agents how to fail gracefully at scale.

3. Ephemeral Validation Environments

The Istio pattern addresses the validation bottleneck directly. When you are processing hundreds or thousands of agent-generated PRs per day, duplicating your entire Kubernetes cluster for each one is not viable. Full cluster duplication takes 15 minutes or more per environment. At 1,000 PRs per day, that is at least 250 hours of cluster provisioning every day before a single test runs, and the infrastructure cost scales with it.

The alternative: ephemeral environments that deploy only the changed microservices as a lightweight sandbox. Heavy databases and stable downstream services are shared from a baseline environment. The service mesh dynamically routes test traffic between the sandbox and the baseline using header-based routing.

If you already run Istio, you already have the traffic routing capability. The service mesh intercepts requests with specific headers and routes them to sandbox versions of changed services. OpenTelemetry baggage propagation ensures routing context travels through deep microservice call chains.
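As a rough sketch of header-based routing, the function below builds an Istio VirtualService manifest as a Python dictionary: requests carrying a sandbox header go to the sandboxed copy of a service, and everything else goes to the shared baseline. The service names, the `x-sandbox-id` header, and the function itself are hypothetical. A real setup that routes on OpenTelemetry baggage would likely match on the `baggage` header with a pattern instead of an exact value.

```python
import json


def sandbox_virtual_service(
    service: str, sandbox_host: str, routing_header: str, sandbox_id: str
) -> dict:
    """Build a VirtualService that diverts tagged requests to a sandbox host."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": f"{service}-sandbox-routing"},
        "spec": {
            "hosts": [service],
            "http": [
                {
                    # Requests tagged with this sandbox's ID reach the changed service.
                    "match": [{"headers": {routing_header: {"exact": sandbox_id}}}],
                    "route": [{"destination": {"host": sandbox_host}}],
                },
                {
                    # Untagged traffic keeps flowing to the shared baseline.
                    "route": [{"destination": {"host": service}}],
                },
            ],
        },
    }


manifest = sandbox_virtual_service(
    service="checkout",              # hypothetical baseline service
    sandbox_host="checkout-pr-123",  # hypothetical sandbox deployment
    routing_header="x-sandbox-id",   # hypothetical header name
    sandbox_id="pr-123",
)
print(json.dumps(manifest, indent=2))
```

Only the changed service needs a new deployment. The baseline and its databases are untouched, which is what keeps each sandbox cheap.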

The result: high-fidelity runtime validation — testing against real, live dependencies — with the concurrency and speed that agent workflows demand. Agents can validate their code, get instant feedback, and iterate without contention with other agents working in parallel.

This pattern matters because it solves the problem that causes teams to throttle their agents. When validation is fast and cheap, you stop rationing it. The agent throughput ceiling lifts.

4. Autonomous Scheduling Patterns

Jamon Holmgren has been running what he calls a “Night Shift” since December 2025: a 17-step autonomous agent workflow that executes overnight while the developer sleeps.

The workflow is explicit about what humans do and what agents do. Humans write specifications during the day. Agents execute a structured loop overnight, with stages that include task selection, spec analysis, test planning, test writing, six-persona review, implementation, quality validation, regression testing, final review, changelog, and commit. The human reviews results the next morning.
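The overnight loop can be pictured as an ordered list of stages that halts at the first failure and leaves a report for the morning. This is a hypothetical sketch, not Holmgren's tooling: the stage names are the ones listed above (not the full 17 steps), and `run_stage`, `NightReport`, and the stop-on-first-failure rule are assumptions.

```python
from typing import Callable, List, NamedTuple, Optional

# Stage names as listed in the text; the real workflow has more steps.
STAGES = [
    "task selection",
    "spec analysis",
    "test planning",
    "test writing",
    "six-persona review",
    "implementation",
    "quality validation",
    "regression testing",
    "final review",
    "changelog",
    "commit",
]


class NightReport(NamedTuple):
    completed: List[str]       # stages that passed, in order
    stopped_at: Optional[str]  # first failing stage, or None if all passed


def run_night_shift(run_stage: Callable[[str], bool]) -> NightReport:
    """Run stages in order; stop at the first failure instead of pushing on."""
    completed: List[str] = []
    for stage in STAGES:
        if not run_stage(stage):
            return NightReport(completed, stage)
        completed.append(stage)
    return NightReport(completed, None)


# Example: a run where regression testing fails, so nothing is committed.
report = run_night_shift(lambda stage: stage != "regression testing")
print(report.stopped_at)
```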

The 5x productivity improvement Holmgren reports is anecdotal — one developer, one project. But the pattern is not unique to him. The structural insight is that agent work and human work have different cost profiles and should not be interleaved on the same clock.

Two operational details are worth noting. First, maximum strictness in type checking, linting, and compilation. Holmgren reversed his previous position against strict tooling: “For agents, I want the most strictness possible.” Strict tooling gives agents clear, deterministic feedback signals. Lax tooling gives agents ambiguous signals that require judgment the agent does not have.

Second, root cause analysis on every agent misbehavior. When an agent makes a mistake, the response is not to fix the mistake. It is to identify why the agent had insufficient context to avoid the mistake, and then fix the documentation or workflow that caused the gap. Each incident becomes an investment in future quality. “I can’t paper over docs or workflow imperfections by steering it by hand. I must improve every day, so the next morning isn’t spent cleaning up a mess.”

This is the agent operations paradox in practice: more agents create more operational work, unless you invest in the infrastructure that makes agent output trustworthy without manual review.

The Pattern Across All Four

Strip away the specifics and the same pattern appears in every case.

The companies running agents at scale are not building better agents. They are building infrastructure that makes agent output verifiable without human review of every artifact. Stripe builds execution environments with deterministic checkpoints. Cloudflare builds error contracts with machine-readable retry logic. Istio users build validation sandboxes with real-dependency testing. Night Shift practitioners build specification-review-test loops with six-persona quality gates.

The common thread is determinism. Every pattern described here adds deterministic checkpoints to the agent workflow. Boolean retryability flags. CI pass/fail gates. Type checker verdicts. Test suite results. These are not AI decisions. They are infrastructure decisions that constrain what the AI can do, making the remaining output trustworthy.

As we have covered in The Verification Tax, verification does not scale by adding humans. It scales by encoding human judgment into automated systems. The four primitives described here are specific implementations of that principle.

What This Means for Your Organization

Audit your validation infrastructure before scaling your agents. If your CI pipeline takes 30 minutes and your staging environment is shared across teams, you will hit the validation bottleneck before you hit any model capability limitation. The Istio pattern — ephemeral environments with shared baselines — is the clearest path for organizations running microservices.

Implement machine-readable error contracts. RFC 9457 is not new. It was published by the IETF in July 2023 and supersedes RFC 7807, which dates to 2016. If your internal APIs return HTML error pages to agents, you are burning tokens on parsing and losing deterministic error handling. The Cloudflare implementation is a reference pattern.
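On the server side, the change can be as small as checking the Accept header before rendering an error. The sketch below is framework-agnostic and illustrative only. `type`, `title`, `status`, and `detail` are standard RFC 9457 members, while `retryable` and `owner_action_required` are the extension fields discussed earlier. The function name and the naive substring check on the Accept header (no q-value handling) are simplifying assumptions.

```python
import html
import json
from typing import Dict, Tuple

PROBLEM_JSON = "application/problem+json"


def error_response(
    accept_header: str,
    status: int,
    title: str,
    detail: str,
    retryable: bool,
    owner_action_required: bool,
) -> Tuple[int, Dict[str, str], str]:
    """Return (status, headers, body), preferring problem+json when requested."""
    # Naive content negotiation: a substring check, ignoring q-values.
    if PROBLEM_JSON in accept_header:
        body = json.dumps(
            {
                "type": "about:blank",  # RFC 9457 default when no specific type URI exists
                "title": title,
                "status": status,
                "detail": detail,
                # Extension members carrying the deterministic retry contract.
                "retryable": retryable,
                "owner_action_required": owner_action_required,
            }
        )
        return status, {"Content-Type": PROBLEM_JSON}, body

    # Human-facing fallback: a small HTML page with escaped content.
    page = (
        f"<html><body><h1>{status} {html.escape(title)}</h1>"
        f"<p>{html.escape(detail)}</p></body></html>"
    )
    return status, {"Content-Type": "text/html; charset=utf-8"}, page


print(error_response(PROBLEM_JSON, 429, "Too Many Requests", "Rate limit exceeded.", True, False)[2])
```

Browsers keep getting HTML, so human-facing behavior stays the same while agents get the structured contract.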

Cap your retry loops. Stripe caps at one retry. Holmgren’s Night Shift has explicit stop conditions. Uncapped retries are a common governance failure in agent deployments: they waste compute and mask problems that require human judgment.

Separate human-clock work from agent-clock work. Specifications, architecture decisions, and requirement gathering are human-clock activities. Test execution, code generation, linting, and regression testing are agent-clock activities. The Night Shift pattern is the most explicit version of this, but the principle applies at any scale.

The infrastructure gap between teams that can run agents at production scale and teams that cannot is widening with every passing quarter. The gap is not about models. It is about the four categories of operational infrastructure described here. The teams building that infrastructure now are the ones whose agents will compound productivity. The teams waiting are the ones whose agents will compound technical debt.

This analysis synthesizes ByteByteGo on Stripe Minions (March 2026), Jamon Holmgren’s Night Shift workflow (March 2026), The New Stack on Istio and agent validation (February 2026), Cloudflare on RFC 9457 agent error pages (March 2026), and CircleCI 2026 State of Software Delivery (January 2026).


All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
