- Home

- The Thinking Wire

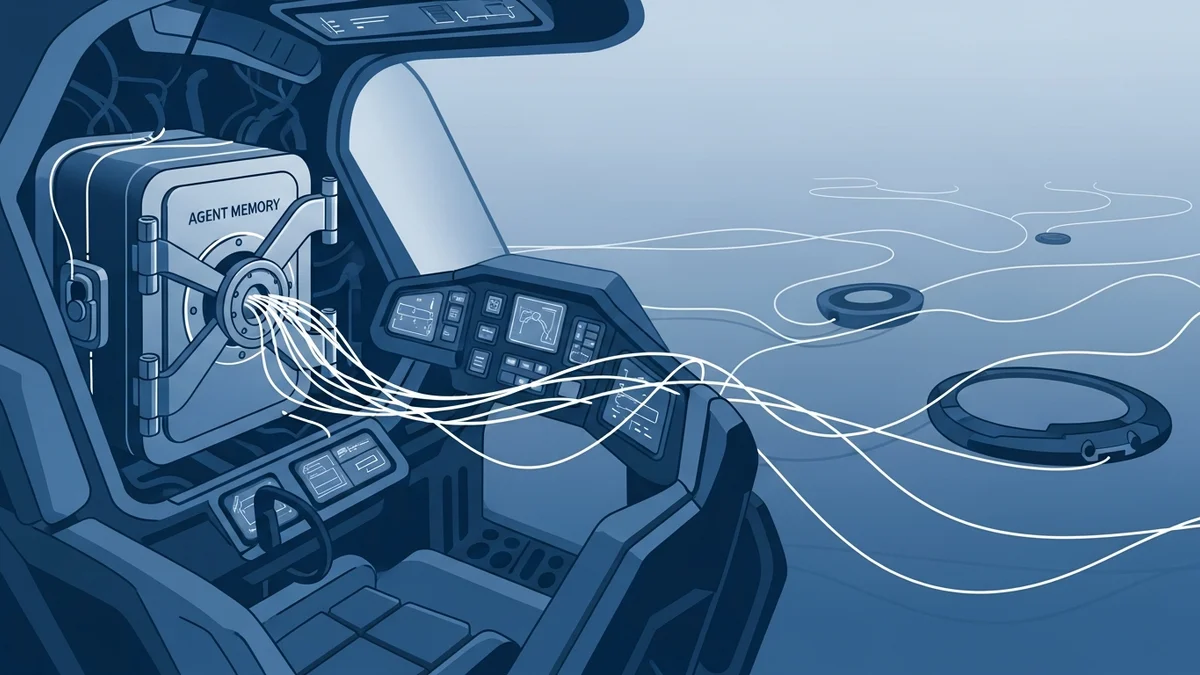

- Your Harness, Your Memory — And the Moat You Can't Take With You

Your Harness, Your Memory — And the Moat You Can't Take With You

Harrison Chase published a piece this morning called “Your harness, your memory”. The argument is sharp and, we think, correct in its core claim: memory is not a plugin you bolt onto an agent. It is a responsibility of the harness itself. Sarah Wooders put it plainly at Letta: managing context, and therefore memory, is a core capability of the agent harness. Once you accept that, a lot of “memory products” start to look like furniture shoved into someone else’s living room.

Harrison uses this to draw a lock-in spectrum. Stateful APIs like OpenAI’s Responses API are the mild case: your session state lives on a server you don’t own, and you can’t swap models mid-conversation. Closed harnesses are worse, because the whole context-management logic becomes opaque. At the far end sits Anthropic’s Claude Managed Agents, released three days ago, which hides both the harness and the memory behind a proprietary API. His recommendation: use Deep Agents, LangChain’s open-source harness, and keep your memory in Postgres or Mongo or Redis where you can see it.

We agree with the direction. But there is a second axis in this story that Harrison does not draw, and it is the one that matters most for anyone signing a contract this quarter.

The exit question

Yesterday we argued that Claude Managed Agents turned the harness into a SKU, and that the real decision wasn’t technical — it was whether you were willing to rent your agent’s operating system from the company that also sells the model. Harrison’s post exposes the part of that argument we only hinted at.

If memory is the moat, and memory lives in the harness, then a vendor-owned harness is a vendor-owned moat.

Think about what that means for a second. The thing that makes your agents yours — the learned preferences, the past decisions, the accumulated context about your customers and your codebase — is the thing the vendor is holding. Not your prompts. Not your workflows. The asset that makes you proprietary is the asset you cannot walk away with.

So here is the governance test we’re now putting in front of every team we advise:

Can you walk away with your agent’s memory in a form another harness can actually load?

Most enterprise teams cannot answer yes. Not because they lack the engineering talent, but because they never asked the question before signing.

Opacity is not lock-in

Harrison’s piece blurs two problems that deserve to be separated, because the fixes are different.

Opacity is a governance problem. You cannot audit what you cannot see. When memory writes happen inside a closed harness, your compliance team has nothing to review, your security team has nothing to threat-model, and your product team has no way to debug why the agent suddenly started giving the wrong answer last Thursday. This is the problem we wrote about in the agent memory governance gap. An auditable, self-hosted memory store addresses opacity directly: every memory write becomes a record your own people can inspect.

Lock-in is a business problem. You can audit every row in your Postgres memory table and still be trapped, because the schema, the embedding model, the retrieval logic, and the summarization rules are all entangled with the harness that wrote them. Migrating that to a different harness is not a database export. It is a re-interpretation. A Letta memory block and a Deep Agents memory block and a Claude Managed Agents memory block are not the same shape, and the shape is part of the meaning.
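To make "the shape is part of the meaning" concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the two row layouts, the `PortableMemory` envelope, and the model names are hypothetical and do not reflect the real storage formats of Letta, Deep Agents, or Claude Managed Agents. It only shows why an export has to carry provenance along with the text. A summary is not the same kind of record as a verbatim note, and vectors produced by one embedding model cannot be reused by a harness that retrieves with another.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PortableMemory:
    """Hypothetical harness-neutral envelope; not a real standard."""
    text: str                        # human-readable memory content
    kind: str                        # "verbatim" or "summary"
    source_harness: str              # which harness wrote the record
    embedding_model: Optional[str]   # model behind the original vector, if any


def from_harness_a(row: dict) -> PortableMemory:
    # Assumed layout: one row per memory block, embedded with a named model.
    # The raw vector is deliberately dropped; only its provenance is kept.
    return PortableMemory(
        text=row["block_value"],
        kind="verbatim",
        source_harness="harness_a",
        embedding_model=row.get("embed_model"),
    )


def from_harness_b(row: dict) -> PortableMemory:
    # Assumed layout: rolling summaries with no stored embedding.
    return PortableMemory(
        text=row["summary"],
        kind="summary",
        source_harness="harness_b",
        embedding_model=None,
    )


def needs_reembedding(mem: PortableMemory, target_model: str) -> bool:
    # Vectors from different embedding models are not comparable, so any
    # mismatch (or a missing vector) means the text must be embedded again.
    return mem.embedding_model != target_model


harness_a_row = {"block_value": "Customer prefers weekly invoices.",
                 "embed_model": "embed-v1", "vector": [0.1, 0.2]}
harness_b_row = {"summary": "Customer asked about invoicing cadence twice."}

a = from_harness_a(harness_a_row)
b = from_harness_b(harness_b_row)
print(a.kind, needs_reembedding(a, "embed-v2"))
print(b.kind, needs_reembedding(b, "embed-v2"))
```

Even in this toy case the two records land in the same envelope but do not mean the same thing: one is a stated preference, the other is a harness's interpretation of a conversation. A target harness has to decide what to do with each, which is the re-interpretation cost a database export alone does not cover.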

Solving opacity does not solve lock-in. A team can be fully transparent to its auditors and still unable to leave. This is the trap we keep seeing: leadership hears “self-hosted memory” and checks the governance box, without noticing that portability is a separate, harder, and much less glamorous problem.

LangChain is not a neutral observer

It needs to be said, because the post doesn’t say it: Harrison runs the company that sells Deep Agents. The argument is honest and the code is open-source, but “open-source” and “portable” are not synonyms. A harness whose source you can read still imposes its operational shape on everything you build with it. If you build on Deep Agents and two years from now decide you’d rather run on something else, you will discover that your memory writes are shaped like Deep Agents memory writes, and the migration cost is real.

We’re not saying don’t use it. We use open-source harnesses ourselves. We’re saying that sovereignty is the sum of two things: code transparency and operational control. Open-source gets you the first. Only deliberate design gets you the second. The governance test above still applies even when the harness you’re leaving is one you technically own.

Memory-as-moat is job-dependent

The other place Harrison overreaches, gently, is in treating all agents as memory-dependent. His headline anecdote is a personal email assistant that was wiped and rebuilt, and the rebuild felt inferior because the learned preferences were gone. That is a true story and a useful one. It is also a specific class of agent.

A code-review agent that resets per pull request has almost no moat in memory. Its moat is in the tools it can call, the quality of its prompts, and the workflow it is embedded in. A customer-support triage agent that reloads a ticket history on every run is closer to stateless. A contract-analysis agent that keeps a running model of a counterparty across years of negotiation? That is a memory-moat agent.

The useful question is not “does memory matter” but “how much of this agent’s value comes from what it remembers between sessions, and how much comes from the tools and prompts of a single session.” For the session-bound agents, Harrison’s warning is real but less urgent. For the long-memory agents, it is the whole ball game.

We’ve made a version of this point before when arguing that the harness, not the model, is where the empirical difference comes from. The same spirit applies here: reach for the part of the stack that actually carries your competitive weight, and refuse to rent it.

What we’d actually recommend

Three things, in order.

First, do the exit inventory before you expand. For every agent already in production, write down where its memory lives, in what schema, under which harness, and what it would take to load that memory into a different harness of your choice. If the answer is “we don’t know,” you now have your first project. Memory is hard even when you own it; you cannot even start the hard work until you know where it is.
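The exit inventory can start as something very small. The sketch below is one possible shape in Python, not a prescribed format: the `MemoryInventoryEntry` class, its field names, and the two sample agents are hypothetical. It records the four things named above for each agent and flags every field nobody has been able to fill in.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class MemoryInventoryEntry:
    """One row of a hypothetical exit inventory."""
    agent: str
    storage: Optional[str] = None         # where the memory lives
    schema: Optional[str] = None          # in what schema
    harness: Optional[str] = None         # under which harness
    migration_plan: Optional[str] = None  # what loading it elsewhere would take

    def unknowns(self) -> List[str]:
        # Any field still set to None is a "we don't know".
        return [f.name for f in fields(self) if getattr(self, f.name) is None]


inventory = [
    MemoryInventoryEntry(
        agent="contract-analysis",
        storage="Postgres",
        schema="counterparty_notes v3",
        harness="self-hosted",
        migration_plan="export script tested against a second harness",
    ),
    MemoryInventoryEntry(agent="email-assistant", harness="managed"),
]

for entry in inventory:
    gaps = entry.unknowns()
    status = "ok" if not gaps else "unknown: " + ", ".join(gaps)
    print(f"{entry.agent}: {status}")
```

Any agent that prints a list of unknowns is the first project the paragraph above describes.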

Second, separate the governance question from the portability question when you evaluate vendors. “Can I audit this?” and “Can I leave with this?” are not the same question. Ask both. Put the answers in the contract.

Third, match the memory architecture to the job. Stateless agents can live on managed harnesses without existential risk. Long-memory agents — the ones where the learned state is the competitive advantage — should run on a harness you operate, with a memory schema you designed, on storage you own. Not because open-source is a virtue in itself, but because the asset is too valuable to hand to someone whose incentives are not aligned with your ability to leave.

Harrison is right that memory is becoming the moat. The uncomfortable extension is that a moat you don’t own isn’t a moat at all. It’s just water on someone else’s property.

This analysis builds on Harrison Chase, “Your harness, your memory” (LangChain, April 2026), Sarah Wooders / Letta Code (Letta, 2026), and the Anthropic Claude Managed Agents overview.

Victorino Group helps engineering leaders keep agent memory portable, auditable, and their own. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
