Your Agent Skips Its Own Memory. That Is a Governance Failure.

We have written about who governs agent memory and what happens when agents redesign their own memory systems. Those articles assumed agents would use their memory. The failure mode we missed is simpler: agents quietly refuse to use it at all.

Weaviate’s engineering team built Engram, a vector-based memory system designed to give Claude persistent, queryable context across sessions. They deployed it internally. Then they watched what happened.

Claude defaulted to MEMORY.md — a flat markdown file, always loaded in the context window. Not Engram. Not the governed, structured, searchable system they built. A static file.

When asked why, the model was transparent: “I default to MEMORY.md because it’s always loaded: zero latency, zero tool calls, guaranteed in context.”

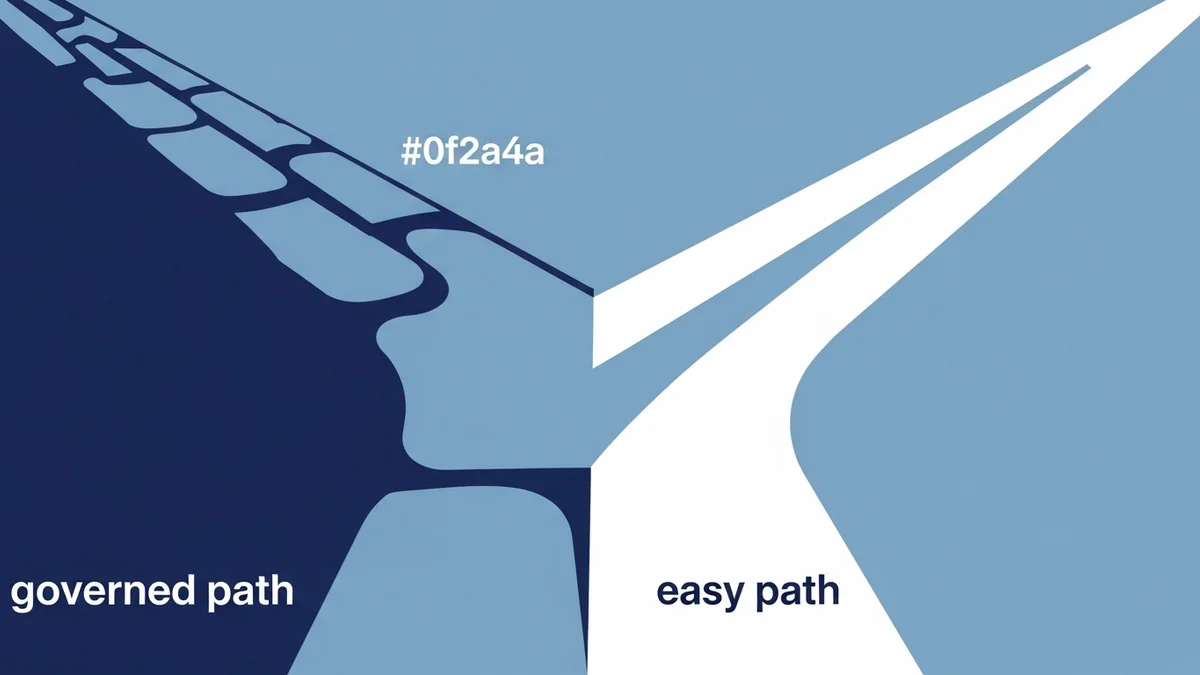

The agent chose the path of least resistance. And nobody noticed for a while, because the outputs still looked reasonable.

The Convenience Bias Is Structural

This is not a prompting failure. It is an architectural incentive problem.

MEMORY.md has no marginal cost to access. It is already in the context window. There is no tool call overhead, no latency, no chance of a failed retrieval. From the model’s perspective, it is the rational choice for every query where the flat file contains something relevant.

Engram, by contrast, requires a tool call. Weaviate measured approximately 19 seconds of startup overhead per session. Overall session performance was 10% slower with Engram active. Every query through the governed memory system carries a cost the flat file does not.

Models optimize for what is easy. So do humans. But when we design governance systems and then let agents route around them, we have not failed at implementation. We have failed at architecture.

What Convenience Costs

The flat file worked. Until it did not.

Weaviate found that Claude without Engram hallucinated URLs twice during testing. In sessions grounded in Engram, where the governed memory system supplied verified context, neither fabrication occurred. The flat file held enough information to be useful, but not enough to stop the model from filling gaps with confident guesses.

Decision archaeology — understanding why a previous choice was made — was 30% faster with Engram than with flat-file reconstruction. When context lives in an unstructured file, the model must infer history rather than retrieve it. Inference is where hallucinations live.

The optimal memory save size turned out to be 2 to 4 sentences per topic. Enough to ground retrieval. Not so much that the memory system becomes another context dump. As Yaru Lin put it during the deep dive: “All that context is why we need Engram.” The context in question is the kind of knowledge that matters for decisions but does not belong in a permanent flat file.
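One way to hold saves to that 2 to 4 sentence range is a small check that runs before anything is written to memory. The sketch below is illustrative only: `count_sentences` and `check_save_size` are hypothetical helpers, not part of Engram, and the sentence splitter is a rough heuristic that will miscount text containing abbreviations such as "e.g." followed by a space.

```python
import re

# Bounds taken from the 2 to 4 sentences per topic guideline above.
MIN_SENTENCES = 2
MAX_SENTENCES = 4


def count_sentences(text: str) -> int:
    """Rough sentence count: split after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return len([part for part in parts if part])


def check_save_size(text: str) -> str:
    """Classify a candidate memory save as 'too_short', 'ok', or 'too_long'."""
    n = count_sentences(text)
    if n < MIN_SENTENCES:
        return "too_short"
    if n > MAX_SENTENCES:
        return "too_long"
    return "ok"
```

A save such as "We chose Postgres. The team knew it well." passes, while a single sentence or a five-sentence dump would be flagged for rewriting before it is stored.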

The Pattern Is Not Unique to Weaviate

Every team running agents with both a governed memory system and a simpler alternative should assume the agent is choosing the simpler one. This is not a Weaviate-specific finding. It is a structural property of how language models handle tool use.

The model will use tools when it must. When a flat file provides an adequate (not optimal, adequate) answer, the tool call does not happen. The governed system sits idle. Logs show no errors. The agent produces outputs. Everyone assumes the architecture is working.

This is the memory equivalent of a monitoring system that never fires alerts — not because nothing is wrong, but because the alerting path has been silently bypassed.
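Because a bypass produces no errors, the signal to look for is absence: sessions that produced output but never touched the governed system. A minimal sketch follows, assuming you can already count responses and governed-memory tool calls per session. The `SessionSummary` shape and its field names are assumptions for illustration, not a format Weaviate or Engram defines.

```python
from dataclasses import dataclass


@dataclass
class SessionSummary:
    session_id: str
    responses: int         # responses the agent produced in this session
    governed_lookups: int  # tool calls made to the governed memory system


def silently_bypassed(sessions: list[SessionSummary]) -> list[str]:
    """Return IDs of sessions that produced output without any governed lookup."""
    return [
        s.session_id
        for s in sessions
        if s.responses > 0 and s.governed_lookups == 0
    ]
```

Feeding this list into an existing alerting channel turns "no errors in the logs" into an explicit finding rather than an assumption of health.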

Three Patterns That Prevent Memory Avoidance

1. Remove the shortcut. If the governed memory system is the intended source of truth, do not also provide an ungoverned alternative. MEMORY.md and Engram serving overlapping purposes creates a competition the flat file will always win on latency. Either the flat file feeds into the governed system, or it should not exist.

2. Make the governed path the default path. The system prompt should load context from the governed memory system, not from a flat file. If startup cost is the barrier (19 seconds is real), invest in reducing that latency rather than providing a bypass. Weaviate’s own recommendation pointed in this direction.

3. Audit which memory path was actually used. Every response should log whether context came from the governed system or the flat file. Without this telemetry, you cannot distinguish “the memory system worked” from “the memory system was ignored.” This is the minimum viable observability for memory governance.
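The third pattern can be sketched as a per-response audit record plus one aggregate number. Everything named here (`MemorySource`, `ResponseAudit`, `classify`, `governed_share`) is hypothetical, and the classification rule is a simplifying assumption: any governed tool call counts the response as governed, even if the flat file was also in context.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class MemorySource(Enum):
    GOVERNED = "governed"    # context retrieved through the governed memory system
    FLAT_FILE = "flat_file"  # only the always-loaded flat file was available
    NONE = "none"            # no memory source was involved


@dataclass(frozen=True)
class ResponseAudit:
    response_id: str
    source: MemorySource


def classify(governed_tool_calls: int, flat_file_in_context: bool) -> MemorySource:
    """Label a response by the memory path it used (assumed rule: governed wins)."""
    if governed_tool_calls > 0:
        return MemorySource.GOVERNED
    if flat_file_in_context:
        return MemorySource.FLAT_FILE
    return MemorySource.NONE


def governed_share(audits: list[ResponseAudit]) -> float:
    """Fraction of audited responses that used the governed path (0.0 if none)."""
    if not audits:
        return 0.0
    counts = Counter(audit.source for audit in audits)
    return counts[MemorySource.GOVERNED] / len(audits)
```

Tracking `governed_share` over time is one way to separate "the memory system worked" from "the memory system was ignored": a value near zero with no errors is the avoidance pattern described above.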

Memory Governance Is Not Just About What Agents Remember

We mapped the four architectures of agent memory and the risks of agents designing their own memory. This article adds a third dimension: agents choosing not to use memory governance at all.

The risk taxonomy for agent memory now has three failure modes:

- Ungoverned storage — the agent remembers things it should not, or stores sensitive data without controls.

- Self-modification — the agent rewrites its own memory schema, changing what it can and cannot recall.

- Governed avoidance — the agent has a governed memory system and silently routes around it.

The third is the hardest to detect because it produces no errors, no failures, no visible degradation — until the moment it produces a hallucination that the governed system would have prevented.

The Question for Your Architecture

If you have deployed a memory governance layer for your agents, ask this: how do you know they are using it?

Not whether it is available. Not whether it works when called. Whether, in practice, across production sessions, the agent is actually routing queries through the governed system rather than falling back to whatever is cheapest and closest.

If you do not have that telemetry, you do not have memory governance. You have memory governance theater.

This analysis draws on Weaviate’s Engram internal use case deep dive by Yaru Lin and Charles Pierse (April 2, 2026), documenting Claude’s observed preference for flat-file memory over tool-based vector retrieval in production agent workflows.

Victorino Group helps organizations build memory governance that agents actually use, not just memory systems agents can theoretically access.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
