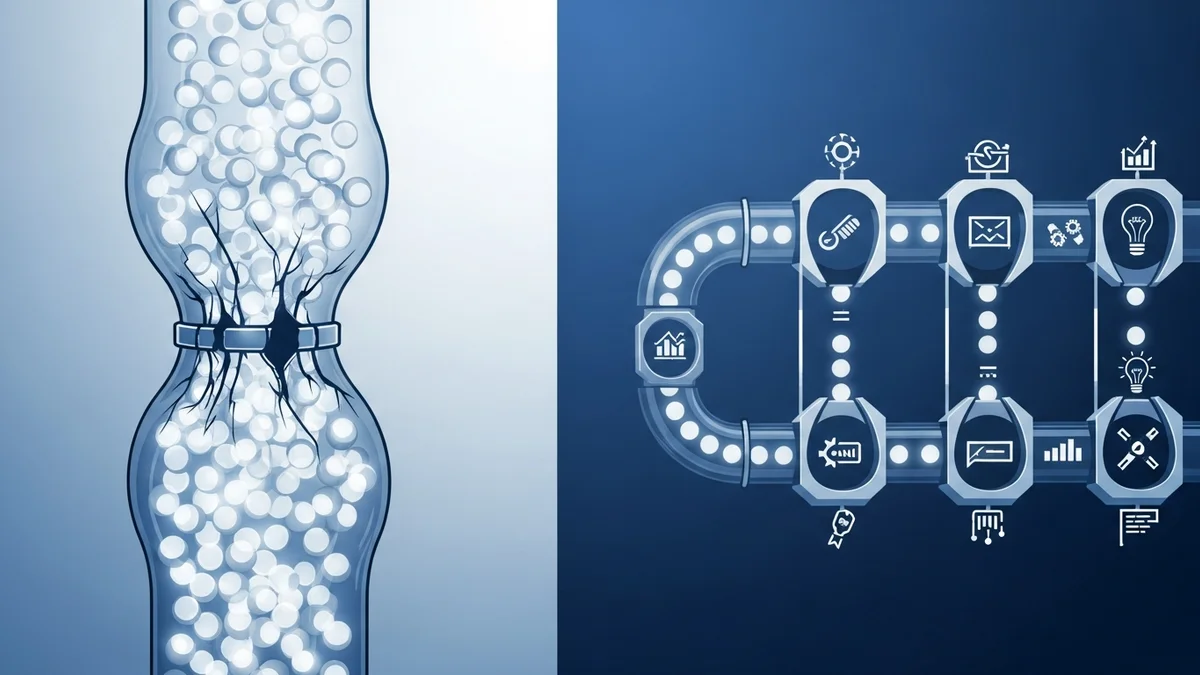

Anthropic Runs at One Nine. Meta's Answer Is Skills as the Throughput Substrate

On April 28, 2026, Anthropic had another outage. Roughly an hour and a half. In his post-mortem comment thread, Lorin Hochstein flagged the math that should make every platform leader pause: Claude Code availability is now sitting at “one nine over the past 60 days” and “two nines over 90 days.” For a service that engineering teams have wired into their daily critical path, that is the kind of number you usually only see on a status page from a regional ISP in a thunderstorm.
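To make the nines concrete, here is a minimal sketch of how much downtime each level permits over those windows. It assumes the common convention that n nines means availability of at least 1 - 10^-n. The function name is ours, and the outputs are ceilings implied by that convention, not measured downtime from Anthropic or from Hochstein's thread.

```python
def downtime_budget_hours(nines: int, window_days: int) -> float:
    """Maximum downtime (in hours) that still meets `nines` over the window."""
    unavailability = 10 ** -nines  # 1 nine -> 0.1, 2 nines -> 0.01
    return window_days * 24 * unavailability


# Windows taken from the quoted figures; the budgets are derived, not reported.
for nines, days in [(1, 60), (2, 60), (2, 90)]:
    budget = downtime_budget_hours(nines, days)
    print(f"{nines} nine(s) over {days} days: up to {budget:.1f} hours down")
```

Under that convention, a service that holds one nine but not two over 60 days has accumulated more than 14.4 hours of downtime in the window.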

The instinct, when an LLM provider misses its uptime, is to treat it as a vendor problem. File a ticket. Demand more capacity. Wait for the next post-mortem. But Hochstein’s framing in Life Comes At You Fast cuts the other way. He argues, plainly, that “LLMs are more likely to be on the supply side when it comes to risk of saturation.” They are not the relief valve. They are the firehose.

That is the line we want to sit with. Because the same week Anthropic was issuing apologies, Meta’s engineering team was publishing the architectural answer. And it is not an answer about more compute. It is an answer about how senior expertise gets encoded so the system can keep running when the load curve outpaces the people curve.

The Saturation Thesis

The naive model of AI in production assumes the model is a productivity multiplier on a fixed throughput baseline. Pour agents into the pipeline and watch the same workflows complete faster. The actual operating reality, as Hochstein points out, is the opposite. Agents generate PRs. Agents generate commits. Agents generate review requests. Agents generate test runs. GitHub queues are deepening, not because the platform got slower, but because the upstream supply of work expanded faster than the downstream capacity to absorb it.

We have written about this dynamic before, when the pipeline was still merely choking on its own success. Six weeks later, the choking is less metaphor and more measurement. CI queues lengthen. Validation slots get rationed. Inference providers themselves miss their nines. The supply side is the AI. The constraint is everywhere downstream.

This is the sentence that matters: a system whose binding constraint shifts from human effort to validation throughput cannot be governed with policies designed for human-paced work. The artifacts arrive too quickly. Reviewers cannot keep up. The escalation queue is the new technical debt.
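The saturation argument reduces to arrival rate versus service rate. The sketch below is a deliberately simple model of it, assuming constant daily rates; the function name and every number are illustrative assumptions, not figures from Hochstein or from any real queue.

```python
def backlog_over_time(arrivals_per_day: float, reviews_per_day: float, days: int) -> list[float]:
    """Open-item backlog at the end of each day, starting from an empty queue."""
    backlog = 0.0
    history = []
    for _ in range(days):
        # Work arrives, reviewers clear what they can, the remainder carries over.
        backlog = max(0.0, backlog + arrivals_per_day - reviews_per_day)
        history.append(backlog)
    return history


# Illustrative rates only: the same review capacity under two supply levels.
human_paced = backlog_over_time(arrivals_per_day=40, reviews_per_day=50, days=10)
agent_paced = backlog_over_time(arrivals_per_day=120, reviews_per_day=50, days=10)
print(human_paced[-1], agent_paced[-1])
```

With supply below review capacity the backlog stays at zero. Once supply exceeds it, the backlog grows by the difference every day (70 items a day here), and no review policy tuned for the first case will drain it.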

What Meta Actually Built

Meta’s Capacity Efficiency at Meta post is, on the surface, a technology announcement. They unified their internal performance and capacity tooling under an AI agent platform. There is an AI Regression Solver. There is FBDetect, which catches “thousands of regressions weekly.” There is a fix-forward routing system that sends automatically generated PRs to the original root-cause author. And there is the headline result: “hundreds of megawatts of power” recovered.

Read it once and it sounds like another hyperscaler bragging about hyperscaling. Read it twice and the actual blueprint comes through. Meta did not solve their saturation problem by hiring more performance engineers or by buying more datacenters. They solved it by separating two layers that most organizations still mix together.

The first layer is MCP tools. In Meta’s framing, each tool does one thing: query a metric, fetch a profile, look up a service owner. A tool is a verb in a finite, deterministic vocabulary. The agent does not decide what a tool means. The tool’s contract decides.

The second layer is skills. And this is where the framing earns its keep. Meta describes skills as encoded senior-engineer reasoning patterns. A skill is the workflow a tenured performance engineer would walk through when triaging a regression. Pull this metric first. If it crossed this threshold, pull that profile. If the profile shows this signature, check those callers. If the callers include this owner, file fix-forward in their queue. The skill is not the LLM’s improvisation. It is the senior engineer’s playbook, written down once, executed everywhere.

The AI Regression Solver compresses what used to be ten hours of manual investigation into roughly thirty minutes. That is not a model capability. That is a skill capability. The model is the engine. The skill is the route.
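The two layers can be sketched in a few lines. This is an illustration of the separation, not Meta's implementation: the tool functions, the canned data, the threshold, and the `triage_regression` skill are all hypothetical, and plain Python functions stand in for real MCP tools.

```python
from dataclasses import dataclass
from typing import Optional

# --- Tool layer: hypothetical single-purpose lookups with fixed contracts. ---
# Canned data stands in for real metric, profiling, and ownership systems.

def query_metric(service: str) -> float:
    """Return the CPU regression for a service, in percent."""
    return {"feed-ranker": 7.5, "ads-index": 0.4}[service]

def fetch_profile(service: str) -> str:
    """Return the hottest function in the service's latest profile."""
    return {"feed-ranker": "serialize_rows", "ads-index": "score"}[service]

def lookup_owner(function_name: str) -> str:
    """Return the owner responsible for a function."""
    return {"serialize_rows": "alice", "score": "bob"}[function_name]

@dataclass
class TriageResult:
    service: str
    action: str
    owner: Optional[str] = None

# --- Skill layer: the written-down playbook that sequences the tools. ---
REGRESSION_THRESHOLD_PCT = 5.0  # assumed threshold, for illustration only

def triage_regression(service: str) -> TriageResult:
    regression = query_metric(service)          # pull this metric first
    if regression < REGRESSION_THRESHOLD_PCT:   # below threshold: stop here
        return TriageResult(service, "no_action")
    hot_function = fetch_profile(service)       # crossed it: pull the profile
    owner = lookup_owner(hot_function)          # find the accountable owner
    return TriageResult(service, "file_fix_forward", owner)

print(triage_regression("feed-ranker"))
print(triage_regression("ads-index"))
```

The tools know nothing about triage, and the skill never reaches past a tool's contract. Changing the playbook means editing one function, which is what makes it reviewable.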

Why Skills Are the Substrate

We have argued before that skills are the right unit of modular AI governance. At the time, the argument was largely about safety and consistency: encode the constraint once, enforce it everywhere, do not let every team reinvent the same approval boundary. Meta’s deployment turns the same architecture into a throughput argument.

When senior expertise lives in heads, the only way to scale a triage workflow is to hire more senior engineers. When senior expertise lives in skills, the same workflow scales with compute. Meta’s FBDetect catches thousands of regressions weekly. There is no version of that headcount math that works. There is a version of the skill math that works, and it is the one in production.
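A back-of-the-envelope version of that headcount math, using the ten-hour and thirty-minute figures from Meta's post. The weekly volume of 2,000 regressions and the 40-hour week are our assumptions for illustration; the post says only “thousands,” and it does not say that every detected regression needs a full investigation.

```python
import math

def engineer_weeks_needed(regressions_per_week: int, hours_each: float,
                          hours_per_engineer_week: float = 40.0) -> int:
    """Full-time engineer-weeks of investigation needed to keep up each week."""
    return math.ceil(regressions_per_week * hours_each / hours_per_engineer_week)

ASSUMED_WEEKLY_REGRESSIONS = 2000  # hypothetical volume, not a reported figure

manual = engineer_weeks_needed(ASSUMED_WEEKLY_REGRESSIONS, hours_each=10.0)
with_skill = engineer_weeks_needed(ASSUMED_WEEKLY_REGRESSIONS, hours_each=0.5)
print(manual, with_skill)
```

Under these assumptions, manual triage would occupy 500 full-time engineers. The skill-driven version needs the equivalent of 25, and most of that time is compute rather than people.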

This is the reframe that changes how a platform team should think about its 2026 roadmap. The conversation about agents is no longer “should we let an agent touch X.” The conversation is “which of our senior engineers’ decision patterns are still trapped in their heads, and how fast can we move them into skills before the supply side overwhelms the review side.”

A few practical implications follow.

Skills are infrastructure, not content. They live next to your CI pipelines, your service catalog, your incident runbooks. They get versioned, reviewed, and tested the way deployment configuration gets versioned, reviewed, and tested. A skill that nobody has audited in six months is a regression vector, not a feature.

MCP and skills are not the same layer. Tools are deterministic verbs with stable contracts. Skills are reasoning patterns that compose those verbs. Mixing the two (letting a tool encode workflow logic, or letting a skill bypass the tool contract) collapses the architecture and reintroduces the unbounded improvisation skills exist to remove.

The fix-forward pattern is the governance lesson. Meta’s agent does not just detect a regression. It generates a corrective PR and routes it to the engineer whose change caused the regression. Detection without routing is a backlog. Routing without ownership is a queue. Only skills that close the loop (find the regression, fix it, send the fix to the accountable human) translate compute into recovered capacity.
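The routing step in the fix-forward pattern can be sketched as follows. The data classes, the commit-to-author index, and the `perf-oncall` fallback are all hypothetical; Meta's post describes the routing behavior, not this code, and the fallback is our own design choice so that no PR is ever left unowned.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Regression:
    service: str
    culprit_commit: str  # the change identified as the root cause

@dataclass(frozen=True)
class FixForwardPR:
    title: str
    reviewer: str

# Hypothetical commit -> author index; a real system would query version control.
COMMIT_AUTHORS = {"a1b2c3": "alice", "d4e5f6": "bob"}

def route_fix_forward(regression: Regression,
                      fallback_owner: str = "perf-oncall") -> FixForwardPR:
    """Assign the corrective PR to the author of the root-cause change."""
    # Unknown commits go to a fallback owner (our assumption) rather than nobody.
    reviewer = COMMIT_AUTHORS.get(regression.culprit_commit, fallback_owner)
    title = f"Fix forward: regression in {regression.service} from {regression.culprit_commit}"
    return FixForwardPR(title=title, reviewer=reviewer)

print(route_fix_forward(Regression("feed-ranker", "a1b2c3")))
print(route_fix_forward(Regression("ads-index", "ffffff")))
```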

The Question for Platform Teams

Anthropic’s one-nine stretch is not an indictment of Anthropic. It is a leading indicator of where every AI-dependent platform is heading if the supply-side pressure continues to outpace the substrate that turns model output into trustworthy work. You can wait for your provider to add nines, or you can build the substrate that makes those nines matter less.

The substrate is skills. Encoded senior expertise, versioned like infrastructure, executed by agents that hold deterministic tool contracts and route their outputs to accountable owners. That is the architecture Meta described in April. That is the architecture the saturation curve is going to demand of everyone else within the next two quarters.

The choice is not whether to adopt agents. The choice is whether the senior expertise in your organization stays trapped in calendars and Slack threads, or moves into the skill layer where it can absorb the throughput your agents are about to throw at it.

Skills are not optional infrastructure. At hyperscale, they are the only way the lights stay on.

This analysis synthesizes Life Comes At You Fast (Lorin Hochstein / Adaptive Capacity Labs, April 2026) and Capacity Efficiency at Meta, How Unified AI Agents Optimize Performance at Hyperscale (Meta Engineering, April 2026).


All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
