Anthropic and OpenAI Just Became Consulting Firms. Anthropic Already Owns Your Runtime.

In a single week of May 2026, two foundation labs stopped pretending they were API companies.

Anthropic announced a $1.5B forward-deployed-engineer joint venture with Blackstone, Hellman & Friedman, and Goldman Sachs. Each of the three put in $300M. Apollo Global Management, General Atlantic, GIC, Leonard Green, and Sequoia Capital filled the rest of the round. Two days later, OpenAI capitalized its Development Company at $10B, with $4B from 19 investors that include TPG, Brookfield, Advent, and Bain Capital.

Add the two together and you have $11.5B of new balance sheet aimed at one motion: send foundation-lab engineers into mid-market and enterprise customers, build the workflow around the model, then leave the workflow installed.

The Anthropic statement is precise about the motion. An engagement “might begin with the company’s engineering team sitting down with clinicians and IT staff to build tools that fit into the workflows that staff already use, across mid-sized companies across industries.”

That sentence describes a consulting firm. Read it twice. Forward-deployed engineering, embedded with the customer’s domain experts, building the tools that will live inside the customer’s operations. McKinsey wrote that sentence first. Accenture wrote it a thousand times. Anthropic just wrote it with a $1.5B war chest underneath.

This is not a partnership announcement. It is a category announcement.

The four-layer absorption

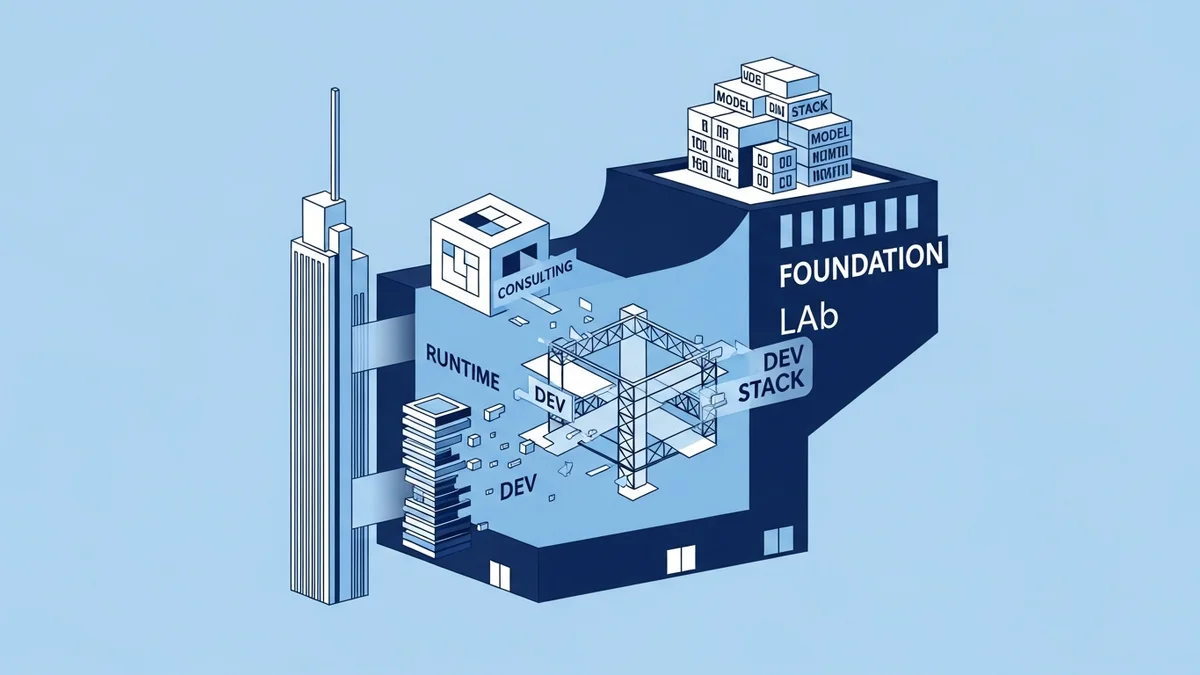

If you map what these companies now sell, the answer is no longer “the model.” It is everything between the model and the workflow.

Layer one is the model itself. The frontier weights, the tokenization, the reasoning. The thing the labs were chartered to produce.

Layer two is the runtime. Claude Code, Codex, the agent harness, the MCP plumbing. Anthropic shipped this as a product line over 2025 and it now runs on developer workstations and enterprise CI alike. We argued in “Claude Managed Agents are a Harness, Not a Wrapper” that the harness is the governance surface. That argument has aged into something blunter. The harness is also a procurement surface, and once you wire it into your delivery pipeline, switching costs are non-trivial.

Layer three is dev infrastructure. Bun, the JavaScript runtime and toolchain Anthropic acquired in December 2025, sits here. So do the package registries, build agents, and test runners that the labs are quietly absorbing or partnering with. The acquisition was friendly, and Bun stayed MIT-licensed. Five months later, an Anthropic engineering postmortem cited a string of Claude Code regressions in April 2026: reduced default reasoning effort, a stale-session bug, and “a prompt change that hurt coding quality.” The author of “I Am Worried About Bun” reads the pattern as textbook enshittification, the slow drift where a product gets worse for users in service of monetization.

Whether you accept that read or not, the structural fact is clear. The runtime your code executes inside, the model your agents call, and the harness that wires them together now share a balance sheet.

Layer four is consulting. The forward-deployed engineers. The workflow design. The “we will sit with your IT staff” promise. This is what the new $11.5B is buying. And consulting, more than any of the other layers, is the layer that decides which of the other three you end up using. Because the consultants will not recommend a competitor’s runtime. They will recommend the one that ships under the same logo as their badge.

Stack them and you have a single vendor that sells the model, the runtime, the dev infrastructure, and the engagement that installs all three. The labs did not announce this as a strategy. They built it transaction by transaction over eighteen months, and the May joint ventures are the moment the picture became impossible to misread.

The procurement pattern is not new

Enterprise buyers have seen this movie. The Big-3 cloud providers ran the same play in the 2010s. AWS sold compute, then storage, then databases, then identity, then ML services, then a consulting arm, then partner-services certifications. By 2018, every CIO with an AWS estate had a PowerPoint deck titled “multi-cloud strategy” and a real estate inside AWS that no multi-cloud strategy could meaningfully shrink.

We wrote about the OpenAI consulting alliance when the first signal showed up. The framing was about governance outsourcing. The May 2026 announcements convert that into a procurement thesis: foundation labs are now selling the same expand-into-everything bundle that hyperscalers sold a decade ago, on a faster timeline, with thinner regulatory scrutiny.

McKinsey’s scaling problem sits in the same neighborhood. The traditional consulting firms cannot expand AI delivery fast enough to keep their accounts. The labs can. The labs have the model, the engineers, and now the capital. What the labs do not yet have is the institutional memory that comes from forty years of mid-market client work. They are buying that capability by hiring it directly, with $11.5B underwriting the salaries.

What Bun shows about layer three

The Bun acquisition is the proof point that the consolidation is no longer hypothetical. It is the layer where customer pain is already showing up.

The April 2026 Claude Code regressions matter not because they were unusually severe. Every shipped product has bad weeks. They matter because the engineering postmortem showed the labs are now operating their dev tools the way ad networks operate. Default reasoning effort gets reduced to save inference cost. Prompt changes get pushed without coding-quality regression tests. Stale-session bugs slip past CI because the customer harness is downstream of the model and the model team owns the schedule.

The author of “I Am Worried About Bun” lays out the trajectory: an open-source runtime, acquired by a model company, keeps the same maintainer team for now but lives inside an organization whose business model depends on tokens consumed, not on JavaScript compile times. When those incentives diverge, the runtime drifts toward whatever serves the model business. The Bun maintainers have not announced any such drift. The Claude Code regressions show that drift is already happening one layer up.

Layer-three problems compound. If your build pipeline runs on Bun, your test runner uses Claude Code, and your agent infrastructure calls Anthropic models, a single business-model change at the lab becomes a single point of failure across three layers of your engineering operation.

The procurement question

We argued in “The Governed Runtime Is the New Cloud-Native” that runtime governance is the new infrastructure-as-code. Every governance argument we have made depends on a buyer-side assumption: that the buyer can swap providers if the controls degrade.

The May announcements squeeze that assumption. If the same vendor sells the model, the runtime, the dev tools, and the consultants who installed all three, the swap cost is not a technical exercise. It is an organizational reset.

Three questions belong on every CTO and CPO agenda this quarter.

First, how many of your AI-touching workflows now sit on infrastructure owned by the same lab? Run the inventory. Count the model contracts, the agent harnesses, the dev-tool dependencies, and the active consulting engagements. If the answer is “all four are Anthropic” or “all four are OpenAI,” you have a concentration problem the cloud era should have inoculated you against.
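One way to run that inventory is as a small script over a spreadsheet export. The sketch below is illustrative only: the `(workflow, layer, vendor)` row schema, the function names, and the sample rows are assumptions made for this example, not a standard format or real data.

```python
from collections import defaultdict

# The four layers named in this piece.
LAYERS = ("model", "runtime", "dev_infrastructure", "consulting")


def vendor_concentration(inventory):
    """Map each vendor to the set of layers where it appears.

    `inventory` is a list of (workflow, layer, vendor) tuples; this
    schema is an assumption made for the sketch.
    """
    layers_by_vendor = defaultdict(set)
    for _workflow, layer, vendor in inventory:
        if layer not in LAYERS:
            raise ValueError(f"unknown layer: {layer!r}")
        layers_by_vendor[vendor].add(layer)
    return dict(layers_by_vendor)


def fully_concentrated(inventory):
    """Return vendors that appear in all four layers, sorted by name."""
    return sorted(
        vendor
        for vendor, layers in vendor_concentration(inventory).items()
        if layers == set(LAYERS)
    )


# Hypothetical inventory rows, for illustration only.
example_inventory = [
    ("claims-triage", "model", "Anthropic"),
    ("claims-triage", "runtime", "Anthropic"),
    ("ci-pipeline", "dev_infrastructure", "Anthropic"),
    ("workflow-redesign", "consulting", "Anthropic"),
    ("support-bot", "model", "OpenAI"),
]

print(fully_concentrated(example_inventory))  # ['Anthropic']
```

Any vendor the script returns is one that holds all four layers somewhere in your estate. A stricter version would count per workflow rather than across the whole organization.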

Second, what does your exit look like? Not in theory. In a SOW. If your forward-deployed engineers leave next quarter, what knowledge transfers, what code transfers, and what stays on the lab’s GitHub? The contracting language matters more here than the technical architecture.

Third, who owns the layer-three regressions? When Claude Code degrades and your CI starts failing, whose pager goes off, and whose budget pays for the fix? The current default is “yours.” The labs do not yet offer SLAs on developer-tool quality the way AWS offers SLAs on EC2 uptime. That is a procurement gap, and it will close, but not in the buyer’s favor unless the buyer pushes.

What changes for buyers this quarter

Procurement teams that learned the multi-cloud lesson should already be modeling this. The lesson was not “use three clouds at once.” The lesson was “make sure your data, your identity, and your build outputs are not held hostage by any one provider’s roadmap.” The same lesson applies, with the foundation lab playing the role AWS played in 2014.

Concretely:

- Separate the model contract from the dev-tool dependency. Run Claude on Bedrock or Vertex if you can, so that your model spend is not bound to your runtime spend.

- Treat the harness as a swappable layer. If your agent code only runs inside one vendor’s harness, you have built on a frame, not a foundation.

- Push back on consulting engagements that recommend single-vendor stacks. The forward-deployed engineer is a brilliant resource. The forward-deployed engineer is also paid by the vendor whose products will end up in the recommendation.

- Audit your dev-tool supply chain. Bun is not the last open-source runtime a foundation lab will acquire. Know which of yours sit one acquisition away from a different incentive structure.
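The “swappable layer” point can be made concrete with a thin interface between workflow code and the harness. What follows is a minimal sketch under stated assumptions: `AgentHarness`, `VendorAHarness`, `VendorBHarness`, and `summarize_ticket` are hypothetical names, and the adapters are stand-ins that return strings rather than calling any real vendor SDK.

```python
from typing import Protocol


class AgentHarness(Protocol):
    """Hypothetical interface: the only surface workflow code depends on."""

    def run_task(self, instructions: str) -> str: ...


class VendorAHarness:
    """Stand-in adapter; a real one would wrap one vendor's SDK or CLI."""

    def run_task(self, instructions: str) -> str:
        return f"[vendor-a] {instructions}"


class VendorBHarness:
    """Second stand-in adapter, showing that the swap is one line."""

    def run_task(self, instructions: str) -> str:
        return f"[vendor-b] {instructions}"


def summarize_ticket(harness: AgentHarness, ticket_text: str) -> str:
    """Workflow code: knows the interface, not the vendor behind it."""
    return harness.run_task(f"Summarize: {ticket_text}")


# Swapping vendors changes only which adapter is constructed.
print(summarize_ticket(VendorAHarness(), "login fails on SSO"))
print(summarize_ticket(VendorBHarness(), "login fails on SSO"))
```

The design choice is that vendor-specific calls live only inside the adapters. If workflow code imports a vendor SDK directly, the harness is no longer a swappable layer in practice.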

The foundation labs did not become consulting firms by accident. They did it because consulting is the layer that decides what the customer buys at the other three layers. The labs that own all four win the decade. The buyers who notice this in May 2026 keep their optionality. The buyers who notice it in May 2027 will already be writing the same multi-vendor strategy memo, two cycles late.

The Big-3 cloud lesson cost the industry a decade of margin compression and lock-in remediation. The same lesson is on offer now, with a faster compounding rate. The price of learning it the second time should be lower. It will not be, unless procurement teams decide this quarter that “one vendor across four layers” is no longer an architecture they sign for.

This analysis synthesizes “Anthropic and OpenAI Launch Enterprise AI Joint Ventures” (TechCrunch, May 2026) and “I Am Worried About Bun” (wwj.dev, May 2026).

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
