AI Industrializes Coordination. Not All of It.

A CTO writing anonymously on Substack this month described a deployment that will get misread before it gets understood.

The claim: a 40-person B2B observability company reshaped its org chart around a LangGraph agent mesh. Roughly half a dozen middle-management seats dissolved into the system. Four ECS containers. About 4,000 lines of Python. Claude Sonnet 4.6 for routine reasoning, Opus 4.6 reserved for weekly roadmap decisions. Notion and pgvector as institutional memory. The cost, in the author’s own phrasing: “less per month in tokens than one senior engineer costs per week.”

That will circulate this quarter as the headline number. It is not the interesting part.

The interesting part is the sentence the author uses to justify the automation boundary: “The worst-case failure mode is an awkward paragraph in a Notion doc nobody reads.” That test does more honest work than most governance frameworks in circulation right now. It asks a simple question. What breaks when the agent is wrong? The test also breaks under pressure, and understanding where is the point of this essay.

The piece of the anecdote that gets flattened

Before extracting a principle, the anecdote deserves to be read carefully. The author writes that six of nine managers “moved on to other things.” Two destinations are named: one to a senior engineering seat, one to a customer-facing role. The other four are not accounted for. Voluntary? Managed? Severance? We do not know. “Moved on” is a phrase that covers a wide surface.

The company is not named. The author is pseudonymous. The Substack is days old. The post has a handful of shares. None of this makes the case fabricated; it makes it unverified. It is one coherent, internally consistent story from an author with reason to tell it well.

Treat it as a provocation, not a proof. That is how it behaves in what follows.

The taxonomy that makes the thesis usable

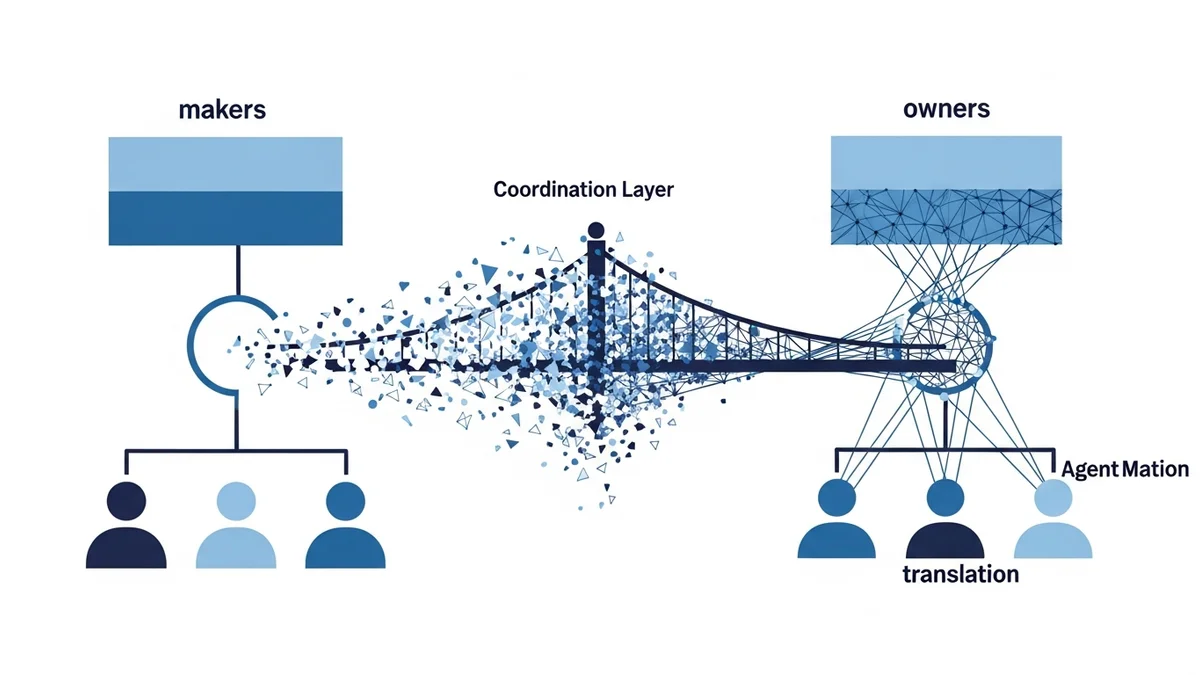

We mapped production patterns in Running AI Agents at Scale. This piece adds unit economics and an ethics caveat. The operational frame that makes the anonymous CTO’s story generalizable, and keeps it from getting misused, is a three-part split of what we call “coordination work”:

Status-and-sync coordination. Meeting notes. Standup updates. Jira grooming. Weekly roll-ups. Routing a ticket from support to the right engineer. Converting a customer email into an acceptance criterion. Updating a roadmap doc after a planning meeting. The failure mode is genuinely low-stakes: a paragraph is slightly wrong and someone fixes it, or nobody reads it. This is where the anonymous CTO’s $48K/year in tokens actually lives. This is also what Atlassian’s State of Teams 2025 measured when it found that 25% of knowledge-worker time is wasted searching for answers and 56% report that the only way to get information is to ask or meet. Asana’s multi-year Anatomy of Work research puts “work about work” at 60% of the knowledge worker’s day. That is the compressible surface.

Judgment coordination. Deciding whether a bug is a release blocker. Deciding whether a customer complaint escalates. Deciding whether a hire is a culture risk. Reconciling a design tradeoff between two seniors who disagree in good faith. The failure mode here is contested and consequential. An agent can produce a recommendation; a human must sign it. Automation assists. Automation does not decide.

Ownership coordination. Incident command. Launch-day decisions. Regulatory response. Performance reviews. Customer-blast communications. The failure mode is the business. These are not coordination tasks that happen to be important. They are roles, with accountability attached. When the system fails, a named human takes the hit.

The anonymous CTO’s case is strong evidence that the first category is compressible at a small, well-instrumented, unregulated SaaS. It is not evidence about the second or the third. The rhetoric of “AI eats middle management” collapses all three into one, and most of the bad decisions of 2026 will come from that collapse.
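The three-part split can be written down as a small policy table before any agent is deployed. What follows is a minimal sketch, not the anonymous CTO's implementation: the names `Category`, `Mode`, `POLICY`, `Task`, and `handling_mode` are hypothetical, and the mapping simply restates the taxonomy above (agents act on status-and-sync work, recommend on judgment work, and stay out of the decision seat on ownership work).

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    STATUS_AND_SYNC = "status-and-sync"
    JUDGMENT = "judgment"
    OWNERSHIP = "ownership"


class Mode(Enum):
    AGENT_ACTS = "agent acts; humans fix errors after the fact"
    AGENT_RECOMMENDS = "agent recommends; a named human signs"
    HUMAN_OWNS = "a named human decides and is accountable"


# Hypothetical policy table mirroring the three categories in the text.
POLICY = {
    Category.STATUS_AND_SYNC: Mode.AGENT_ACTS,
    Category.JUDGMENT: Mode.AGENT_RECOMMENDS,
    Category.OWNERSHIP: Mode.HUMAN_OWNS,
}


@dataclass(frozen=True)
class Task:
    name: str
    category: Category


def handling_mode(task: Task) -> Mode:
    """Look up how a task should be handled, based only on its category."""
    return POLICY[task.category]


if __name__ == "__main__":
    for task in (
        Task("weekly roll-up", Category.STATUS_AND_SYNC),
        Task("is this bug a release blocker?", Category.JUDGMENT),
        Task("incident command", Category.OWNERSHIP),
    ):
        print(f"{task.name}: {handling_mode(task).value}")
```

The hard part is not the lookup. It is assigning each task to a category honestly, which is a human decision the table cannot make for you.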

The counter-cases the cost math does not see

Ramp reshaped at $32B. The anonymous CTO’s story is the same logic at 40 people, with caveats Ramp does not have to carry.

Klarna announced in 2024 that AI was handling the work of 700 customer-service agents. By mid-2025 the CEO was rehiring humans and calling customer service “a VIP thing.” The agents had absorbed ticket triage (status-and-sync work) cleanly. They had also been pushed into complaint handling and de-escalation, which is relationship work, which is judgment and ownership. Coordination compressed. Ownership snapped back.

IBM’s AskHR handles roughly 94% of employee inquiries. The 6% residue is the hardest work: sensitive, ethical, emotional. Total IBM headcount grew, not shrank, after the announced HR automation. The net story is reshuffling, not elimination.

Runframe’s State of Incident Management 2026 reports that manual toil rose 30% in 2025 across the surveyed organizations, even as AI investment deepened. The mechanism is plain enough. AI generates surface area (more code shipped, more tickets opened, more changes merged) faster than it absorbs the operational load those changes create. If the thesis were “AI eats coordination,” toil should fall. It rose.

Gartner projects that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from under 5% in 2025. That is the direction. It is not the same as “coordination is solved.”

The case where the line blurs

Incident command is the cleanest test of the three-part taxonomy, because coordination and ownership sit in the same role. The incident commander assembles responders, routes information, decides who talks to the customer. AI agents are already absorbing the context-gathering and the first translation layer: summarizing logs, proposing a probable cause, drafting a customer statement. That is real. It is also not the point.

The IC’s accountability does not transfer. Someone named writes the postmortem. Someone named answers the CEO’s question three days later. The shape that emerges when the tooling is working is: AI does the coordination work, a human carries the coordination role. That distinction matters because it changes who gets hired, who gets re-skilled, and who gets laid off under the banner of “we automated coordination.”

The anonymous CTO’s observability company did not have to run a payroll team, defend a regulatory filing, or manage a public-facing incident this week. A 400-person company with a compliance obligation cannot apply the “awkward paragraph in Notion” test to its audit trail. Agent-authored coordination artifacts become discovery material. The failure mode is not a sloppy paragraph; it is a subpoena.

The unit economics, honestly

At Sonnet 4.6 blended pricing, the anonymous CTO’s token spend lines up with “less than one senior engineer’s weekly loaded cost,” roughly $3K to $5K a month depending on cache-hit rates, or $36K to $60K a year. Against six US middle-management seats at fully-loaded $200K to $250K each ($1.2M to $1.5M a year), the nominal ratio runs roughly 20x to 40x.
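The nominal ratio is simple interval arithmetic on the figures quoted in this section. The sketch below uses only those figures (six seats, $200K to $250K per seat, $3K to $5K a month in tokens); the function name `ratio_bounds` is ours and purely illustrative.

```python
def ratio_bounds(seats, seat_cost_low, seat_cost_high,
                 tokens_month_low, tokens_month_high):
    """Return (lowest, highest) ratio of annual manager cost to annual token cost."""
    tokens_year_low = tokens_month_low * 12
    tokens_year_high = tokens_month_high * 12
    # Lowest ratio: cheapest managers against the most expensive token bill.
    lowest = seats * seat_cost_low / tokens_year_high
    # Highest ratio: most expensive managers against the cheapest token bill.
    highest = seats * seat_cost_high / tokens_year_low
    return lowest, highest


low, high = ratio_bounds(6, 200_000, 250_000, 3_000, 5_000)
print(f"{low:.1f}x to {high:.1f}x")  # prints "20.0x to 41.7x"
```

The spread is wide because both inputs are ranges. Cache-hit rate alone moves the answer by a factor of two.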

The real math is worse by multiples. Eval infrastructure. On-call rotation for agent failures. The prompt and context-engineering work to keep the mesh honest. Switching cost when the next model ships and the current one deprecates. LangSmith or equivalent observability at $39 per seat. Regression testing. The author does not account for these, and the unit economics shift substantially when you do.

Loaded manager cost also varies 2x to 3x by market. A ratio that looks dramatic in San Francisco compresses in Lisbon or São Paulo. The story is specific. It is a profitable, six-year-old, 40-person, unregulated observability SaaS, a company whose own product makes agent-ops telemetry cheap to instrument. The generalization to a legal-services firm, a hospital, a public-sector contractor, or a bank is not a small extrapolation.

Even with a dedicated engineer supervising the mesh and reasonable infrastructure overhead, the math still works at roughly 3x in this case. That is real. It is also case-specific.
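To see how a ratio in the neighborhood of 3x can arise, add the omitted line items back in. This is a sketch under loudly stated assumptions: the $48K annual token figure is the one quoted earlier in this piece, but the $250K supervising-engineer cost and the $100K of combined eval, observability, and on-call overhead are hypothetical placeholders we chose for illustration. The author of the original post discloses neither.

```python
def all_in_ratio(manager_cost_year, tokens_year, engineer_year, overhead_year):
    """Ratio of replaced manager cost to the full annual cost of running the mesh."""
    mesh_cost_year = tokens_year + engineer_year + overhead_year
    return manager_cost_year / mesh_cost_year


TOKENS_YEAR = 48_000       # token spend quoted earlier in the article
ENGINEER_YEAR = 250_000    # ASSUMPTION: loaded cost of one supervising engineer
OVERHEAD_YEAR = 100_000    # ASSUMPTION: evals, observability, on-call, regression tests

# Six seats at $200K and at $250K, fully loaded.
low = all_in_ratio(6 * 200_000, TOKENS_YEAR, ENGINEER_YEAR, OVERHEAD_YEAR)
high = all_in_ratio(6 * 250_000, TOKENS_YEAR, ENGINEER_YEAR, OVERHEAD_YEAR)
print(f"{low:.1f}x to {high:.1f}x")
```

Under these placeholder inputs the token bill is the smallest line item, so the ratio is driven almost entirely by what the supervision and overhead actually cost. Swap in your own numbers before drawing a conclusion.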

What this is not

Cloudflare showed the velocity side. This is the cost-and-coordination side. The two stories are compatible, and they are both easy to misread.

This is not a layoff-celebration essay. Six human careers changed shape in the anonymous CTO’s story. Two of the six destinations are disclosed. The other four are not. The piece does not say whether the transitions were voluntary, whether severance was paid, whether the succession and mentoring load on the remaining three managers compounded. Those questions will not answer themselves in the next eighteen months, when the coaching and knowledge-transfer debt from the flattening becomes visible and the original decision cannot be re-litigated.

This is also not an argument that coordination automation is a mistake. It is an argument that treating “coordination” as a single category is the mistake. The work to do before deploying an agent mesh is to sort the three categories (status-and-sync, judgment, ownership) for your own business. The first is safe to industrialize now. The second requires a scoreboard. The third stays human, and will stay human for reasons that are not about the state of the models.

The operating principle

Automate where the worst case is a paragraph. Protect ownership where the worst case is an incident, a filing, or a person’s job. Treat judgment coordination as a gradient with a scoreboard, not a binary. Know which category you are automating before you deploy.
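One way to make “a gradient with a scoreboard” concrete is to log every agent recommendation next to the decision a human actually signed, then track agreement per decision type. The sketch below is a minimal illustration of that idea. The `Decision` record and `scoreboard` function are hypothetical names, and agreement rate is only one candidate metric.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    kind: str          # decision type, e.g. "release-blocker"
    agent_said: str    # what the agent recommended
    human_signed: str  # what the accountable human actually decided


def scoreboard(decisions):
    """Return the agent/human agreement rate for each decision type."""
    totals = defaultdict(int)
    agreed = defaultdict(int)
    for d in decisions:
        totals[d.kind] += 1
        if d.agent_said == d.human_signed:
            agreed[d.kind] += 1
    return {kind: agreed[kind] / totals[kind] for kind in totals}


log = [
    Decision("release-blocker", "block", "block"),
    Decision("release-blocker", "ship", "block"),
    Decision("escalation", "escalate", "escalate"),
]
print(scoreboard(log))  # {'release-blocker': 0.5, 'escalation': 1.0}
```

A high agreement rate is an argument for giving the agent more of the drafting. It is not an argument for removing the signature.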

The anonymous CTO’s essay will be cited by people skipping the second and third categories. That is the risk of writing operational principles in the middle of a layoff cycle. Our job is to name the categories cleanly, so the next CTO doing this work has a taxonomy to think with, not a headline to follow.

This analysis draws from “What If the Robots Came for the Org Chart?” (dontdos Substack, pseudonymous, April 2026), “Org Design in the Age of AI” (Robonomics Substack, April 2026), Chris Wardman’s “Why Bizware Is Becoming the Dominant Form of Software” (CIO.com, 2026), Klarna’s reversal on AI customer service (Entrepreneur, 2025), Gartner’s 40% enterprise-agent projection, the Atlassian State of Teams 2025 report, and Runframe’s State of Incident Management 2026.

Victorino Group helps teams draw the line between coordination that is safe to automate and ownership that must stay human, with a scoreboard for both. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
