Cloudflare Just Published Its Own Case Study

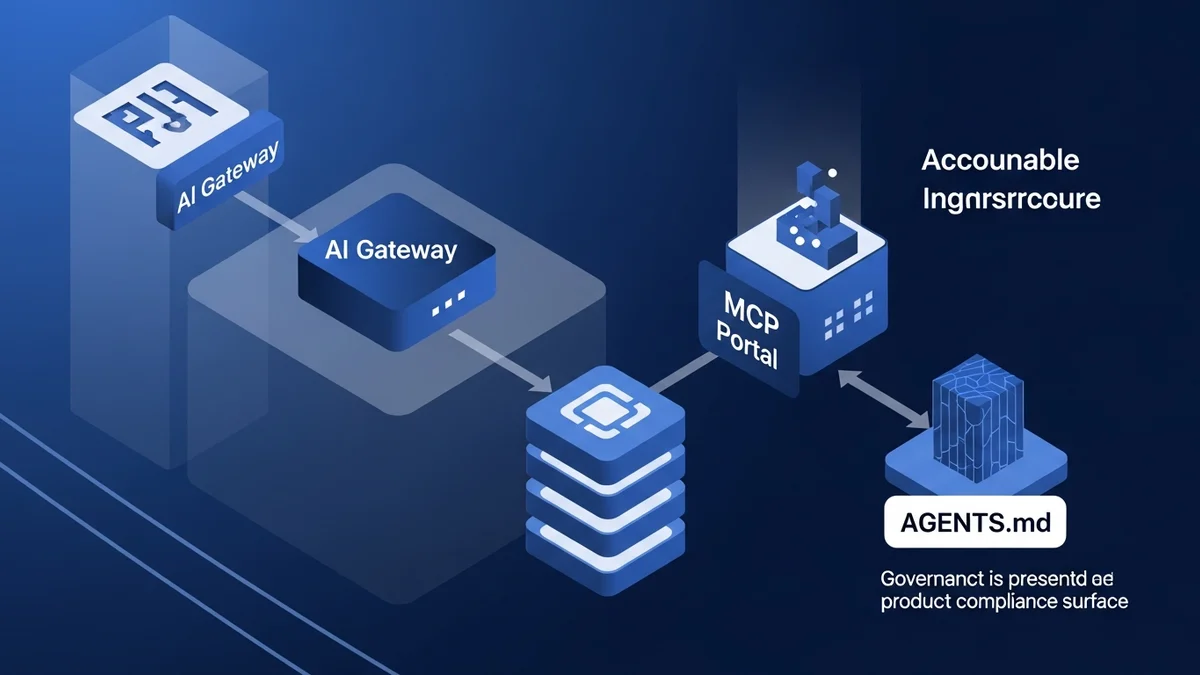

On April 20, Cloudflare published a post titled “The AI engineering stack we built internally, on the platform we ship.” Three engineers from the Developer Productivity team walked through how their own R&D organization uses AI Gateway, zero-trust access, the MCP Portal, AGENTS.md, and an AI Code Reviewer to run day-to-day engineering work.

Strip the branding and it reads like the reference architecture we have been describing for a year.

When we wrote about the four launches in one week, the argument was that Cloudflare had turned agent governance from a policy document into a product taxonomy: identity, network, cost, coordination. This new post closes the loop. The vendor shipping the taxonomy now uses the taxonomy. Publicly. With numbers.

The post is worth reading. It is also a marketing asset, published three days after Agents Week 2026 closed. Read it for the pattern. Discount the outcome claims by the obvious incentive. There is still enough signal left to matter.

What is actually new

The Cloudflare governance products are not new. The adoption data is. Five numbers stand out, and they only matter in a specific order.

Adoption first. Cloudflare reports 3,683 internal users of AI coding tools in a 30-day window. That is 60% of the company and 93% of R&D. The 93% is directionally above the Stack Overflow 2025 baseline of ~84%, not a breakthrough. It is also measured as “used at least once in 30 days,” with the R&D denominator undisclosed. The interesting number is not 93%. The interesting number is that an organization of ~6,100 people has roughly 3,700 of them routing every AI request through one control plane.
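The ~6,100 figure is not stated in the post; it falls out of the two numbers that are. A minimal sketch of that arithmetic follows. The upper bound on R&D headcount rests on one assumption, flagged in the comments: that every R&D user is counted inside the 3,683 total.

```python
# Figures quoted in the paragraph above.
active_users = 3_683    # users of AI coding tools in the 30-day window
company_share = 0.60    # "60% of the company"
rd_share = 0.93         # "93% of R&D"

# Company headcount implied by 3,683 users being 60% of everyone.
implied_company = active_users / company_share

# ASSUMPTION: R&D users are a subset of the 3,683. If so, R&D itself
# cannot be larger than this, even though its size is undisclosed.
rd_upper_bound = active_users / rd_share

print(f"Implied company headcount: ~{implied_company:,.0f}")
print(f"R&D headcount is at most:  ~{rd_upper_bound:,.0f}")
```

The bound does not recover the real R&D denominator. It only shows how much room the missing number has.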

Throughput second. Merge requests went from ~5,600 per week to ~8,700, peaking at 10,952 the week of March 23. The post frames this as a velocity result. It is partly a headcount result, since Cloudflare grew ~20% year over year, and possibly an MR-size result, since smaller merge requests inflate the count without adding output. The post does not disclose MR size, revert rate, or defect-escape rate. Throughput went up. Productivity is not proven. Treat the gap accordingly.
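A rough way to size the headcount effect is to divide it out. The sketch below assumes the ~20% growth applies evenly to the people opening merge requests and covers the same period as the MR comparison. The post confirms neither, so read the result as an order of magnitude, not a measurement.

```python
# Figures quoted in the paragraph above.
before_mrs = 5_600        # merge requests per week, earlier baseline
after_mrs = 8_700         # merge requests per week, current
headcount_growth = 0.20   # "~20% year over year"

# Headline growth in weekly merge requests.
raw_growth = after_mrs / before_mrs - 1

# ASSUMPTION: MR authors grew at the same ~20% over the same period.
# Dividing out headcount leaves the per-person change.
per_head_growth = (after_mrs / before_mrs) / (1 + headcount_growth) - 1

print(f"Raw MR growth:        {raw_growth:.1%}")
print(f"Per-head MR growth:   {per_head_growth:.1%}")
```

Under those assumptions the headline gain shrinks by roughly half before MR size is even considered.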

Review volume third. The AI Code Reviewer handled 5.47 million requests and 24.77 billion tokens in the same window. Every merge request gets an automated review pass before a human sees it. This is the number most people should care about. It is also the one with no outcome data attached. No acceptance rate. No false-positive rate. No real-bug-caught rate. Volume tells us the machine is running. It does not tell us the machine is useful.
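The two volume figures can at least be related to each other. This sketch derives tokens per request directly, then a much shakier requests-per-MR estimate. The second number assumes the ~8,700 weekly MR rate held across the 30-day window and that every reviewer request maps to a merge request. The post states neither, and it does not define what counts as a "request".

```python
# Figures quoted in the article.
reviewer_requests = 5_470_000
reviewer_tokens = 24_770_000_000
weekly_mrs = 8_700      # current weekly merge-request rate
window_days = 30

# Average size of one reviewer request.
tokens_per_request = reviewer_tokens / reviewer_requests

# ASSUMPTION: the weekly MR rate is representative of the whole window
# and all reviewer traffic is attributable to merge requests.
mrs_in_window = weekly_mrs * window_days / 7
requests_per_mr = reviewer_requests / mrs_in_window

print(f"Tokens per request:  ~{tokens_per_request:,.0f}")
print(f"Requests per MR:     ~{requests_per_mr:,.0f}")
```

Neither ratio says anything about usefulness. They describe load, which is the only thing the published numbers support.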

Context efficiency fourth. Cloudflare’s Code Mode collapsed ~15,000 tokens of MCP tool schemas into near-zero per request by converting them into TypeScript APIs executed in a sandbox. Good engineering, real savings, not a Cloudflare invention. Anthropic published the same mechanism in November 2025, reporting a 150K-to-2K reduction with filesystem-based MCP tool discovery. Cloudflare scaled the pattern. Anthropic framed it first.
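The core move, turning a tool schema into a typed API the model can call from code, can be illustrated in a few lines. This is a hypothetical sketch in Python standing in for the TypeScript the post describes. The tool definition, the type map, and `render_stub` are invented for illustration and are not Cloudflare's or Anthropic's implementation.

```python
# Hypothetical MCP-style tool definition; names and fields are illustrative.
TOOL = {
    "name": "get_service_owner",
    "description": "Look up the owning team for a service.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "service": {"type": "string"},
            "include_oncall": {"type": "boolean"},
        },
        "required": ["service"],
    },
}

# Minimal JSON Schema to Python type mapping (assumption: flat schemas only).
PY_TYPES = {"string": "str", "boolean": "bool", "integer": "int", "number": "float"}


def render_stub(tool: dict) -> str:
    """Render a typed function stub from a tool's input schema."""
    schema = tool["inputSchema"]
    required = set(schema.get("required", []))
    # Required parameters first, so the generated signature is valid.
    ordered = sorted(schema["properties"].items(), key=lambda kv: kv[0] not in required)
    params = []
    for name, spec in ordered:
        py_type = PY_TYPES.get(spec.get("type"), "object")
        if name in required:
            params.append(f"{name}: {py_type}")
        else:
            params.append(f"{name}: {py_type} | None = None")
    signature = f'def {tool["name"]}({", ".join(params)}) -> dict:'
    return f'{signature}\n    """{tool["description"]}"""'


stub = render_stub(TOOL)
print(stub)
```

The stub itself is not where the savings come from. The savings come from the model writing code against an API it looks up on demand, instead of carrying every schema in context on every request.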

Context files fifth. AGENTS.md in roughly 3,900 repositories, generated from an internal knowledge graph with 2,055 services, 375 teams, and 1,302 databases. This is the datapoint we were early on in Passive Context Wins. The thesis was that passive, always-present repo context outperforms active skill invocation. Cloudflare just ran that pattern at 3,900-repo scale. Worth noting: AGENTS.md is a Linux Foundation-stewarded open spec, contributed to by OpenAI, Google, Cursor, Amp, and Factory. Cloudflare is an adopter. The scaling achievement is generation throughput, not authorship.

What the post quietly concedes

Two concessions in the post do more work than the rest of the essay. The first is explicit: “A stale AGENTS.md can be worse than no file at all.” The second is implicit: it is what every missing metric says out loud.

The first sentence turns AGENTS.md from a README variant into a versioned control artifact. Generated files age. Enterprises that drop them into 3,900 repos and walk away will produce 3,900 liability surfaces, not 3,900 governance wins. The Cloudflare answer is to generate AGENTS.md from an Engineering Codex that cannot drift. The file is a projection of service-registry truth, not a hand-written doc. This is the pattern worth copying. The file count is not.
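The "projection of truth" idea is simple to sketch: render the file from the registry record, and treat any committed copy that differs from a fresh render as stale. The record fields, the header comment, and both function names below are assumptions made for illustration. The post does not describe the Engineering Codex schema or how Cloudflare detects drift.

```python
import hashlib


def render_agents_md(service: dict) -> str:
    """Render AGENTS.md deterministically from a service-registry record."""
    lines = [
        f"# AGENTS.md for {service['name']}",
        "",
        f"- Owning team: {service['team']}",
        f"- Databases: {', '.join(sorted(service['databases'])) or 'none'}",
        f"- Depends on: {', '.join(sorted(service['depends_on'])) or 'none'}",
    ]
    body = "\n".join(lines) + "\n"
    # Short content hash so a reader can see which registry state produced it.
    digest = hashlib.sha256(body.encode("utf-8")).hexdigest()[:12]
    return f"<!-- generated; source-hash: {digest} -->\n{body}"


def is_stale(committed: str, service: dict) -> bool:
    """A committed file is stale if it no longer matches a fresh render."""
    return committed != render_agents_md(service)


# Hypothetical registry record.
record = {
    "name": "billing-api",
    "team": "payments",
    "databases": ["ledger"],
    "depends_on": ["auth", "rates"],
}

committed_file = render_agents_md(record)
fresh = is_stale(committed_file, record)

# Ownership changes in the registry; the committed file has not been regenerated.
record["team"] = "revenue-platform"
drifted = is_stale(committed_file, record)

print(fresh, drifted)
```

A check like this belongs in CI or a scheduled job. It is also a natural source for the staleness distribution the post does not publish.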

The second concession is what the post does not contain. No cost per merged MR. No defect-escape rate. No revert rate. No security-incident rate. No dev-satisfaction score. No acceptance rate for the reviewer. No staleness distribution for AGENTS.md. The case study evidences adoption and architecture. It does not evidence outcomes. Those are different claims.

Why it still matters

When we argued that engineering has Cloudflare and marketing has nothing, the capability point was easy and the adoption point was open. How many engineering organizations were actually routing every LLM call through an identity-aware gateway? How many had an AI reviewer on every PR? How many had standardized repo-level context? The answer was mostly unknown. The reference case studies were private.

Cloudflare just made one public. It is self-serving, but it is legible. The shape is: gateway plus catalog plus portal plus reviewer plus context files, all behind zero-trust SSO, with usage telemetry that justifies the investment. That is the pattern. It is portable. Dynamic Workers and Workers AI are not portable; those are vendor lock-in. The pattern is.

The other reason to read it: the Anthropic “antfooding” disclosure via Lenny’s Newsletter reports the same direction from a different company. 67% more merged PRs per engineer per day. Code-review comment coverage rising from 16% to 54%. Stripe’s autonomous coding agents are now merging over 1,300 PRs per week under human review. Three companies, three architectures, the same lesson: infrastructure precedes AI velocity. The Faros AI productivity paradox documents what happens without that infrastructure: +98% PRs merged and +91% review time. Cloudflare’s move was to absorb the review-time tax with automation. That is the move that makes the adoption number survivable.

The useful read for a non-Cloudflare buyer

If you are a CIO at a non-platform company reading this post as a template, make three adjustments.

Do not chase 93%. Chase the control plane. The governance is the point; the adoption number is downstream. A company with a routed gateway and 60% adoption is in better shape than a company with 93% adoption and no gateway.

Do not treat MR velocity as a success metric. Treat it as an input to the question of whether your review gate can absorb the load. The Faros data is what “no review gate” looks like. Cloudflare’s 5.47 million reviewer requests is what “review gate can scale” looks like. The delta is what you are actually buying.

Do not copy the specific products. Copy the shape. Every enterprise needs an identity-gated control plane for AI traffic, a tool registry, a portal that aggregates MCP servers, an automated review pass with a defined false-positive budget, and a repo-level context file generated from a source of truth it cannot outrun. Cloudflare’s implementation is one instance. The pattern is the artifact.
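A "defined false-positive budget" only means something if someone measures against it. The sketch below shows one way to do that from human-labeled reviewer findings. The `Finding` fields, the 10% default budget, and the report shape are all hypothetical, chosen for illustration. None of them come from the Cloudflare post.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    accepted: bool        # a human acted on the reviewer's comment
    false_positive: bool  # a human marked the comment as wrong or noise


def review_gate_report(findings: list[Finding], fp_budget: float = 0.10) -> dict:
    """Summarize reviewer outcomes against a false-positive budget."""
    total = len(findings)
    if total == 0:
        # No labeled data means no verdict, not a passing one.
        return {"total": 0, "acceptance_rate": None,
                "false_positive_rate": None, "within_budget": None}
    acceptance_rate = sum(f.accepted for f in findings) / total
    false_positive_rate = sum(f.false_positive for f in findings) / total
    return {
        "total": total,
        "acceptance_rate": acceptance_rate,
        "false_positive_rate": false_positive_rate,
        "within_budget": false_positive_rate <= fp_budget,
    }


# Hypothetical labeled sample: 3 accepted, 1 false positive, 1 ignored.
sample = [
    Finding(accepted=True, false_positive=False),
    Finding(accepted=True, false_positive=False),
    Finding(accepted=True, false_positive=False),
    Finding(accepted=False, false_positive=True),
    Finding(accepted=False, false_positive=False),
]

report = review_gate_report(sample)
print(report)
```

These are the same rates the case study leaves out. An organization copying the shape can start collecting them on day one.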

This is roughly where we have been pointing Releezy. Guardian is the scoreboard equivalent of AI Code Reviewer: every change cites the rule that governed it. Loop is the accountable-throughput lane, with MR velocity preserved because review is automated, not skipped. Reviewer tiers models by risk the way Cloudflare tiered Workers AI against frontier providers. The point here is not the product. The point is that the architecture has a name now, and it has a public customer.

The honest summary

Cloudflare published a case study written by the vendor of the stack being described, timed to a launch week. Every metric selected goes up. The metrics that might have gone down are not in the post. This is how vendor-authored case studies work, and it is legitimate as long as readers apply the obvious discount.

What survives the discount is the pattern. A large infrastructure company now operates its engineering organization on a documented, multi-layered AI governance architecture, with telemetry proving the architecture is in use. “We will figure out agent governance later” lost another quarter of defensibility on Monday. The pattern is public. The adoption data is public. The outcome data is still private, which is the part of the conversation the next twelve months will have to finish.

This analysis draws from Cloudflare’s internal AI engineering stack (April 2026), Anthropic’s Code Execution with MCP (November 2025), Anthropic’s ‘antfooding’ disclosure via Lenny’s Newsletter (2026), the Faros AI Productivity Paradox (2025), and the Stack Overflow 2025 Developer Survey.

Victorino Group helps teams build accountable AI infrastructure: the scoreboard for humans and agents. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
