AI-on-AI Reverification: The Harness Lands in Incident Response

There is a sentence in the incident.io post on running an incident with AI SRE that is doing more work than its prose suggests. The author describes posting Claude Code’s proposed remediation back into the incident channel and then notes, almost in passing, that “everything you do in Claude and post back to the channel gets reverified by AI SRE. If you’ve made a mistake or forgotten something, it’ll nudge you about it.”

That is the pattern. Hold it.

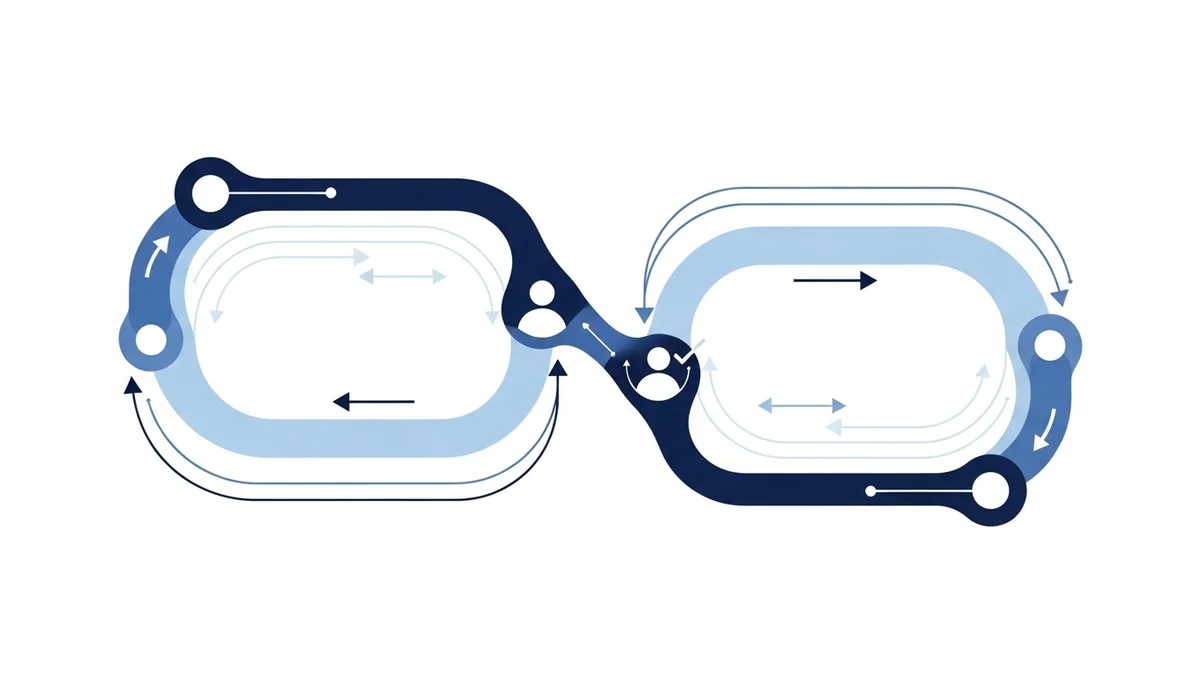

The investigator agent is checking the fixer agent. One AI is reading another AI’s output and pushing back. The human stays in the loop as the affirming party, but the verification step, which is the first thing to evaporate at 2am, is preserved by a different model than the one that wrote the fix.

We have written about the harness as the difference between agents that work and agents that drift. We have written about why monitoring agents at scale demands different instrumentation and about the four-floor containment stack the platform team needs in place. AI SRE is the first product we have seen that names the next pattern out loud: AI-on-AI reverification, shipped in incident response.

It deserves a careful read, with the caveats kept honest.

What incident.io Actually Built

The post describes a single live incident, run in roughly the following shape. Slack notifies the on-call engineer. AI SRE has already begun parallel investigation: it inspects recent deploys, telemetry, error rates, past incidents in the same surface area, the relevant code, and the Slack context of the channel. The macOS desktop app surfaces incident status in the notch — present without being intrusive. The engineer pins the incident, opens Claude Code, and runs /incident, which syncs AI SRE’s findings into the coding session. Claude Code drafts a remediation. Claude posts the proposed action back to the incident channel automatically; the engineer does not switch to Slack to narrate. AI SRE reads the post and reverifies. The PR is opened and merged. Resolution, the author writes, “took minutes” — most of which was waiting for the deploy.

Read it twice. The novel structure is not the speed and not the chat-to-PR flow. The novel structure is that the agent that investigates the incident is not the same agent that proposes the fix, and the investigator gets the last word on whether the fix matches the diagnosis.

That is a separation of concerns most production AI workflows do not have. In single-agent setups, the same model that decides what is wrong also decides what to change. Errors compound silently. The reverification loop forces an explicit handoff: the fixer’s output becomes the investigator’s input, and the investigator was already grounded in the actual telemetry, not in Claude’s narration of the telemetry.
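A minimal sketch of that handoff, in Python. Everything in it is hypothetical: the `Evidence` and `ProposedFix` shapes, the `reverify` function, and the two rule-based checks are illustrative stand-ins, not incident.io's implementation, which the post does not describe. In a real system the checks would presumably be a model call. The sketch only shows the structure: the verifier compares the fixer's output against evidence it gathered itself, and never sees the fixer's reasoning.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Evidence:
    """Facts the investigator gathered on its own (hypothetical shape)."""
    suspect_deploy: str
    affected_service: str


@dataclass(frozen=True)
class ProposedFix:
    """What the fixer posts back to the channel (hypothetical shape)."""
    summary: str
    reverts_deploy: Optional[str]
    touches_services: Tuple[str, ...]


def reverify(evidence: Evidence, fix: ProposedFix) -> List[str]:
    """Check the fixer's output against independently gathered evidence.

    Returns a list of nudges. An empty list means nothing to flag.
    """
    nudges: List[str] = []
    if evidence.affected_service not in fix.touches_services:
        nudges.append(
            f"Fix does not touch {evidence.affected_service}, "
            "where the errors were observed."
        )
    if fix.reverts_deploy is not None and fix.reverts_deploy != evidence.suspect_deploy:
        nudges.append(
            f"Fix reverts {fix.reverts_deploy}, but the suspect deploy "
            f"is {evidence.suspect_deploy}."
        )
    return nudges
```

Note that `reverify` takes no transcript of the fixer's session as input. If it did, the verifier would be grading the fixer's narration rather than the telemetry.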

Why This Is the Harness Pattern, Not Multi-Agent Theater

Multi-agent demos have been ambient in vendor decks for two years. Most of them are theater: agents talking to other agents in a graph, with no actual reduction in failure modes, often with more failure modes because every additional model call is another chance to hallucinate. AI-on-AI reverification looks similar from a distance and is structurally different up close.

Three properties separate it from theater.

First, the verifier is grounded in independent context. AI SRE looked at deploys, telemetry, and incident history before Claude Code wrote anything. It is not reviewing Claude’s reasoning; it is checking Claude’s output against an independently constructed ground truth. The architectural equivalent is the difference between code review by someone who read the spec and code review by someone who only read the diff.

Second, the human keeps decision authority by design. The flow is not “AI proposes, AI executes.” It is “AI proposes, AI checks, human affirms, then execute.” Claude asks the engineer “want me to commit this and open a PR?” The engineer says yes. AI SRE has already done its review by the time that yes happens. The human is not redundant; the human is the merge button.

Third, the verification is preserved when humans would have skipped it. At 2am, with revenue burning, an engineer who has just watched Claude generate a plausible patch will not run a 30-minute review. They will merge it. The reverification loop replaces the review the human cannot do at that hour with a review another agent can. That is the harness pattern’s actual job: make the discipline survive the moment when discipline is most expensive.
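The second and third properties can be sketched as a gate. This is an assumption-laden illustration, not the product's code: `run_gated`, `Outcome`, and the four callables are hypothetical names. What it shows is the ordering. The check always runs before the human is asked, the human sees the nudges alongside the proposal, and nothing executes without an explicit yes.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Outcome:
    executed: bool
    reason: str


def run_gated(
    propose: Callable[[], str],
    check: Callable[[str], List[str]],
    affirm: Callable[[str, List[str]], bool],
    execute: Callable[[str], None],
) -> Outcome:
    """AI proposes, AI checks, human affirms, then execute (hypothetical sketch)."""
    proposal = propose()
    # The verifier runs unconditionally; it is not something the human opts into.
    nudges = check(proposal)
    # The human decides with both the proposal and the verifier's nudges in view.
    if not affirm(proposal, nudges):
        return Outcome(executed=False, reason="human declined")
    execute(proposal)
    return Outcome(executed=True, reason="human affirmed")
```

The design choice worth noticing is that `check` is not skippable from the caller's side. The review survives 2am because the flow does not offer a path around it.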

We have argued before that the harness is what separates “we use AI agents” from “we operate AI agents.” This is the operating shape.

The Honest Caveats

This is not a launched product. incident.io says explicitly: “We haven’t fully launched yet” — UX is still being refined. The post is a narrative from the company about its own internal experience. It is not a customer reference, not a benchmark, not a third-party report.

The “took minutes” claim is not an MTTR number. It is one anecdote, with most of the time spent waiting for a deploy pipeline. There are no documented failure modes, no edge cases discussed, no cases where AI SRE missed something Claude Code got wrong, no cases where it nudged on a non-issue and added noise. The author flags that the team is “prioritizing correctness over speed,” which is the right priority and also the answer you give when speed is not yet where you want it.

None of this disqualifies the pattern. It clarifies what is being claimed. The pattern is real and named. The product implementing it is in the late stages of being ready to sell.

What Changes for SRE and Platform Leaders

If you run incident response, three things just shifted.

You can name what you are buying. “AI-on-AI reverification” is now a phrase with a reference implementation. When a vendor pitches “AI for incidents,” the question is no longer “does it help” but “where is the verification step, and is the verifier grounded in something other than the fixer’s output?” If the answer is “the same agent investigates and proposes,” you are looking at single-agent automation, not the harness pattern. Different category, different risk profile, probably different price.

The decision-authority boundary is becoming a configurable surface. “AI proposes, human affirms” is the safe default. “AI proposes, AI checks, human affirms” is the defensible default for high-stakes operations. Fully autonomous “AI proposes and executes” is a third category that the market has not yet found a place for in incident response, and probably will not for two more years. Knowing which mode you are operating in, on which class of incidents, is the new policy decision.
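One way to make that policy decision explicit is to write it down as configuration. The sketch below is hypothetical throughout: the `Mode` names, the severity labels, and the mapping are assumptions for illustration, not anything incident.io has published. It also assumes that an unrecognized incident class should fall back to the most heavily checked mode rather than to autonomy.

```python
from enum import Enum


class Mode(Enum):
    HUMAN_AFFIRMS = "ai_proposes_human_affirms"
    AI_CHECKS_HUMAN_AFFIRMS = "ai_proposes_ai_checks_human_affirms"
    AUTONOMOUS = "ai_proposes_and_executes"


# Hypothetical incident classes; substitute your own severity scheme.
POLICY = {
    "sev1": Mode.AI_CHECKS_HUMAN_AFFIRMS,
    "sev2": Mode.AI_CHECKS_HUMAN_AFFIRMS,
    "sev3": Mode.HUMAN_AFFIRMS,
}


def mode_for(incident_class: str) -> Mode:
    """Look up the operating mode; unknown classes get the most checked mode."""
    return POLICY.get(incident_class, Mode.AI_CHECKS_HUMAN_AFFIRMS)


def requires_human(mode: Mode) -> bool:
    """Every mode except full autonomy needs a human yes before execution."""
    return mode is not Mode.AUTONOMOUS
```

Nothing in this table maps to `Mode.AUTONOMOUS`. The mode exists in the enum so that choosing it would be a visible, reviewable change rather than a silent default.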

The harness is becoming a build-versus-buy question. Internal teams have been hand-rolling reverification: a second model call that re-reads the first model’s output, perhaps with a different system prompt. AI SRE is the first product to make the verifier a separate agent grounded in independent telemetry. If the buy-side of this question is in flight in your org, this is the moment to see what shipped before you finish building.

The pattern is not finished. The pattern is named. Naming is what makes a category buyable, and once a category is buyable, the next two years of AI operations get argued in those terms whether your team adopts them or not.

This analysis synthesizes “How It Feels to Run an Incident with AI SRE” (incident.io, April 2026).

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
