The Review Layer Paradox: When AI Governance Becomes the Bottleneck It Was Meant to Prevent

Avery Pennarun, CEO of Tailscale, published a piece last week that should be required reading for anyone building AI governance systems. His thesis is deceptively simple: each layer of review you add to a process makes it roughly 10x slower. Not 10% slower. Ten times slower. The delays compound exponentially, not linearly.

A bug fix takes 30 minutes to write. Add peer review and it ships in about 5 hours. Add design approval and it takes a week. Add cross-team scheduling and you are looking at 12 weeks.

Almost none of that time is spent working. Almost all of it is spent waiting.

The Math That Nobody Does

Most organizations feel this intuitively but never quantify it. Pennarun does. His 10x-per-layer model is deliberately simplified, but the underlying observation holds across every engineering organization I have worked with: review latency dominates cycle time. The actual work is a rounding error.
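The arithmetic behind the 10x-per-layer model is easy to check. Here is a minimal sketch, assuming a flat multiplier of 10 per layer and a 40-hour work week; the work week is our assumption for converting hours to weeks, not a figure from Pennarun's piece.

```python
# Sketch of the simplified 10x-per-layer model.
# WORK_HOURS_PER_WEEK is an assumption used only to convert hours into weeks.
WORK_HOURS_PER_WEEK = 40


def cycle_time_hours(base_minutes: float, review_layers: int, factor: float = 10.0) -> float:
    """Elapsed time in hours after multiplying by `factor` once per review layer."""
    return base_minutes * factor ** review_layers / 60


# Start from the 30-minute bug fix and add zero to three layers of review.
for layers in range(4):
    hours = cycle_time_hours(30, layers)
    weeks = hours / WORK_HOURS_PER_WEEK
    print(f"{layers} layer(s): {hours:g} hours, {weeks:g} work weeks")
```

Under those assumptions, one layer gives 5 hours, two layers give 50 hours (a bit over one work week), and three layers give 500 hours (about 12 work weeks), which lines up with the progression in the bug-fix example.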

Consider a three-layer approval process for deploying an AI agent to production. Layer one: technical review (does the code work?). Layer two: architectural review (does it fit the system?). Layer three: business review (should we ship this?). Each layer adds a scheduling dependency. Each scheduling dependency introduces wait time. The wait time at each layer is independent of the work itself.

A pull request that takes 20 minutes to review might sit in a queue for two days waiting for reviewer availability. Multiply that by three layers and the delivery window stretches from hours to weeks. Pennarun’s contribution is putting hard numbers on this pattern.
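To see how lopsided the split between waiting and working gets, here is a rough sketch that treats the three layers as strictly sequential. It assumes every layer has the same two-day queue and 20-minute review; the text gives those figures for a single pull request, so applying them to all three layers is our simplification, and `ReviewLayer` and `summarize` are illustrative names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ReviewLayer:
    name: str
    queue_minutes: float   # time spent waiting for a reviewer
    review_minutes: float  # time the reviewer actually spends on the change


def summarize(layers: List[ReviewLayer]) -> Tuple[float, float]:
    """Return (total elapsed minutes, share of that time spent waiting)."""
    waiting = sum(layer.queue_minutes for layer in layers)
    working = sum(layer.review_minutes for layer in layers)
    total = waiting + working
    return total, waiting / total


TWO_DAYS = 2 * 24 * 60  # minutes of calendar time

layers = [
    ReviewLayer("technical", TWO_DAYS, 20),
    ReviewLayer("architectural", TWO_DAYS, 20),
    ReviewLayer("business", TWO_DAYS, 20),
]

total, wait_share = summarize(layers)
print(f"total: {total / (24 * 60):.1f} days, waiting: {wait_share:.1%}")
```

With these inputs the change spends about six calendar days in the pipeline, and more than 99% of that is queue time. Rework loops and scheduling conflicts, which this sketch ignores, only push the total higher.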

The formula is not controversial. What follows from it is.

The Other Side of the Coin

We have argued, repeatedly and with data, that speed without governance is expensive chaos. Zach Wills’ 60 agents generating 77 overnight PRs with a 33% rejection rate. StrongDM’s $1,000-per-engineer-per-day Dark Factory. The evidence is clear: ungoverned speed produces waste.

Pennarun’s argument is the complement. Governance without speed produces paralysis. Both statements are true simultaneously. The question is where the optimal zone sits between them.

Most organizations, when confronted with AI’s speed, instinctively add review layers. The instinct makes sense. Faster output creates more risk surface. More risk surface demands more oversight. More oversight demands more approvals. Each approval layer makes perfect local sense.

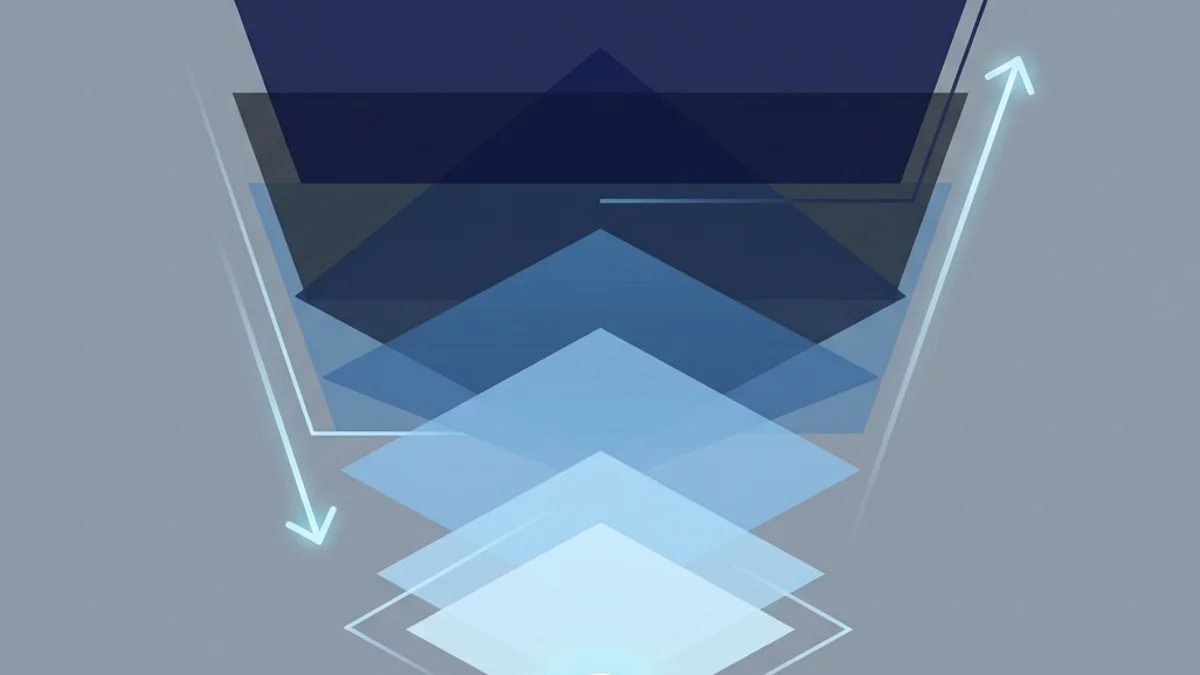

But the compounding effect means three “reasonable” review layers can turn a same-day delivery into a quarterly one. The governance system, built to prevent damage, becomes the primary source of organizational drag. This is the paradox: the controls designed to make AI safe end up making AI useless.

Deming Had the Answer Seventy Years Ago

Pennarun draws explicitly on W. Edwards Deming, and the connection is worth following. Deming spent decades arguing against inspection-based quality systems. His central insight: you cannot inspect quality into a product. You have to build it in.

Inspection-based quality works like this. Build the thing. Pass it to an inspector. If the inspector finds a defect, send it back. Fix it. Pass it to the inspector again. Every round trip costs time, and most of that time is wait time.

Built-in quality works differently. Design the process so defects cannot occur. Use statistical process control to detect drift before it becomes a defect. Give workers the authority and tools to stop the line when something goes wrong. The quality system is embedded in the work, not layered on top of it.

The analogy to AI governance is direct. Inspection-based governance: the agent writes code, a human reviews it, the human finds problems, sends it back. Built-in governance: the agent operates within constraints that prevent entire categories of error. Type systems. Automated test suites. CI pipelines that reject bad patterns before any human sees them. Architectural guardrails encoded in the tooling.

As we examined in the on-the-loop framework, CircleCI’s data shows this split in practice. The top 5% of engineering teams doubled their throughput. They did it by automating validation, not by adding reviewers. The other 95% added AI code generation on top of their existing review processes and saw throughput decline 7%.

“You Can’t Overcome Latency With Brute Force”

This line from Pennarun deserves to be a poster. Organizations keep trying to solve the review bottleneck by adding capacity: more reviewers, faster reviewers, AI-assisted reviewers. But the bottleneck is structural, not resource-constrained. Adding a faster reviewer to a three-layer process does not fix the scheduling dependencies between layers. It does not eliminate the queue time. It does not change the fact that three sequential approvals multiply wait times.

The same logic applies to AI agents. Making an agent write code faster does not help when the code sits in a review queue for a week. Making the reviewer faster does not help when the approved change waits two weeks for a deployment window. Pennarun’s point is that latency is a property of the system’s architecture, not a property of individual performance.

This has direct implications for organizations designing AI governance. If your governance model requires three sequential human approvals before an AI-generated change ships, the speed of the AI is irrelevant. Your delivery cadence is governed by the slowest approval chain, not by the fastest generator.

What Built-In Quality Looks Like for AI

If inspection-based governance creates the paradox, built-in governance resolves it. What does that look like in practice?

Constraint-based agent design. Instead of letting agents write anything and reviewing afterward, constrain what they can write. Scope their permissions. Limit the files they can modify. Define the architectural patterns they must follow. Prevention beats detection.
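One way to picture "limit the files they can modify" is a path allowlist that runs before an agent's change is accepted. The sketch below is illustrative only: the patterns and the `disallowed_paths` helper are hypothetical, not taken from any particular agent framework.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List

# Hypothetical policy: glob patterns for the paths this agent may modify.
# Note that fnmatch-style "*" also matches "/" and so covers nested directories.
ALLOWED_PATTERNS = ["docs/*", "tests/*", "src/reports/*.py"]


def disallowed_paths(changed_paths: Iterable[str],
                     allowed_patterns: Iterable[str] = ALLOWED_PATTERNS) -> List[str]:
    """Return the changed paths that match none of the allowed patterns."""
    patterns = list(allowed_patterns)
    return [
        path for path in changed_paths
        if not any(fnmatchcase(path, pattern) for pattern in patterns)
    ]


violations = disallowed_paths(["docs/setup.md", "src/payments/charge.py"])
if violations:
    print("rejected, out-of-scope paths:", violations)
```

A check like this rejects the change before anyone spends review time on it, which is the "prevention beats detection" idea in miniature.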

Automated validation at machine speed. Test suites, linting rules, type checking, security scanning. These run in seconds, not days. They eliminate entire categories of defect without human review. Every check you automate is a candidate for a human review step you can remove.
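As a toy illustration, an automated gate can be as simple as a list of functions that each inspect a diff and report a failure. The two checks below are hypothetical stand-ins; a real pipeline would call its own test runner, linter, type checker, and security scanner instead.

```python
import re
from typing import Callable, List, Optional

# Each check returns None on success or a short failure message.
Check = Callable[[str], Optional[str]]

# Hypothetical rule: an added line assigns a quoted literal to a secret-like name.
SECRET_PATTERN = re.compile(
    r"^\+.*(password|secret|api_key)\s*=\s*['\"]",
    re.IGNORECASE | re.MULTILINE,
)


def no_hardcoded_secret(diff: str) -> Optional[str]:
    return "possible hard-coded secret" if SECRET_PATTERN.search(diff) else None


def within_size_budget(diff: str, max_added_lines: int = 400) -> Optional[str]:
    # Count added lines, skipping the "+++" file header of a unified diff.
    added = sum(
        1 for line in diff.splitlines()
        if line.startswith("+") and not line.startswith("+++")
    )
    if added > max_added_lines:
        return f"{added} added lines exceeds budget of {max_added_lines}"
    return None


def run_gate(diff: str, checks: List[Check]) -> List[str]:
    """Run every check and return the failure messages; an empty list means pass."""
    failures = []
    for check in checks:
        message = check(diff)
        if message is not None:
            failures.append(message)
    return failures


example_diff = "+++ b/app.py\n+password = 'hunter2'\n+x = 1\n"
print(run_gate(example_diff, [no_hardcoded_secret, within_size_budget]))
```

The point is not these particular rules but the shape: the gate answers in milliseconds, and nothing waits in a queue.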

Risk-tiered oversight. Not all changes carry equal risk. A documentation update and a payment processing change should not pass through the same review chain. Route low-risk changes through automated verification only. Reserve human judgment for high-stakes decisions. As we noted in the governance renaming debate, the answer is not “kill review” but “direct review where it creates value.”
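Risk-tiered routing can be sketched as a small function over the paths a change touches. The tiers and patterns here are hypothetical; each organization would define its own. The one design choice worth copying is that anything unclassified falls back to human review.

```python
from enum import Enum
from fnmatch import fnmatchcase
from typing import List


class Route(Enum):
    AUTOMATED_ONLY = "automated checks only"
    HUMAN_REVIEW = "automated checks plus human review"


# Hypothetical tiers, expressed as glob patterns over changed paths.
HIGH_RISK = ["src/payments/*", "infra/*", "*.sql"]
LOW_RISK = ["docs/*", "*.md"]


def _matches(path: str, patterns: List[str]) -> bool:
    return any(fnmatchcase(path, pattern) for pattern in patterns)


def route_change(changed_paths: List[str]) -> Route:
    """Pick a review route: any high-risk path forces human review."""
    if any(_matches(path, HIGH_RISK) for path in changed_paths):
        return Route.HUMAN_REVIEW
    if changed_paths and all(_matches(path, LOW_RISK) for path in changed_paths):
        return Route.AUTOMATED_ONLY
    # Unknown or mixed changes default to the cautious route.
    return Route.HUMAN_REVIEW


print(route_change(["docs/setup.md"]).value)
print(route_change(["docs/setup.md", "src/payments/charge.py"]).value)
```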

Continuous monitoring over batch approval. Instead of approving changes before deployment, monitor behavior after deployment. Canary releases. Feature flags. Automated rollback triggers. This shifts the governance model from “prevent all bad changes from shipping” to “detect and reverse bad changes quickly.” The former adds latency. The latter removes it.
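An automated rollback trigger can be as small as a comparison between a canary's error rate and the baseline. This is a sketch under assumed thresholds: `max_ratio` and `min_requests` are illustrative defaults, and a production system would likely add statistical tests and more signals than error rate alone.

```python
def should_roll_back(canary_errors: int,
                     canary_requests: int,
                     baseline_error_rate: float,
                     max_ratio: float = 2.0,
                     min_requests: int = 500) -> bool:
    """Return True when the canary's error rate is too far above baseline.

    max_ratio and min_requests are hypothetical defaults for illustration.
    """
    if canary_requests < min_requests:
        # Not enough traffic yet to judge the canary either way.
        return False
    canary_rate = canary_errors / canary_requests
    return canary_rate > baseline_error_rate * max_ratio


# A canary at 3% errors against a 1% baseline crosses the 2x threshold.
print(should_roll_back(canary_errors=30, canary_requests=1000, baseline_error_rate=0.01))
```

Because the decision is made by the system after deployment, no approval queue sits in front of the change.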

The Two Failure Modes

Pennarun’s framework and our prior work define two failure modes that sit at opposite ends of the same spectrum.

Failure mode one: speed without governance. This is the 77-PR morning. Agents producing unchecked output. A 33% rejection rate. Compute and review time burned on work that should never have started. The symptom is waste.

Failure mode two: governance without speed. This is Pennarun’s 12-week bug fix. Three approval layers turning a 30-minute change into a quarterly project. Engineers spending 95% of their time waiting for permission to ship. The symptom is paralysis.

Most organizations oscillate between these poles. They start with too little governance, get burned, add layers. Then the layers calcify, delivery stalls, and someone proposes removing oversight to “move faster.” The cycle repeats.

The resolution is not a compromise between speed and governance. It is a different kind of governance. One where quality is built into the process rather than inspected after the fact. One where automated systems handle the verification that machines can do, and human judgment is reserved for the decisions that machines cannot make.

Why This Matters Now

The timing of Pennarun’s piece is not accidental. Every organization deploying AI agents faces this question in 2026. You have tools that produce output at unprecedented speed. Your existing review processes were designed for human-speed output. The mismatch creates pressure.

The wrong response is adding more review layers to compensate for AI speed. Each layer you add multiplies your delivery time roughly tenfold. Add three layers and you have turned your AI investment into a very expensive way to maintain your existing delivery cadence.

The right response is redesigning your governance model around built-in quality. Encode your standards in automated checks. Push oversight upstream into constraints. Reserve human review for decisions that require human judgment. Monitor outcomes continuously rather than gating inputs sequentially.

This is an engineering project, not a policy decision. It requires investment in test infrastructure, CI pipeline reliability, monitoring systems, and architectural guardrails. The organizations that make this investment will capture the speed advantage of AI agents. The ones that layer traditional review processes on top of AI output will spend more money to deliver at the same pace.

Pennarun’s 10x multiplier is the quantified version of something Deming said decades ago: inspection is too late. The quality, good or bad, is already in the product. Build it right, or review it forever.

This analysis builds on Every Layer of Review Makes You 10X Slower (March 2026) by Avery Pennarun, CEO of Tailscale.

Victorino Group helps enterprises design AI governance that accelerates delivery instead of blocking it: quality built in, not inspected in.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
