The Week Probabilistic Engineering Became Observable

On April 16, 2026, three unrelated authors published arguments that describe the same operating model in different vocabularies. Tim Davis, co-founder of Modular, called it probabilistic engineering. Meta’s infrastructure team described a unified AI agent platform that recovered hundreds of megawatts of capacity. monday.com introduced Morphex, an AI agent that merges roughly forty pull requests per week into a fifteen-year-old monolith and refuses to merge when it is unsure.

None of the three cites the others. They write from different altitudes, for different audiences, about different problems. And they converge.

This is the week the argument we have been making in essays became the architecture our largest reference companies ship in production.

The naming act

Davis supplies the vocabulary the industry was missing. “Software is quietly becoming a probabilistic system, and almost no one is saying it out loud.” He draws the sharpest line in one sentence: “The codebase stops being a thing you know works and becomes a thing you believe works, with a probability you can no longer precisely state.”

The shift he describes is epistemic, not operational. Traditional software engineering assumed correctness was knowable. Tests passed or they did not. Reviews caught bugs or missed them. The game was deterministic even when it was hard.

Agent-scale generation breaks that frame. When an agent can produce a five-hundred-line pull request in under a minute, and a senior engineer needs an hour to responsibly audit it, the asymmetry compounds until review becomes a bottleneck we cannot staff our way out of. Davis puts it cleanly: “Generation has become cheap, but validation has not.”
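The asymmetry is easy to put into numbers. The sketch below takes the two figures from the paragraph above (under a minute to generate, about an hour to audit) and treats them as fixed rates. The eight-hour day, the five-reviewer team, and the function names are illustrative assumptions, not figures from Davis.

```python
def reviewers_needed(gen_minutes_per_pr: float, review_minutes_per_pr: float) -> float:
    """Reviewers required to keep pace with one continuously generating agent."""
    return review_minutes_per_pr / gen_minutes_per_pr


def backlog_after(hours: float, gen_minutes_per_pr: float,
                  review_minutes_per_pr: float, reviewers: int) -> float:
    """Unreviewed PRs left after `hours`, given a fixed number of reviewers."""
    minutes = hours * 60
    generated = minutes / gen_minutes_per_pr
    reviewed = reviewers * minutes / review_minutes_per_pr
    return max(0.0, generated - reviewed)


if __name__ == "__main__":
    # One agent at one PR per minute, one hour of audit per PR.
    print(reviewers_needed(1, 60))               # 60.0
    # Hypothetical team of five reviewers over an eight-hour day.
    print(backlog_after(8, 1, 60, reviewers=5))  # 440.0
```

Under these assumptions a single agent running flat out would need sixty full-time reviewers, which is the sense in which review cannot be staffed out of.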

We have made a version of this argument before in The AI Verification Debt and Cheap Code, Expensive Quality. What is new in April 2026 is not the observation. It is that the observation now has a name, and two enterprises shipped systems built on it in the same week.

The hyperscale proof

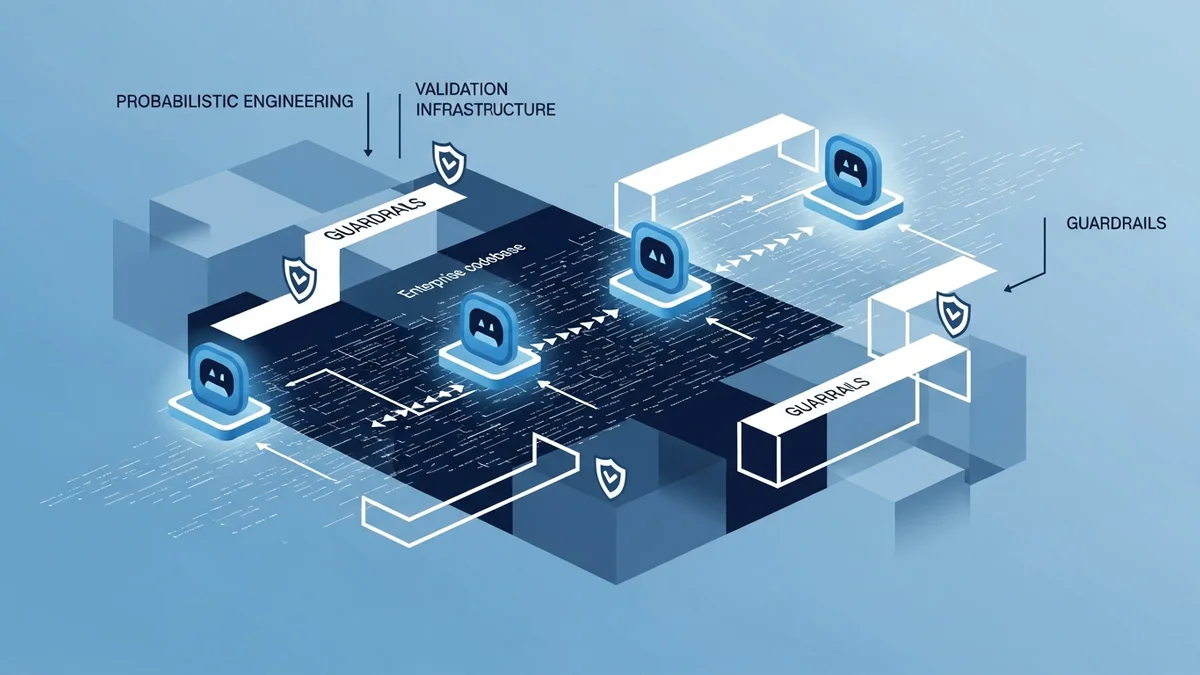

Meta’s capacity efficiency post reads as Davis’s thesis rendered in infrastructure. Authors Tommy Tran and Michael Zetune describe a platform where offense and defense agents share a common tool layer built on MCP (profiling, code search, configuration history, documentation retrieval) but diverge in encoded skills. The Defense agent, called AI Regression Solver, gathers symptoms, applies mitigation knowledge, and generates fix PRs for the original change author to review. The Offense agent produces candidate optimizations with guardrails and syntax validation.

The numbers, per the post:

- Hundreds of megawatts of capacity recovered

- Investigation time compressed from roughly ten hours to roughly thirty minutes

- Thousands of performance regressions caught every week via FBDetect

FBDetect itself is not new. The underlying system, published at SOSP ’24 and awarded Best Paper, has run in production for seven years, monitors about 800,000 time series, and detects regressions as small as 0.005%. The agent platform did not create the detection capability. It connected to it.

This is the important read. We have written elsewhere about Meta’s harness engineering as the governance frontier, a different case study from the same organization. The capacity efficiency program is a parallel proof point: generation throughput increases only when detection, attribution, and human review gates increase alongside it. The AI agent does not replace the validation substrate. It sits on top of it and inherits its guarantees.

Notice what Meta explicitly did not do. They did not auto-merge fix PRs. They routed generated PRs back to the original change author, because the original author has the context an agent cannot synthesize. The human stays in the loop where the loop is load-bearing.
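The routing decision can be sketched in a few lines. This is a minimal illustration of the policy as described above, not Meta's implementation: the `Regression` and `FixPR` types, their field names, and the `route_fix` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Regression:
    metric: str             # which signal regressed (hypothetical field)
    culprit_change_id: str  # the change the detector attributed it to
    culprit_author: str     # the human who wrote that change


@dataclass
class FixPR:
    title: str
    reviewers: List[str] = field(default_factory=list)
    auto_merge: bool = False  # this sketch never sets it to True


def route_fix(regression: Regression, patch_summary: str) -> FixPR:
    """Open a generated fix as a review request to the original change author."""
    pr = FixPR(
        title=f"Mitigate {regression.metric} regression from "
              f"{regression.culprit_change_id}: {patch_summary}"
    )
    # The author of the regressing change holds the context, so they review.
    pr.reviewers.append(regression.culprit_author)
    return pr
```

The design choice worth noticing is that `auto_merge` has no code path that sets it to `True`. The agent can propose, but only the named reviewer can land the change.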

The product-speed proof

If Meta proves the pattern at hyperscale, Morphex proves it at product speed. The monday.com engineering post is written in the first person, with Morphex narrating and the human team listed as collaborators: Moshe Zemah, Yossi Saadi, Amit Hanoch, Tom Bogin, Alon Segal, Oron Morad. The rhetorical device is a tell. The authors wanted the scaffolding, not the model, to be the protagonist.

Morphex merges roughly forty pull requests per week into a monolith with fifteen years of history. Each PR travels through a nine-step migration pipeline (dependency analysis, target determination, AST parsing, AI analysis, file migration, compilation fixing, test conversion, code review, cleanup) and is gated by twenty-two validation checks before it reaches a human. One example migration tracked forty-seven consumers across eight packages; one hundred fifty tests had to pass before the merge.
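The shape of that pipeline, with ordered steps followed by a wall of checks, can be sketched as below. The step names come from the post. The two checks are stand-ins, since the post does not enumerate the twenty-two real ones, and `run_migration`, `EXAMPLE_CHECKS`, and the dictionary keys are hypothetical names chosen for illustration.

```python
from typing import Callable, Dict, List, Tuple

# Step names are taken from the article; everything else here is hypothetical.
PIPELINE_STEPS: List[str] = [
    "dependency analysis", "target determination", "AST parsing",
    "AI analysis", "file migration", "compilation fixing",
    "test conversion", "code review", "cleanup",
]

Step = Callable[[dict], dict]
Check = Callable[[dict], bool]

# Two stand-in checks; the real twenty-two are not listed in the post.
EXAMPLE_CHECKS: Dict[str, Check] = {
    "all tests pass": lambda pr: pr["tests_passed"] == pr["tests_total"],
    "all consumers updated": lambda pr: pr["consumers_updated"] == pr["consumers_total"],
}


def run_migration(pr: dict, steps: Dict[str, Step],
                  checks: Dict[str, Check]) -> Tuple[bool, List[str]]:
    """Run every step in order, then report which checks (if any) failed."""
    for name in PIPELINE_STEPS:
        pr = steps[name](pr)
    failed = [name for name, check in checks.items() if not check(pr)]
    return (len(failed) == 0, failed)


if __name__ == "__main__":
    noop_steps = {name: (lambda pr: pr) for name in PIPELINE_STEPS}
    pr = {"tests_passed": 150, "tests_total": 150,
          "consumers_updated": 46, "consumers_total": 47}
    print(run_migration(pr, noop_steps, EXAMPLE_CHECKS))
    # (False, ['all consumers updated'])
```

Returning the list of failed checks rather than a bare boolean keeps the refusal explainable: a human who picks up the PR sees why it stopped.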

The interesting artifacts are not the throughput numbers. They are the refusal primitives:

- A freeze-merge system that blocks every autonomous merge during organization-wide deployment freezes.

- Developer-of-the-Week accountability. When Morphex merges, a named human receives the Slack notification and owns post-merge verification.

- PagerDuty integration. The on-call engineer is added as a PR assignee before merge.

- Sensitive-code rules. Payment, billing, and team-definition code require explicit approval from every codeowner team plus at least two human reviewers.

- GitHub mergeableState gating. Morphex’s own quote: “When in doubt, I do not merge.”
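Taken together, the refusal primitives above amount to a merge gate that defaults to "no". The following is a minimal sketch under stated assumptions: the `MergeRequest` fields, the path prefixes, and the rule that only a `"clean"` state is mergeable are all hypothetical choices for illustration, not monday.com's code or GitHub's full state model.

```python
from dataclasses import dataclass
from typing import List, Optional, Set

# Hypothetical path prefixes standing in for payment, billing, and team-definition code.
SENSITIVE_PREFIXES = ("payments/", "billing/", "team-definitions/")


@dataclass
class MergeRequest:
    changed_paths: List[str]
    mergeable_state: str              # assumed values, e.g. "clean", "blocked", "unknown"
    codeowner_teams: Set[str]         # teams that own the changed code
    approving_teams: Set[str]         # teams that have approved so far
    human_approvals: int
    accountable_human: Optional[str]  # the named post-merge owner
    on_call_assigned: bool


def refusal_reasons(req: MergeRequest, freeze_active: bool) -> List[str]:
    """Collect every reason to refuse; an empty list is the only 'yes'."""
    reasons = []
    if freeze_active:
        reasons.append("deployment freeze active")
    if req.mergeable_state != "clean":
        reasons.append(f"mergeable state is {req.mergeable_state!r}")
    if req.accountable_human is None:
        reasons.append("no accountable human named")
    if not req.on_call_assigned:
        reasons.append("on-call engineer not assigned")
    if any(p.startswith(SENSITIVE_PREFIXES) for p in req.changed_paths):
        if not req.codeowner_teams <= req.approving_teams:
            reasons.append("missing codeowner team approval")
        if req.human_approvals < 2:
            reasons.append("fewer than two human reviewers")
    return reasons


def may_merge(req: MergeRequest, freeze_active: bool) -> bool:
    return not refusal_reasons(req, freeze_active)
```

Any state the gate does not recognize, including `"unknown"`, lands on the refusal side, which is one way to encode "when in doubt, I do not merge."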

The line that stops most readers is this one: “Speed without accountability is worse than no speed at all.” That sentence belongs in the same family as Davis’s “Generation has become cheap, but validation has not.” Different companies, different problems, same insight.

Morphex runs on the Claude Code SDK. The vendor matters less than the pattern. What monday.com has built is what we described in Hallucination Is a Systemic Property, Not a Bug: the guardrail layer is the governance layer. The agent is interchangeable. The refusal logic is not.

Why the convergence holds

Three publications in one day is not a zeitgeist we should take at face value. Engineering blogs cluster for editorial reasons. Conference seasons produce content cohorts. Confirmation bias is a real force.

What keeps the convergence honest is that Davis, Meta, and Morphex do not share an argumentative frame. Davis is philosophical. Meta is quantitative. Morphex is operational. They reach the same conclusion from three starting points:

- Generation has outrun validation at current staffing and review cadence.

- Human review alone cannot close the gap at agent output volumes.

- The closure mechanism is organizational, not model-level: encoded skills, layered checks, explicit accountability routing, hard gates for sensitive paths.

Independent arrival at the same conclusion is the strongest form of evidence we have short of controlled studies. It does not prove the thesis. It makes the thesis more probable.

The supporting data reinforces the direction. DORA 2025 reports AI adoption at 90%, pull request size up 51.3%, and incidents per PR up 242.7%. Liu et al. studied 304,362 AI-authored commits across 6,275 repositories and found that 24.2% of AI-introduced issues survive to the latest revision. Addy Osmani, writing in March, cited an Anthropic study in which AI-assisted developers scored seventeen points lower on comprehension quizzes. The verification tax is not narrative anymore. It has receipts.

What the pattern looks like from the buyer side

We have argued in The Verification Stack Is Consolidating and Codifying Institutional Intelligence that the competitive frontier for enterprise AI is no longer the model. It is the scaffolding around the model: encoded skills, accountability routing, detection substrate, refusal logic. The Harness Difference names why this is the decisive layer.

This week made that frontier legible in shipped systems. The question enterprise leaders should be asking their teams right now is not “which model are we using?” It is this:

When our agents generate output faster than we can review it, what refuses on our behalf, and who is named as accountable when the refusal fails?

If the answer is “our developers will catch it in review,” the answer is already outdated. Meta and monday.com have shown what scaffolding looks like when it is load-bearing. Davis has given the shift a name that makes the choice visible.

The organizations that install that scaffolding now are not paying friction cost. They are building leverage for the capability jump that arrives whether we are ready or not.

This analysis synthesizes Probabilistic Engineering and the 24-7 Employee by Tim Davis (April 2026), Capacity Efficiency at Meta: How Unified AI Agents Optimize Performance at Hyperscale by Tommy Tran and Michael Zetune (April 2026), and I am Morphex. I’m an AI Agent Growing Up Inside a Real Codebase by the Morphex team at monday.com (April 2026), with supporting data from the DORA 2025 State of AI-Assisted Software Development report, Liu et al.’s arXiv preprint on AI-generated code debt (March 2026), and Addy Osmani’s Comprehension Debt (March 2026).

Victorino Group helps enterprise teams design the validation layer probabilistic engineering requires. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
