- Home

- The Thinking Wire

- Orchestration Is the New Governance Layer

A single number hides the whole argument.

On a Slack clone built end-to-end by Factory.ai’s new Missions system — six milestones, 16.5 hours of wall time, 38,800 lines of code, 52.5% test coverage — 34.4% of the final implementation came from fixes surfaced by validator agents. Eighty-one issues became twenty-one fix features. A third of the product existed because a different agent, with a different job, was allowed to disagree with the one that wrote the code.

That is not a productivity statistic. It is a governance statistic.

Four independent pieces published this month — Factory’s Missions architecture, Anthropic’s coordination patterns doc, the leaked Claude Code “Coordinator Mode,” and the “Thin Harness, Fat Skills” essay — converge on a shift that most teams have not yet named: governance is moving from prompt engineering to architecture. The orchestrator-subagent pattern is becoming the unit of AI governance because it structurally enforces the one rule every other layer has been trying to enforce with discipline and checklists: no agent evaluates its own work.

The Context Decay Problem

Theo Luan’s write-up of how Missions work opens with a sentence that should hang on every AI team’s wall:

When the context window accumulates information irrelevant to — or actively working against — the current goal, performance suffers.

Every practitioner who has watched a long session rot into confusion recognizes the shape of this failure. The model is not dumb. The context is poisoned. Old tool errors, abandoned plans, stale file contents and retrospective rationalizations all share the same window, and the model cannot tell which of them still matters. Over enough turns, the most recent tokens crowd out the original goal, and the agent starts optimizing for the argument it is currently having rather than the job it was given.

For a long time the industry treated this as a prompt engineering problem. Better system prompts. Longer context. Smarter compaction. Memory. Retrieval.

The April 2026 signals are saying something quieter and more structural: the problem is not the window. The problem is that one agent is doing too many jobs inside one window. You cannot write a prompt that makes a single agent a rigorous planner, a patient worker, and a skeptical reviewer at the same time, because those roles have incompatible incentives. The planner wants optionality. The worker wants to finish. The reviewer wants to find what the worker missed. Put them in one head and the worker wins, because the worker is the one with the cursor.

Separation of concerns stops being a style preference and becomes the only way to keep context decay from eating the outcome.

Factory’s Numbers, Read as Governance

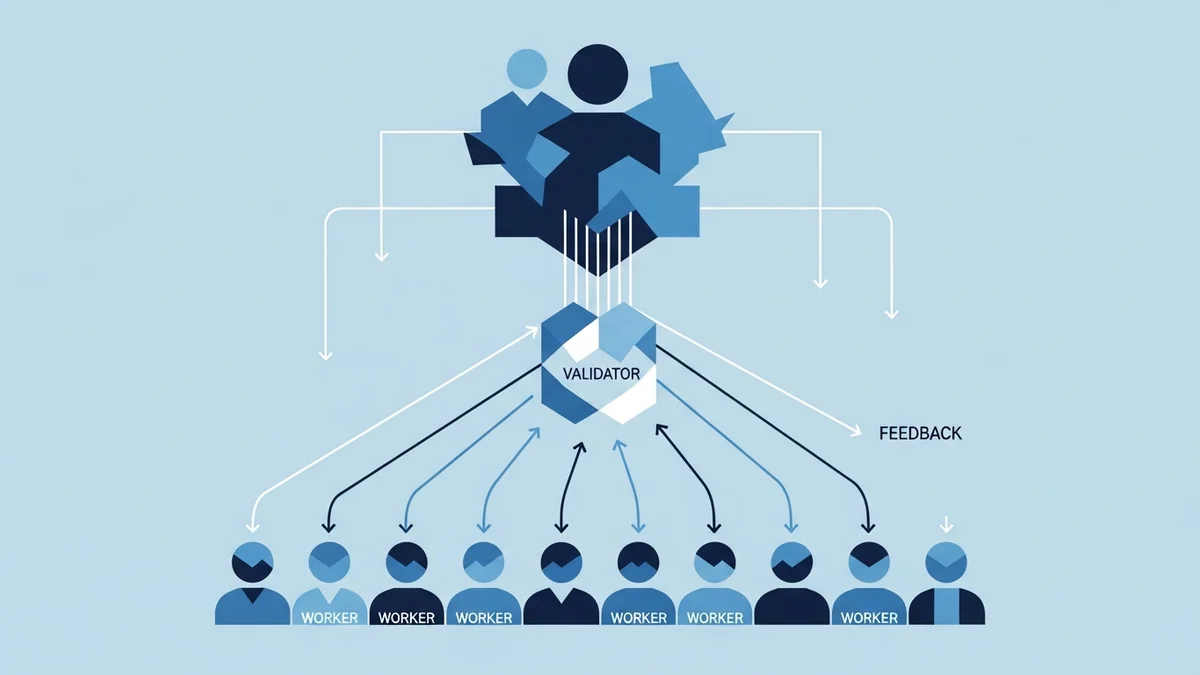

Missions, as Factory describes it, is an orchestrator that decomposes a goal into milestones and delegates to two kinds of subagents: workers that execute implementation tasks, and validators that independently evaluate the result. The orchestrator holds the mission plan and the shared state. The subagents hold only what they need for their slice.

Against the Slack clone benchmark, the shape of the run is what matters:

- 90 agent runs total: 1 orchestrator, 63 workers, 27 validators.

- 778.5M total tokens consumed, of which 744.9M were cached reads — nearly 96%. Shared state is what makes fanning out economically possible.

- 81 issues surfaced by validators, consolidated into 21 fix features, accounting for that 34.4% of final implementation.

Read those numbers as an org chart and they describe something familiar: a small planning function, a larger execution function, and a dedicated review function whose only job is to disagree. No worker is asked to grade its own commit. No validator is asked to ship.

The token economics matter more than they first appear. The reason 96% of tokens are cached is that the orchestrator is not re-explaining the mission to each subagent from scratch; it is passing a shared, stable context that every worker and validator reads without paying for it again. Shared state plus role separation is what makes this cheap. Without the shared state, the fan-out costs explode. Without the role separation, the fan-out does not buy you anything a single longer session could not already do.

The governance reading: Factory did not build a faster coder. It built a structure in which a third of the product gets written because someone was structurally empowered to reject the first draft.

Anthropic Names the Patterns

A week later, Anthropic published a “Multi-Agent Coordination Patterns” piece that names five architectures engineering teams are now choosing between:

- Generator-Verifier — one agent proposes, a second checks.

- Orchestrator-Subagent — a lead decomposes and delegates to specialized subagents.

- Agent Teams — multiple peers collaborate on a shared goal.

- Message Bus — agents communicate through a shared, ordered channel.

- Shared State — agents read and write against a common workspace.

The piece’s recommended starting point is Orchestrator-Subagent. Not because it is the most sophisticated — it is not — but because it is the pattern that forces the separation most teams are missing. One agent plans. Others execute. A distinct path handles verification. The orchestrator keeps the goal; the subagents keep their slice; nobody sees a window big enough to confuse the two.

Reading the five patterns side by side, the governance logic becomes visible. Generator-Verifier is the minimum viable version of “no agent evaluates its own work.” Orchestrator-Subagent is that principle plus context discipline. Agent Teams, Message Bus, and Shared State are what you reach for when the coordination surface outgrows a single supervisor. But all five share the same architectural commitment: the unit of trust is no longer the agent. It is the pattern.

This is the shift the “Thin Harness, Fat Skills” essay makes explicit from the other direction. If the harness stays thin — the loop, the tool interface, the scheduler — and the skills get fat — the role-specific capabilities, guardrails, and evaluation logic — then governance lives in how skills are composed, not in how the harness is tuned. The harness coordinates. The skills specialize. The separation is the point.

The IDE Absorbs It

The fourth signal is the most consumer-visible. Testing Catalog reported in early April that Anthropic is testing a Coordinator Mode for Claude Code, a structured visual interface for directing parallel sub-agents. The framing in the report is competitive — “to rival Codex superapp” — but the architectural meaning is bigger than that.

Coordinator Mode takes a pattern that used to require homegrown orchestration glue and makes it a surface in the tool itself. Developers get a view into parallel subtasks. They can see which branch is planning, which is implementing, which is reviewing. The orchestrator stops being an implementation detail and starts being a first-class UI object. That is how patterns move from “thing experts build” to “thing everyone uses”: the IDE absorbs them.

Combined with VS Code’s earlier move toward parallel subagent contexts, the direction is clear. The editor is no longer a text surface with AI features bolted on. It is becoming a coordination surface, and coordination surfaces are where governance lives.

The One-Sentence Pitch

We have written before about harnesses as the structural layer of agent work, about multi-agent architecture as a rediscovery of Erlang-era isolation, about the difference a good harness makes, and about agent teams as a new operating model. Those pieces framed the what and the how. This week’s signals let us frame the why in one sentence:

None of them evaluates its own work.

That is the governance pitch for the orchestrator-subagent pattern, and it is what separates it from every “let’s chain some prompts” experiment that preceded it. The planner does not grade the plan. The worker does not grade the commit. The validator does not ship. Each role is given just enough context to do its job and just enough authority to reject the previous step. The orchestrator holds the goal. Context decay loses its favorite attack surface — the single overloaded window — because there is no single overloaded window left.

The practical consequence for teams building with agents right now is that pattern selection is no longer a style choice. It is a governance decision. Choosing Generator-Verifier over a single bigger prompt is a governance decision. Choosing Orchestrator-Subagent over Generator-Verifier is a governance decision. Adopting shared state, or not, is a governance decision. Where the separation lines fall determines what the system is structurally incapable of getting wrong, which is the only kind of guarantee worth having in a non-deterministic stack.

Thirty-four percent of a product written by agents that were allowed to disagree with each other is not a story about clever orchestration. It is the new baseline. Teams still running one agent, one window, one context, and calling it “an AI developer” are not behind on tooling. They are behind on governance.

The four April signals — Factory’s Missions numbers, Anthropic’s patterns doc, Coordinator Mode in Claude Code, and the thin-harness/fat-skills framing — do not describe a new capability. They describe a new shape for the same capability, and that shape is the one Erlang engineers, telecom operators, and anyone who has ever run a code review already understood: separate the jobs, limit the context, and never let the worker grade itself. The governance layer is the architecture. The architecture is the orchestrator-subagent pattern. And the pattern is no longer optional.

Sources: Theo Luan, “How Missions Work,” Factory.ai, April 2026 (https://factory.ai/news/missions-architecture); Anthropic, “Multi-Agent Coordination Patterns,” April 2026 (https://claude.com/blog/multi-agent-coordination-patterns); Testing Catalog, “Anthropic Tests Claude Code Upgrade to Rival Codex Superapp,” April 2026 (https://www.testingcatalog.com/anthropic-tests-claude-code-upgrade-to-rival-codex-superapp/); “Thin Harness, Fat Skills,” TLDR Main, April 2026.

Victorino Group helps teams design agent architectures where governance is structural, not bolted on. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com . About The Thinking Wire →

If this resonates, let's talk

We help companies implement AI without losing control.

Schedule a Conversation