The Intent Layer: Why the Best AI Governance Is the Kind Nobody Notices

A developer types: “I need a staging environment with a Postgres database and Redis cache.” No Terraform. No YAML. No pull request against an infrastructure repo. The platform provisions exactly what was requested, applies the organization’s security policies, tags the resources for cost allocation, and produces a full audit trail.

The developer never saw the governance. That is the point.

Spacelift Intent, released in early 2026, lets developers provision infrastructure in natural language while platform teams enforce policies behind the scenes. It is a useful product. It is also an instance of something larger: an architectural pattern where governance becomes invisible to the people it governs.

This pattern is showing up everywhere. And it changes what “governance” means in practice.

The Pattern: Governance as Medium, Not Message

Three independent systems, built by three different organizations for three different problems, converge on the same design.

Spacelift Intent translates natural language into infrastructure. Platform teams define policies, cost constraints, and security requirements. Developers describe what they need. The system reconciles the two. As Logan Stuart, Director of Engineering at Cityblock Health, put it: “Most software engineers know a lot about programming languages and very little about the cloud.” Intent closes that knowledge gap without requiring developers to learn cloud architecture. The governance happens in the translation layer.

Google Gemini API Skills enforce specification compliance during code generation. A skill is a structured set of rules (coding standards, API conventions, architectural constraints) that the model follows during generation. Google reported a 96.3% pass rate against specifications. The developer asks for code. The skill enforces the organization’s standards. The developer never writes a linting rule or reads a style guide. Governance is baked into the generation step.

Claude Code harnesses (CLAUDE.md files) inject organizational constraints at the prompt level. A harness can enforce git workflow rules, testing requirements, file structure conventions, and security boundaries. The developer interacts with an AI coding assistant. The harness ensures every interaction respects organizational policy. No separate compliance step. No manual review for basic rule adherence. The governance lives in the configuration.

Same pattern. Three implementations. The governed party (developer, user, operator) never interacts with the governance directly. They interact with a tool that has governance embedded in its operation.

Why Invisible Governance Works Better

Visible governance creates friction. Friction creates workarounds. Workarounds create shadow systems. Shadow systems create the exact risks governance was supposed to prevent.

This is not theoretical. Every organization with a centralized infrastructure team has watched developers spin up untagged cloud resources because the official provisioning process took three days. Every organization with a security review board has watched teams ship features without review because the review cycle did not match the release cycle.

The failure mode of visible governance is predictable: compliance teams enforce rules, delivery teams route around them, and the organization ends up with two systems. The official one (slow, compliant, used for audits) and the real one (fast, ungoverned, used for production).

Invisible governance eliminates this failure mode by removing the choice. When governance is the medium through which work happens, and that medium is the only route to the resource, there is no path that bypasses it. A developer using Spacelift Intent cannot provision non-compliant infrastructure because the translation layer enforces compliance. A developer using a properly configured Claude Code harness cannot skip testing because the harness requires it before any commit.

Joe Hutchinson, Platform Lead at Vega, described Intent as “best suited for experimentation.” That observation matters. Experimentation is precisely where governance breaks down in traditional models. Developers experiment in sandboxes with no policy enforcement, then promote that code to production. The governance-free zone becomes the source of production incidents.

When experimentation itself is governed invisibly, the entire lifecycle is covered. Not because someone wrote a policy about sandbox governance. Because the tool does not have an ungoverned mode.

The Spec Layer Is the Governance Layer

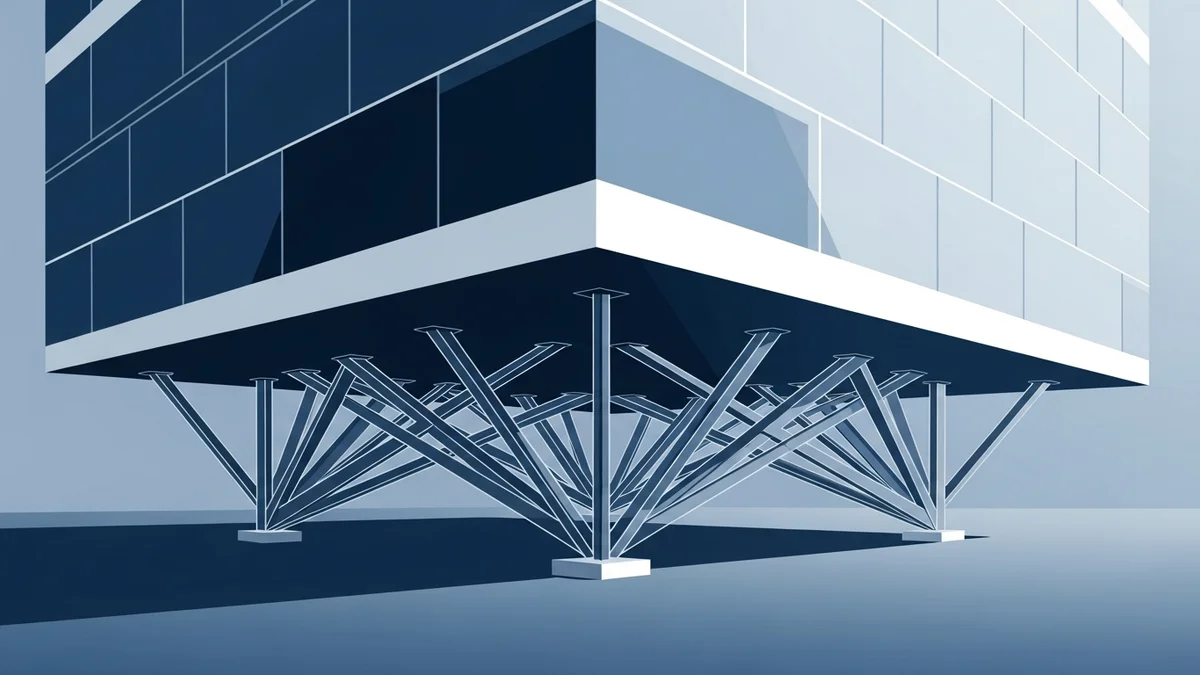

What Spacelift, Google, and Anthropic have built are different implementations of the same abstraction: a spec layer that sits between human intent and system execution.

The spec layer translates. It takes what the human wants (stated in natural language, in a skill definition, in a harness file) and produces what the system needs (Terraform plans, compliant code, governed AI interactions). The governance lives in the translation rules, not in a separate enforcement mechanism.
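
A minimal Python sketch of that idea, under stated assumptions. The `Intent` and `PlannedResource` types, the `ORG_DEFAULTS` and allow-list rules, and the `translate` function are all hypothetical names invented for illustration, and the natural-language parsing is assumed to have already happened upstream. This does not describe how Spacelift Intent, Gemini API Skills, or Claude Code work internally. It only shows rules being applied inside the translation step rather than in a separate check.

```python
from dataclasses import dataclass

# Hypothetical organizational rules; real policies would be richer.
ORG_DEFAULTS = {"encrypted": True, "public_access": False}
ALLOWED_KINDS = {"postgres", "redis"}
ALLOWED_ENVIRONMENTS = {"staging", "sandbox"}


@dataclass(frozen=True)
class Intent:
    """What the developer asked for, already parsed from natural language."""
    requester: str
    environment: str
    kinds: tuple


@dataclass(frozen=True)
class PlannedResource:
    """One resource the system will create, with governed settings and tags."""
    kind: str
    settings: dict
    tags: dict


def translate(intent: Intent) -> list:
    """Turn an intent into planned resources, applying rules along the way."""
    if intent.environment not in ALLOWED_ENVIRONMENTS:
        raise ValueError(f"environment not allowed: {intent.environment}")
    plan = []
    for kind in intent.kinds:
        if kind not in ALLOWED_KINDS:
            raise ValueError(f"resource kind not allowed: {kind}")
        plan.append(PlannedResource(
            kind=kind,
            settings=dict(ORG_DEFAULTS),  # security defaults are not optional
            tags={"owner": intent.requester, "env": intent.environment},
        ))
    return plan
```

Because `translate` is the only function that produces a plan in this sketch, the developer never sets `encrypted` or writes a tag. The rules arrive with the result.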

As we explored in Configuration-Dependent Safety, AI system behavior varies dramatically based on configuration. A model that behaves safely in one configuration can behave unsafely in another. The spec layer is the answer to that finding. Instead of testing every possible configuration (impossible), you make the spec layer the only path to configuration. The surface area shrinks from infinite to manageable.

This is also the natural extension of what we documented in Agent Specs Are Governance Artifacts. That essay argued specs belong in compliance infrastructure alongside IAM policies. The intent layer takes the argument further: specs should not just govern agents. They should be the interface through which all system interaction occurs.

The Audit Trail Problem Solves Itself

Traditional governance creates audit trails through logging, monitoring, and periodic review. Someone provisions infrastructure. A separate system logs the event. A different team reviews the logs quarterly.

In an intent-layer architecture, the audit trail is a byproduct of normal operation. Every Spacelift Intent request is a natural language statement that produced a specific infrastructure outcome. The request, the translation, the policy evaluation, and the result are all captured as a single event chain. You do not need a separate audit system because the provisioning system is the audit system.
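
The event chain can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `handle_request` is a hypothetical function, the `translate`, `evaluate`, and `apply` callables stand in for whatever the platform actually does, and `log` is any list-like sink. The point is only that the record is written by the same code path that does the work.

```python
import json
from datetime import datetime, timezone


def handle_request(request_text, translate, evaluate, apply, log):
    """Run one request and append a single record covering the whole chain.

    translate, evaluate, and apply are hypothetical callables supplied by
    the platform; log is any object with an append method.
    """
    plan = translate(request_text)
    violations = evaluate(plan)
    # Only apply the plan when the policy evaluation found nothing.
    result = apply(plan) if not violations else "rejected"
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "request": request_text,
        "plan": plan,
        "violations": violations,
        "result": result,
    }
    log.append(json.dumps(record, sort_keys=True))
    return result
```

There is no code path in this sketch that provisions without logging, which is the property the paragraph above describes.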

The same holds for harness engineering. Every CLAUDE.md file is version-controlled. Every interaction governed by that harness produces output shaped by its constraints. The diff between harness versions shows exactly what governance changed and when. Git history becomes the compliance audit trail.
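
As a rough illustration, the comparison between two harness versions can be reduced to "which constraints were added, which were removed." In practice the two versions would come from Git history; the sketch below takes them as plain strings so it stays self-contained. The function name `harness_changes` and the sample rules in the usage note are hypothetical.

```python
import difflib


def harness_changes(old_text: str, new_text: str) -> dict:
    """Return the lines added and removed between two harness versions."""
    added, removed = [], []
    # ndiff prefixes each line with "+ ", "- ", "  ", or "? " (hint lines).
    for line in difflib.ndiff(old_text.splitlines(), new_text.splitlines()):
        if line.startswith("+ "):
            added.append(line[2:])
        elif line.startswith("- "):
            removed.append(line[2:])
    return {"added": added, "removed": removed}
```

Given an old version containing `- Never push to main` and a new version that replaces it with `- Never commit secrets`, the result lists the first under `removed` and the second under `added`. A removed line is the one a reviewer should look at first, since it may be a control that quietly disappeared.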

This matters for regulated industries. SOC 2, ISO 27001, and sector-specific frameworks all require evidence of control execution. An intent-layer architecture produces that evidence as a natural consequence of operation, not as a separate compliance activity. The marginal cost of audit readiness drops toward zero.

Where This Pattern Breaks

Invisible governance has a failure mode worth naming: invisible failures.

When governance is visible, its absence is also visible. A developer who skips a security review knows they skipped it. A manager who asks about compliance gets a clear answer: the review happened or it did not.

When governance is invisible, its absence is invisible too. If the Spacelift Intent policy engine has a misconfiguration, developers continue provisioning infrastructure that feels governed but is not. If a CLAUDE.md harness has a missing constraint, the AI assistant continues producing output that looks governed but lacks a specific control.

The mitigation is testing the governance layer itself. Policy-as-code testing. Harness validation. Spec coverage analysis. These are new disciplines that most organizations have not built yet. The organizations adopting invisible governance without investing in governance testing are trading one risk (developers routing around visible governance) for another (silent governance failures).
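
A minimal sketch of what two of those checks might look like, assuming a harness stored as text and a policy exposed as a callable that returns a list of violations. The names `REQUIRED_CONSTRAINTS`, `missing_constraints`, and `policy_gaps` are hypothetical, and the phrase matching is a deliberately crude stand-in for real harness validation.

```python
# Hypothetical constraints every harness is expected to state.
REQUIRED_CONSTRAINTS = {
    "testing": "run the test suite before every commit",
    "secrets": "never commit secrets",
}


def missing_constraints(harness_text: str) -> list:
    """Return the names of required constraints absent from the harness."""
    lowered = harness_text.lower()
    return sorted(name for name, phrase in REQUIRED_CONSTRAINTS.items()
                  if phrase not in lowered)


def policy_gaps(policy, known_bad_cases: dict) -> list:
    """Return the names of known-bad cases the policy failed to reject.

    policy is a callable returning a list of violations. An empty list
    for a known-bad case means a control has silently stopped working.
    """
    return sorted(name for name, case in known_bad_cases.items()
                  if not policy(case))
```

The second function is the more important one. It feeds the policy inputs that must be rejected and reports any that pass, which turns a silent governance failure into a failing check.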

The second limitation is scope. Invisible governance works for repeatable, well-understood processes. Infrastructure provisioning. Code generation. Standard deployments. It works less well for novel situations where the governance rules have not been written yet because no one anticipated the scenario.

A platform team can encode policies for standard infrastructure patterns. They cannot encode policies for infrastructure patterns that do not exist yet. The intent layer handles the common case, call it 90%, well. The remaining 10% still needs human judgment, visible process, and explicit review.

The Organizational Shift

Adopting invisible governance changes who does what.

Platform teams become policy authors, not ticket processors. Instead of reviewing infrastructure requests, they write the rules that evaluate infrastructure requests. Their output shifts from approvals to specifications. This is higher-leverage work, but it requires a different skill set. Writing a Terraform module is different from writing a policy that evaluates arbitrary Terraform plans.
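
To make the difference concrete, here is a hedged sketch of a policy that evaluates a plan rather than producing one. It assumes the plan has been exported with `terraform show -json` and loaded into a dictionary, and it reads the `resource_changes` entries from that output. The `REQUIRED_TAGS` rule and the `untagged_resources` function are hypothetical. A real policy would also need to handle resource types that do not support tags and values that are unknown until apply.

```python
REQUIRED_TAGS = {"owner", "cost-center"}  # hypothetical organizational rule


def untagged_resources(plan: dict) -> list:
    """Return addresses of resources created or updated without required tags."""
    violations = []
    for rc in plan.get("resource_changes", []):
        change = rc.get("change", {})
        if not {"create", "update"} & set(change.get("actions", [])):
            continue  # ignore deletes and no-ops
        after = change.get("after") or {}
        tags = after.get("tags") or {}
        if not REQUIRED_TAGS <= set(tags):
            violations.append(rc["address"])
    return violations
```

The policy author never sees the module that produced the plan. The rule has to hold for any plan a developer, or a translation layer, might generate.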

Developers become consumers of governed services, not compliance participants. They stop thinking about governance entirely. This is the goal, and it carries a risk: developers who never think about governance lose the ability to reason about it. When they encounter the 10% of cases that require explicit governance reasoning, they may lack the judgment to recognize it.

Security and compliance teams become spec reviewers, not process enforcers. Their job shifts from checking whether governance happened to verifying that the governance layer is correctly specified. Auditing a policy engine is different from auditing individual decisions. It is more efficient but requires deeper technical understanding.

What This Means for Your Organization

If you are building or buying AI-assisted developer tools, ask one question: does the tool embed governance, or does it require governance to be applied separately?

Tools that embed governance will be adopted. Tools that require separate governance steps will be worked around. This is not a prediction about human nature. It is an observation about what has already happened, repeatedly, across every organization that has tried to bolt governance onto fast-moving engineering workflows.

The Spacelift team summarized it well: “AI is most effective when it helps translate intent into action while respecting the rules platform teams already rely on.” Replace “AI” with “tooling” and “platform teams” with “governance teams” and you have the general principle.

Governance that enables is adopted. Governance that blocks is circumvented. The intent layer is the architectural pattern that makes enabling governance possible.

Build the spec layer. Embed the rules. Make governance invisible.

Then test the spec layer ruthlessly, because invisible governance that fails invisibly is worse than no governance at all.

This analysis synthesizes material on Spacelift Intent from Spacelift (March 2026), Gemini API Skills from Google (April 2026), and Anthropic’s harness engineering principles (2026).

Victorino Group designs governance that enables velocity rather than blocking it.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
