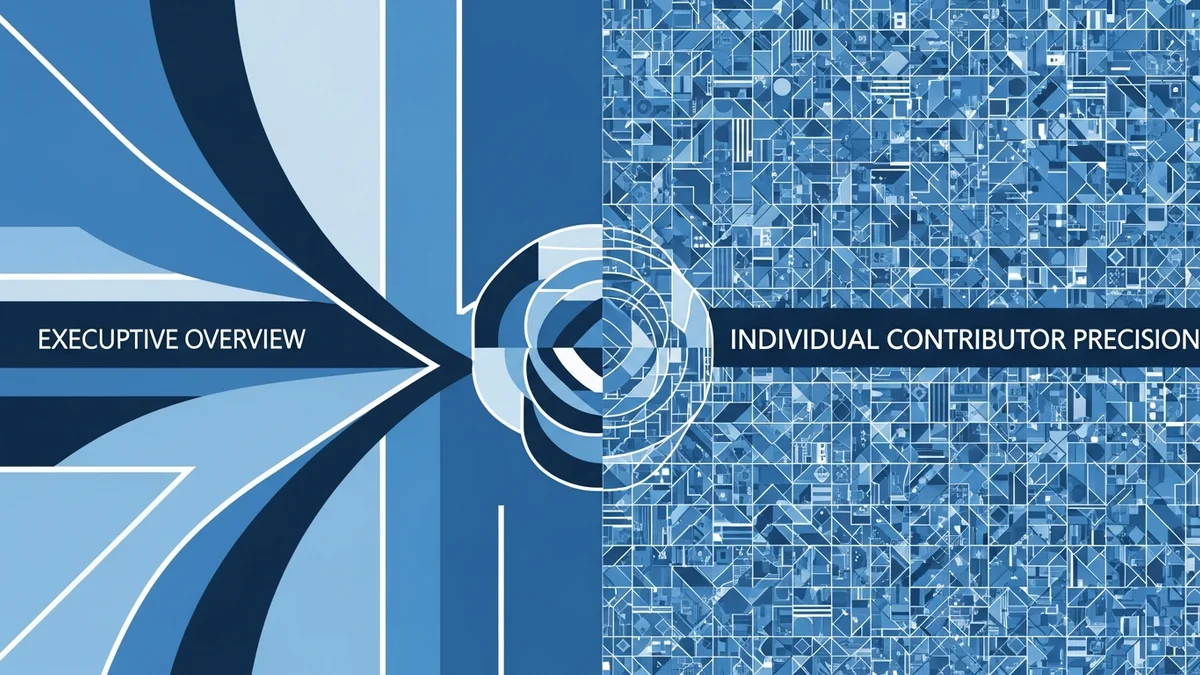

The Determinism Divide: Why Executives and ICs Need Different AI Governance

Software engineer John Wang recently asked a question that most AI governance conversations skip entirely: why do executives love AI while individual contributors resist it?

The typical answers are unsatisfying. “Executives are optimists.” “ICs fear job loss.” “Leadership is out of touch.” These explanations feel true without being useful. They describe symptoms. Wang identifies the structural cause.

Executives and ICs operate in fundamentally different determinism paradigms. That difference explains the adoption friction, the governance failures, and the path forward.

Executives Already Manage Non-Deterministic Systems

A manager’s core job is coordinating humans. Humans are unreliable, inconsistent, emotional, and capable of surprising brilliance. Every executive who has managed a team of ten or more people has learned to work with probabilistic outcomes. You give clear direction. You hope for 70% alignment. You correct course constantly.

Wang puts it precisely: “A manager’s job is to create a model of the world and align everyone’s utility functions.” That model is never complete. The alignment is never perfect. The job is to operate productively in that uncertainty.

AI fits naturally into this worldview. An LLM that produces good output 80% of the time is more predictable than a new hire in their first quarter. An AI that occasionally hallucinates is less disruptive than a team member who silently misunderstands the brief and delivers something wrong two weeks later. For executives, AI has more predictable failure modes than the systems they already manage.

This is why mandates from the top feel obvious to the people issuing them. From the executive’s perspective, AI is a higher-reliability version of what they already coordinate.

ICs Operate in a Different Universe

Individual contributors, particularly in technical roles, are evaluated on precision. A software engineer’s code either compiles or it doesn’t. A financial analyst’s model either reconciles or it doesn’t. A designer’s layout either meets the spec or it doesn’t.

In this context, AI’s error rate is not a tolerable imprecision. It is a professional liability. Wang captures the core tension: “The overhead of fixing [AI’s] mistakes can genuinely cost more than doing it yourself.”

This is not resistance to change. It is rational cost-benefit analysis by people whose careers depend on getting details right. When your performance review measures the correctness of your output, a tool that is correct 85% of the time does not save you 85% of the work. You cannot know in advance which 15% is wrong, so you have to check all of it, and that verification burden sits on top of the original work.
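The arithmetic behind that claim can be sketched in a few lines. This is a toy cost model, not something taken from Wang's post: the function names, the parameters, and every number below are illustrative assumptions.

```python
def expected_minutes_with_ai(accuracy: float, review_minutes: float, fix_minutes: float) -> float:
    """Expected time per task when every AI output has to be checked.

    Hypothetical model: the parameters and the formula are assumptions for illustration.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    # Review is paid on every output, because wrong outputs are not labeled in advance.
    # The fix cost is paid only on the share of outputs that turn out to be wrong.
    return review_minutes + (1.0 - accuracy) * fix_minutes


def minutes_saved(manual_minutes: float, accuracy: float,
                  review_minutes: float, fix_minutes: float) -> float:
    """Positive: AI saves time. Negative: AI costs more than doing the task by hand."""
    return manual_minutes - expected_minutes_with_ai(accuracy, review_minutes, fix_minutes)


# Hypothetical task: 60 minutes by hand, tool is right 85% of the time.
light_review = minutes_saved(manual_minutes=60, accuracy=0.85, review_minutes=10, fix_minutes=30)
heavy_review = minutes_saved(manual_minutes=60, accuracy=0.85, review_minutes=50, fix_minutes=90)

print(f"light review: {light_review:+.1f} min")
print(f"heavy review: {heavy_review:+.1f} min")
```

With the same 85% accuracy, the sign of the result depends entirely on how expensive review and repair are. In the heavy-review case the tool is a net loss even though it is right most of the time, which is the situation the quote from Wang describes.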

There is also an identity dimension that governance frameworks rarely acknowledge. Mastery is central to how ICs define professional worth. A senior engineer’s value comes from decades of accumulated judgment about edge cases, architecture decisions, and tradeoffs that are invisible to anyone who hasn’t built similar systems. AI that produces “good enough” output threatens the economic value of that mastery. The fear is not about unemployment. It is about the devaluation of craft.

One Framework Cannot Serve Both Mental Models

We’ve documented the distance between individual and institutional AI productivity. Wang’s analysis explains why that distance exists: the two groups work under different assumptions about determinism. When mandates meet mental models, friction is predictable, because the mandate assumes there is a single model of what “productive AI use” looks like.

There is not. Productive AI use for an executive means portfolio-level improvements across a team. Productive AI use for an IC means output that meets their professional precision standard. These are different goals requiring different governance structures.

Precision-oriented governance for ICs should include:

- Explicit error budgets per use case. “This task tolerates a 5% error rate; that one tolerates zero.” Not every workflow gets the same AI treatment.

- Verification gates that match task criticality. Drafting a status update and writing production code require different review processes. Governance should reflect that.

- Clear quality thresholds defined before AI is introduced, not after. The IC needs to know: what does “good enough” mean for this specific task?

- Opt-in by domain. Let the people closest to the work identify where AI adds value and where it adds overhead. Blanket mandates ignore the expertise gradient.
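One way to make the four items above concrete is to write them down as data that is agreed before rollout. The sketch below is a minimal illustration under stated assumptions: the `UseCasePolicy` type, the gate names, the two example use cases, and their thresholds are all hypothetical, not a standard or anything prescribed by Wang.

```python
from dataclasses import dataclass
from enum import Enum


class Gate(Enum):
    """Hypothetical review levels, ordered from lightest to heaviest."""
    SPOT_CHECK = "spot_check"
    PEER_REVIEW = "peer_review"
    FULL_REVIEW_AND_TESTS = "full_review_and_tests"


@dataclass(frozen=True)
class UseCasePolicy:
    name: str
    max_error_rate: float  # error budget, fixed before AI is introduced
    gate: Gate             # verification gate matched to task criticality
    opted_in: bool         # decided by the people closest to the work


# Illustrative policies only; real thresholds belong to each team.
POLICIES = {
    "status_update": UseCasePolicy("status_update", 0.05, Gate.SPOT_CHECK, True),
    "production_code": UseCasePolicy("production_code", 0.0, Gate.FULL_REVIEW_AND_TESTS, True),
}


def within_budget(policy: UseCasePolicy, errors_found: int, outputs_reviewed: int) -> bool:
    """Compare the observed error rate against the agreed budget for one use case."""
    if outputs_reviewed <= 0:
        raise ValueError("need at least one reviewed output to judge the budget")
    return errors_found / outputs_reviewed <= policy.max_error_rate


print(within_budget(POLICIES["status_update"], errors_found=2, outputs_reviewed=50))
print(within_budget(POLICIES["production_code"], errors_found=1, outputs_reviewed=200))
```

The point of the structure is that "good enough" becomes a number attached to a specific task rather than a judgment made after something has already gone wrong.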

Outcome-oriented governance for executives should include:

- Portfolio risk budgets. Not every AI initiative needs to succeed. Allocate a failure budget across experiments and measure aggregate return.

- Experimentation windows with defined evaluation criteria. “We will try this for 90 days and measure these three outcomes.” Not open-ended mandates.

- Aggregate metrics that capture coordination effects, not just individual output velocity. Faster code production means nothing if integration and review become the bottleneck.

- Feedback loops that surface IC-level friction to executive-level decisions. The people using the tools daily have information the people mandating them do not.
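The portfolio view can be sketched the same way. The example below assumes each experiment has a cost and a return measured at the end of its evaluation window; the `Experiment` type, the definition of "failure" as return below cost, and all figures are hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    name: str
    cost: float
    measured_return: float  # value measured when the evaluation window closes


def portfolio_summary(experiments: list[Experiment], failure_budget: float) -> dict:
    """Judge the portfolio in aggregate instead of experiment by experiment."""
    if not experiments:
        raise ValueError("portfolio is empty")
    # Assumption: an experiment "fails" when it returns less than it cost.
    failures = [e for e in experiments if e.measured_return < e.cost]
    total_cost = sum(e.cost for e in experiments)
    total_return = sum(e.measured_return for e in experiments)
    failure_rate = len(failures) / len(experiments)
    return {
        "failure_rate": failure_rate,
        "within_failure_budget": failure_rate <= failure_budget,
        "aggregate_roi": (total_return - total_cost) / total_cost,
    }


# Four made-up 90-day experiments, each costing 100 units.
portfolio = [
    Experiment("meeting_summaries", 100, 0),
    Experiment("support_triage", 100, 50),
    Experiment("code_review_assist", 100, 150),
    Experiment("contract_search", 100, 400),
]
summary = portfolio_summary(portfolio, failure_budget=0.5)
print(summary)
```

Half of these experiments lose money, yet the portfolio as a whole returns more than it cost. That is the executive's frame; it says nothing about whether any individual IC's output met its precision standard, which is why the IC-level feedback loop is listed separately.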

The Governance Failure Mode

Most organizations today apply executive-style governance to IC-level work. They mandate adoption, measure usage, and track output volume. This works for the executive mental model. It fails for the IC mental model.

The result is what Wang describes: ICs comply without committing. They use the tools to satisfy the metric. They quietly verify and correct the output. They spend more time, not less. The organization reports high AI adoption rates while the people doing the actual work experience AI as overhead.

The inverse failure is rarer but equally destructive: organizations that defer entirely to IC resistance and never push AI into workflows where it would generate real value. Fear of imprecision becomes an excuse for inaction.

Good governance holds both realities simultaneously. Executives are right that AI improves aggregate outcomes. ICs are right that AI introduces precision costs. The framework must serve both truths.

What This Means in Practice

The determinism divide is not a problem to solve. It is a structural reality to design around. Organizations that build AI governance for one mental model will alienate the other. Organizations that pretend the divide doesn’t exist will build governance that works on paper and fails in practice.

Start by acknowledging that “AI adoption” means different things at different levels of the organization. Then build governance that is specific enough to address each level’s actual concerns. Generic AI policies are a sign that the organization hasn’t done the hard work of understanding how AI interacts with different types of work.

The organizations that get this right will not be the ones with the highest AI usage rates. They will be the ones where both executives and ICs believe the governance framework serves their actual needs.

This analysis builds on John Wang’s “Why Are Executives Enamored With AI But ICs Aren’t?” (March 2026), extending the deterministic/non-deterministic framework into concrete governance recommendations.

Victorino Group helps organizations design AI governance that works for every level of the org chart. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic’s Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
