Design-First Is the Cheapest AI Governance You Can Buy

Ask an AI coding assistant to build a notification service. Within seconds you have working code. A queue library has been selected. Error handling patterns are embedded. Retry logic is implemented. The data model is fixed.

None of these decisions were discussed. None were approved. All of them will cost real money to change later.

Rahul Garg, a principal engineer at Thoughtworks, published a pattern on Martin Fowler’s site in March 2026 that names this problem precisely: the Implementation Trap. When a developer asks an AI assistant for code, the AI collapses the entire think-design-code pipeline into a single step. Design decisions that used to happen on whiteboards, in architecture reviews, and in team discussions now happen silently inside a model’s inference pass.

The human discovers those decisions after the fact, buried in the output.

The Cost Illusion

The speed is real. Code appears in seconds. But speed creates a dangerous illusion: because the code was cheap to produce, people assume it will be cheap to change.

Boehm’s cost-of-change research (originally from the 1970s, and debated in modern iterative contexts) estimated that fixing a requirements-level mistake in production costs up to 100x what it would cost to catch during design. The exact multiplier is less important than the principle. AI compresses the production phase but does not compress the correction phase. A component boundary decided by the model in 200 milliseconds carries the same downstream correction cost as one decided by a team over two days.
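The asymmetry can be made concrete with a small sketch. All numbers here are illustrative assumptions, not measurements: the two-hour design-time fix is invented, and the multiplier of 100 is simply the upper bound quoted above. The point is only that the time spent making a decision never enters the correction cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignDecision:
    name: str
    hours_to_decide: float   # how long the decision took to make
    design_fix_hours: float  # hypothetical cost to change it while still in design


def production_fix_hours(decision: DesignDecision, multiplier: float = 100.0) -> float:
    """Cost to change the decision after it ships.

    Deliberately ignores hours_to_decide: a fast decision is not a cheap one.
    """
    return decision.design_fix_hours * multiplier


# Two working days for the team versus 200 milliseconds for the model.
by_team = DesignDecision("boundary decided by team", 16.0, 2.0)
by_model = DesignDecision("boundary decided by model", 0.2 / 3600, 2.0)

for d in (by_team, by_model):
    print(f"{d.name}: {production_fix_hours(d):.0f} hours to correct in production")
```

Both decisions print the same correction cost, even though one took about 288,000 times longer to make.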

The data supports the concern. Ox Security analyzed 300 enterprise projects in 2025 and found AI-generated code anti-patterns present in 80 to 100 percent of them. Gartner projects that 75% of technology leaders will face moderate to severe technical debt from AI-accelerated coding by 2026. The code is arriving faster. The embedded design mistakes are arriving at the same rate.

Five Levels Before Code

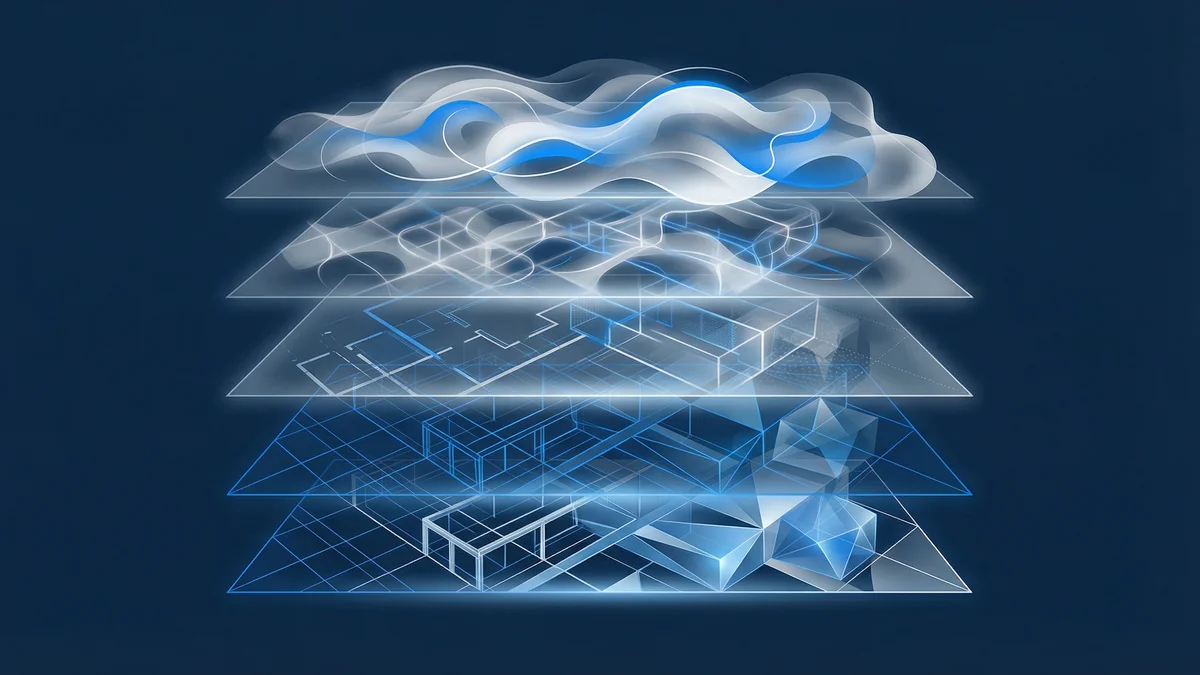

Garg’s proposed solution is a five-level framework that mirrors how experienced engineers whiteboard a problem before writing anything:

Level 1: Capabilities. What should the system do? Not how. Functional boundaries only.

Level 2: Components. What are the major pieces? How are responsibilities divided?

Level 3: Interactions. How do the components talk to each other? What flows where?

Level 4: Contracts. What are the interfaces? What does each component promise to accept and return?

Level 5: Implementation. Now write the code.

The constraint is explicit: no code until Levels 1 through 4 are approved. Each level is a gate where the human retains decision authority. The AI proposes. The human decides. Then the next level begins.

This is not a development methodology. It is a governance protocol for AI-generated design decisions. Each level forces a conversation that the AI would otherwise skip. Component boundaries get discussed before they get encoded. Data flow gets designed before it gets implemented. Error handling gets specified before a library gets chosen.
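The gating logic can be sketched as a small state tracker. This is a minimal illustration, not something from Garg's article: `DesignSession`, `GateError`, and `may_generate_code` are hypothetical names, and the sketch assumes that code generation is blocked until the four design levels have been approved in order.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Level(IntEnum):
    CAPABILITIES = 1
    COMPONENTS = 2
    INTERACTIONS = 3
    CONTRACTS = 4
    IMPLEMENTATION = 5


class GateError(Exception):
    """Raised when a level is approved out of order."""


@dataclass
class DesignSession:
    """Tracks which levels the human has approved (hypothetical helper)."""

    approved: set[Level] = field(default_factory=set)

    @property
    def current(self) -> Level:
        """Lowest level not yet approved; IMPLEMENTATION once all are approved."""
        for level in Level:
            if level not in self.approved:
                return level
        return Level.IMPLEMENTATION

    def approve(self, level: Level) -> None:
        """Record a human approval; skipping ahead is rejected."""
        if level != self.current:
            raise GateError(
                f"cannot approve {level.name}; current level is {self.current.name}"
            )
        self.approved.add(level)

    def may_generate_code(self) -> bool:
        """True only after Levels 1 through 4 have all been approved."""
        return self.current == Level.IMPLEMENTATION
```

A wrapper around an assistant could check `may_generate_code()` before forwarding any request for implementation, and call `approve()` only in response to an explicit human sign-off.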

The Comprehension Argument

As we explored in Cognitive Debt: The Invisible Cost of AI-Generated Code, developers who delegate code to AI lose understanding of their own systems. Addy Osmani’s research sharpened this point: developers who used AI primarily for code delegation scored below 40% on system comprehension tests. Those who used AI for conceptual inquiry scored 65%.

Design-first collaboration is one mechanism for staying on the right side of that divide. By working through capabilities, components, interactions, and contracts before any code exists, the developer builds a mental model of the system. The AI becomes a thinking partner in design, not a replacement for it.

The comprehension happens during the design conversation, not during the code review. By the time Level 5 produces implementation, the developer already understands why the code is structured the way it is. They made those decisions.

Where the Framework Stops

Garg’s pattern is built for a specific interaction model: one human, one AI, a chat window. The human prompts. The AI responds. The human evaluates and guides.

This is increasingly not how AI-assisted development works.

As we examined in From In-the-Loop to On-the-Loop, the industry is moving toward agentic architectures where multiple AI agents handle different phases of the development pipeline. A planner agent decomposes the problem. An architect agent designs the solution. An implementer agent writes the code. A tester agent validates it. A reviewer agent checks quality.

In this model, design-first thinking is not something a human enforces through a chat conversation. It is something the system embeds in its own pipeline. The planner-architect-implementer sequence is a structural implementation of “no code until the design is approved,” except the approval happens between agents, not between a human and a single assistant.

The five-level framework is valuable for today’s pair-programming mode. But agentic pipelines need the same principle encoded differently: as architectural constraints on the pipeline itself, not as a prompting discipline for individual sessions.
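One way to picture the constraint living in the pipeline rather than in a prompt is the sketch below. It is an assumption-laden illustration: each agent is stood in for by a plain callable, and `run_pipeline`, `Design`, and `design_gate` are hypothetical names. The only property it demonstrates is structural: the implementer cannot be reached unless the gate approves the design.

```python
from dataclasses import dataclass, replace
from typing import Callable


@dataclass(frozen=True)
class Design:
    summary: str
    approved: bool = False


def run_pipeline(
    task: str,
    planner: Callable[[str], list[str]],
    architect: Callable[[list[str]], Design],
    design_gate: Callable[[Design], bool],
    implementer: Callable[[Design], str],
) -> str:
    """Run planner, then architect, then implementer, with a gate before code."""
    subtasks = planner(task)
    design = architect(subtasks)
    # The gate may be another agent, a policy check, or a human sign-off.
    if not design_gate(design):
        raise PermissionError("design not approved; implementer was not invoked")
    # The implementer only ever receives a design marked as approved.
    return implementer(replace(design, approved=True))
```

Because the check sits in `run_pipeline` itself, no individual agent has to remember the rule, which is the difference between an architectural constraint and a prompting discipline.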

The Prompt Template Is the Real Contribution

Here is what practitioners will actually use from Garg’s article. Not the five-level framework as a formal process, but the prompt template that embeds the constraint in a single instruction:

“Do not generate code until I approve the design at each level. Start with Level 1: Capabilities.”

This single sentence changes the AI’s behavior from “produce code immediately” to “produce design artifacts for review.” It achieves most of the framework’s governance value with minimal process overhead.

For teams that cannot adopt a formal five-level process (which is most teams, most of the time), this prompt constraint is the practical takeaway. It is design governance reduced to its minimum viable form. Not a methodology. A sentence.
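For teams that script their assistant calls, the sentence can be attached to every request automatically. The sketch below assumes the common role/content chat message shape; `build_messages` is a hypothetical helper, and the call to an actual model is deliberately left out.

```python
# The constraint sentence quoted above, kept verbatim.
DESIGN_FIRST_CONSTRAINT = (
    "Do not generate code until I approve the design at each level. "
    "Start with Level 1: Capabilities."
)


def build_messages(task: str) -> list[dict[str, str]]:
    """Prepend the design-first constraint to a task description.

    Returns messages in a role/content layout (an assumption about the
    client library in use); sending them to a model is up to the caller.
    """
    return [
        {"role": "system", "content": DESIGN_FIRST_CONSTRAINT},
        {"role": "user", "content": task},
    ]


messages = build_messages("Build a notification service.")
```

Placing the constraint in the system role rather than the user message is a design choice here, made so the instruction is not lost when the task text changes.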

Three Patterns, One Stack

Garg’s design-first pattern is part of a larger series on Fowler’s site: “Patterns for Reducing Friction in AI-Assisted Development.” Two companion patterns complete the picture.

Knowledge Priming establishes what the AI should know before any work begins. Project conventions, architectural decisions, technology constraints. This is static context that prevents the AI from making decisions that violate existing standards.

Context Anchoring creates persistent records of what was decided during the session. Design choices, rejected alternatives, reasoning. This prevents context loss across sessions and creates an audit trail.

Together, the three patterns form a governance stack: Knowledge Priming (what the AI should know) + Design-First (how decisions get made) + Context Anchoring (what was decided and why).
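As a rough sketch of how the first and third layers might be represented in practice, the code below keeps priming notes as a list of strings and anchors each decision as a structured record. The field names, `render_context`, and `to_jsonl` are all hypothetical; the patterns themselves do not prescribe a format.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Decision:
    """One anchored design decision (field names are illustrative)."""

    level: str
    choice: str
    rejected: list[str] = field(default_factory=list)
    reasoning: str = ""


def render_context(priming: list[str], decisions: list[Decision]) -> str:
    """Build the text block to paste at the start of a new session."""
    lines = ["Project context:"]
    lines += [f"- {note}" for note in priming]
    lines.append("Decisions so far:")
    for d in decisions:
        rejected = ", ".join(d.rejected) or "none"
        lines.append(
            f"- [{d.level}] {d.choice} (rejected: {rejected}; why: {d.reasoning})"
        )
    return "\n".join(lines)


def to_jsonl(decisions: list[Decision]) -> str:
    """Serialize decisions one JSON object per line, usable as an audit trail."""
    return "\n".join(json.dumps(asdict(d), sort_keys=True) for d in decisions)
```

The rendered text restores context in the next session, while the JSON Lines output can be committed alongside the code so the reasoning survives after the chat window closes.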

As we argued in When the Spec Is the Product, Who Governs the Spec?, spec-driven development demands governance infrastructure around the spec itself. Garg’s three-pattern stack is one concrete implementation of that infrastructure for pair-programming workflows. The spec is the five-level design artifact. The governance is the gate between each level.

The Limitation Nobody Mentions

The article’s evidence base is thin. One example (a notification service with BullMQ), one engineer’s experience, one anecdotal pattern. The framework is sensible, but it has not been validated at organizational scale. We do not know how it performs across teams with varying experience levels, how it handles time pressure when the fifth gate feels like overhead, or whether developers abandon the discipline after the first week.

The false dichotomy is also worth noting. The article presents two options: immediate code generation or five-level gated design. Experienced practitioners achieve similar design alignment through better prompt engineering, through structured project files, through architectural constraints baked into the codebase. The five levels are not the only path to design-first collaboration.

And the METR study adds an uncomfortable footnote. Developers using AI tools perceived themselves as 20% faster while measuring 19% slower on actual task completion. If the framework adds process steps to each interaction, the perceived overhead may be lower than the actual overhead. Or the design conversations may genuinely reduce rework. We do not have the data to know.

What Matters Here

Forty-one percent of all new code is now AI-generated. Every line carries design decisions that were never discussed.

The contribution of Garg’s framework is not the five levels themselves. It is the principle they encode: design decisions should be visible, reviewable, and approved before they become code. That principle holds whether you implement it as a five-level gated process, a single prompt constraint, an agentic pipeline with architectural separation, or governance infrastructure around your spec-driven workflow.

The cheapest time to govern a design decision is before it becomes code. After that, you are paying Boehm’s tax. With AI generating code at machine speed, the window for “before” is smaller than it has ever been.

A framework that forces that window open is worth more than it costs. Even when the framework is imperfect.

This analysis synthesizes Design-First Collaboration (March 2026), Knowledge Priming (March 2026), Context Anchoring (March 2026), and the Patterns for Reducing Friction in AI-Assisted Development series (March 2026) from martinfowler.com.

Victorino Group helps organizations build governance infrastructure for AI-assisted development, from design protocols to production monitoring.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
