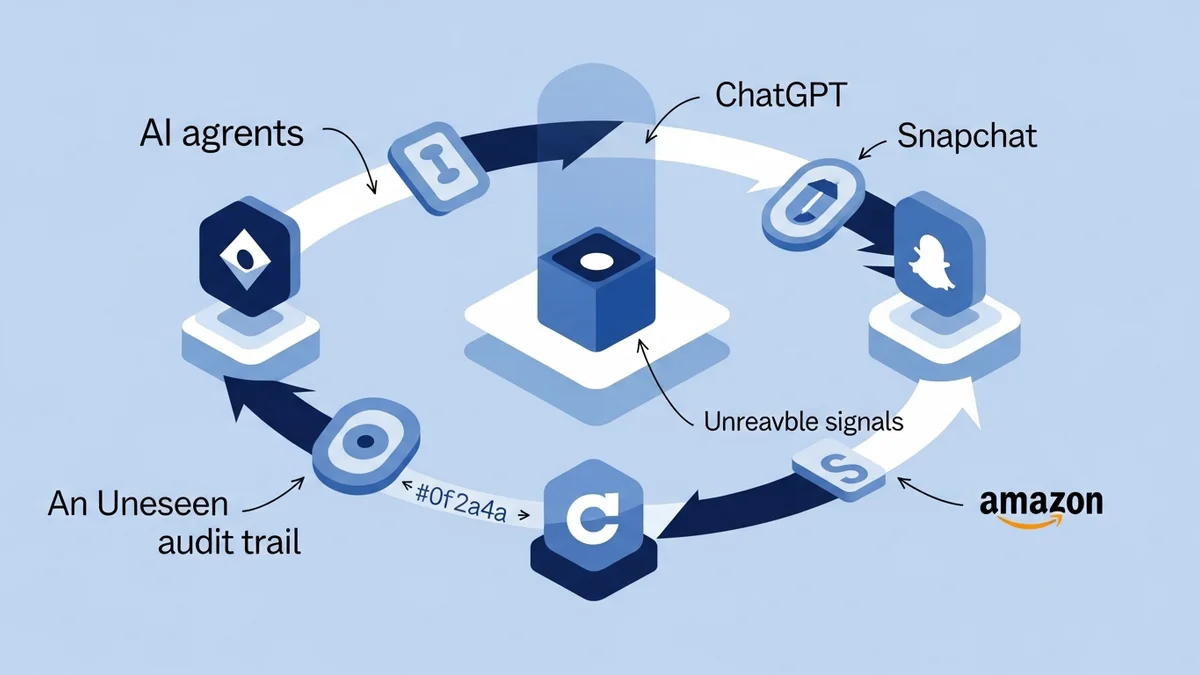

The Attribution Loop You Can't Audit

In April 2026, three different companies shipped production AI advertising infrastructure that no external party can audit. ChatGPT began streaming sponsored ad units inside conversational answers, with attribution carried by four Fernet-encrypted tokens whose key material lives only on OpenAI servers. Snapchat launched Sponsored AI Snaps: brands’ AI agents transacting directly with users inside the Chat tab, the same surface that carried 950 billion messages last quarter. Amazon’s COSMO recommendation system was documented in detail, including the part most marketing teams will read past: at least 65% of its raw LLM-generated reasoning had to be thrown away to clear an internal quality bar.

These are not three stories. They are one story told from three angles. The auditability gap inside AI ad infrastructure is no longer a forecast. It is the current production state, and CMOs are inheriting engineering-grade accountability for systems with no engineering-grade observability.

We have written before about machine-readability as a marketing KPI, about brand safety on the agent-readable surface, and about AEO as the new optimization target. This essay is the next floor. Once an agent transacts on behalf of your brand inside someone else’s product, the question is not whether you can be discovered. The question is whether you can prove what just happened.

The Attribution Loop Inside ChatGPT

The Buchodi reverse-engineering of ChatGPT’s ad delivery is the cleanest public description of what the loop actually contains. A Server-Sent Events stream injects a single_advertiser_ad_unit JSON object into the model’s response. A separate SDK called OAIQ runs on the merchant’s site and copies an ?oppref= URL parameter into a 720-hour (30-day) first-party cookie named __oppref. Every measure call POSTs to bzr.openai.com/v1/sdk/events. Four Fernet-encrypted tokens orchestrate the handshake: ads_spam_integrity_payload for server-side integrity, oppref for forward attribution, olref for outbound link tracking, and ad_data_token as a base64-and-Fernet-wrapped attribution envelope.
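To make the merchant-side half of that loop concrete, here is a minimal Python model of the capture step. It is a sketch of the behavior as described, not the SDK itself: the real OAIQ SDK runs as JavaScript in the browser, and the function name capture_oppref, the shop.example URL, and the token value are all hypothetical. Only the parameter name, the cookie name, and the 720-hour lifetime come from the write-up.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import parse_qs, urlparse

COOKIE_NAME = "__oppref"                 # cookie name reported in the write-up
COOKIE_LIFETIME = timedelta(hours=720)   # 720 hours = 30 days


def capture_oppref(landing_url: str, now: datetime):
    """Return a cookie record for the oppref parameter, or None if it is absent.

    Hypothetical helper: models what the SDK is described as doing.
    """
    query = parse_qs(urlparse(landing_url).query)
    values = query.get("oppref")
    if not values:
        return None
    return {
        "name": COOKIE_NAME,
        "value": values[0],               # opaque to the merchant: stored, never decoded
        "expires": now + COOKIE_LIFETIME,
    }


now = datetime(2026, 4, 1, tzinfo=timezone.utc)
record = capture_oppref("https://shop.example/item?id=7&oppref=gAAAAABopaque", now)
print(record["expires"].isoformat())  # 2026-05-01T00:00:00+00:00
```

The point of the sketch is what it does not contain: at no step does the merchant decode the value it is storing and sending back.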

A single test account got six different advertisers across six conversations on six different topics. Beijing travel routed to Grubhub. NBA queries routed to Gametime. A Fernet token carries its creation timestamp unencrypted in its header, so mint times are readable without the key; one click logged 95 seconds between mint and use.
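The mint timestamp is the one field an outsider can read, because the Fernet format places a version byte (0x80) and an 8-byte big-endian timestamp ahead of the IV, ciphertext, and HMAC. The sketch below reads that timestamp using only the standard library. The token is a dummy built locally with zeroed filler, not a real ChatGPT token, and make_dummy_token and the two datetimes are illustrative assumptions chosen to reproduce a 95-second gap.

```python
import base64
import struct
from datetime import datetime, timezone

FERNET_VERSION = 0x80
MIN_FERNET_BYTES = 1 + 8 + 16 + 16 + 32  # version, timestamp, IV, one block, HMAC


def fernet_mint_time(token: str) -> datetime:
    """Read the unencrypted creation timestamp from a Fernet token header."""
    raw = base64.urlsafe_b64decode(token + "=" * (-len(token) % 4))
    if len(raw) < MIN_FERNET_BYTES or raw[0] != FERNET_VERSION:
        raise ValueError("not a Fernet token")
    (seconds,) = struct.unpack(">Q", raw[1:9])
    return datetime.fromtimestamp(seconds, tz=timezone.utc)


def make_dummy_token(minted_at: datetime) -> str:
    """Build a structurally valid but meaningless token for demonstration."""
    header = bytes([FERNET_VERSION]) + struct.pack(">Q", int(minted_at.timestamp()))
    filler = bytes(16) + bytes(16) + bytes(32)  # zeroed IV, ciphertext block, HMAC
    return base64.urlsafe_b64encode(header + filler).decode("ascii")


minted = datetime(2026, 4, 10, 12, 0, 0, tzinfo=timezone.utc)
clicked = datetime(2026, 4, 10, 12, 1, 35, tzinfo=timezone.utc)
token = make_dummy_token(minted)
latency = (clicked - fernet_mint_time(token)).total_seconds()
print(latency)  # 95.0
```

Everything past byte nine stays opaque without the key. The timestamp tells you when a token was minted, and nothing about what it attests.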

Read the loop carefully and one fact becomes inescapable: every signal the brand’s marketing team would need to verify spend, audience, brand-safety context, or even ad position is encrypted with a key the brand will never hold. The brand can see what OpenAI tells it. There is no third-party measurement layer. There is no documented user consent surface. There is no regulator reading the same envelope. The product shipped before the audit standard exists, which means the audit standard, when it arrives, will be retrofitted onto a system whose integrity model was never designed to be externally inspected.

This is the inversion that matters. In every previous advertising channel — TV, radio, display, social — measurement scaffolding shipped alongside the ad inventory. Imperfect, gameable, but external. The current generation ships with measurement scaffolding owned end-to-end by the platform. CMOs are being asked to sign budgets against an attribution model whose source of truth they cannot read.

Snapchat: Brand Agents Inside the Conversation

Snapchat’s Sponsored AI Snaps move the surface again. The previous frontier was paid placement around conversation. The current frontier is paid participation inside it. Brands now field their own AI agents in the Chat tab. A user can ask a brand’s agent a question and get a product recommendation back in the same thread where they message friends.

The performance numbers, by Snap’s own report, are the part that should keep marketing operations leaders awake: 22% more conversions and 20% lower cost-per-action versus baseline Sponsored Snaps. 85% of Snap users engage Chat regularly. Q1 2026 carried 950 billion chats. Snap’s general AI chatbot, launched in 2023, has been messaged by more than 500 million users.

Read the format honestly. A brand’s agent is now a participant in a private conversation. Every output that agent produces is an editorial act, made at the speed and volume of a server fleet, with brand-impact equal to anything a human spokesperson could say in public. The agent’s prompt, its retrieval sources, its safety filters, and its update cadence are governance artifacts. None of them are reviewed by the same compliance pipeline that reviews a TV spot. Most marketing teams do not even have the workflow that would put them through it.

The audit gap here is not encrypted tokens. It is organizational. The agent is an editorial output channel, but the team that signs off on copy, the team that signs off on legal, and the team that signs off on prompts are usually three different teams that have never been in the same room.

Amazon COSMO: The Quality-Control Discipline Marketing Doesn’t Have

The third angle is the one that exposes how immature most teams’ relationship with their own AI output is. Amazon’s COSMO is a knowledge graph that uses LLMs to generate commonsense connections behind product recommendations: for instance, that a pregnant searcher looking for shoes probably wants slip-resistant ones. In production A/B testing on roughly 10% of US traffic, it delivered a 0.7% sales lift and an 8% bump in navigation engagement. By Amazon’s standard, that is a real win.

What gets less attention is the cost structure behind the win. Of the LLM’s raw output, only 35% of search-buy explanations and 9% of co-purchase explanations clear Amazon’s quality bar. The rest, the majority, gets discarded. Amazon does not trust the model. It runs the output through a rule-based filter, then a vector-similarity dedup pass, then human annotation of 30,000 examples against five yes-no questions, then a fine-tuned DeBERTa classifier with a 0.5 plausibility threshold. Only what survives reaches the index. The 30,000 annotations seeded a graph of 6.3 million nodes and 29 million edges, a leverage ratio of roughly 1,000 edges per human annotation.
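The shape of that pipeline is simple enough to sketch, even if the production version is not. The Python below is an illustration under stated assumptions, not Amazon’s implementation: the rule (drop fragments under three words), the 0.95 dedup cutoff, the two-dimensional embeddings, and the plausibility scores are all made up for the example. Only the stage order and the 0.5 threshold come from the description above.

```python
import math
from dataclasses import dataclass

PLAUSIBILITY_THRESHOLD = 0.5   # threshold named in the article
DEDUP_SIMILARITY = 0.95        # hypothetical cutoff for "near-duplicate"


@dataclass(frozen=True)
class Candidate:
    text: str
    embedding: tuple       # stand-in for a real sentence embedding
    plausibility: float    # stand-in for a trained classifier's score


def passes_rules(c: Candidate) -> bool:
    """Stage 1: cheap rule-based filter (here: drop fragments)."""
    return len(c.text.split()) >= 3


def cosine(a, b) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def dedup(cands):
    """Stage 2: keep a candidate only if nothing already kept is too similar."""
    kept = []
    for c in cands:
        if all(cosine(c.embedding, k.embedding) < DEDUP_SIMILARITY for k in kept):
            kept.append(c)
    return kept


def run_pipeline(cands):
    """Apply rules, dedup, then the plausibility threshold; report the discard rate."""
    stage1 = [c for c in cands if passes_rules(c)]
    stage2 = dedup(stage1)
    stage3 = [c for c in stage2 if c.plausibility >= PLAUSIBILITY_THRESHOLD]
    discard_rate = 1 - len(stage3) / len(cands) if cands else 0.0
    return stage3, discard_rate


raw = [
    Candidate("used for walking safely while pregnant", (1.0, 0.0), 0.91),
    Candidate("used to walk safely during pregnancy", (0.99, 0.05), 0.88),  # near-duplicate
    Candidate("shoes", (0.0, 1.0), 0.70),                                    # fragment
    Candidate("capable of flying to the moon", (0.5, 0.5), 0.12),            # implausible
]
survivors, discard_rate = run_pipeline(raw)
print([c.text for c in survivors], discard_rate)
# ['used for walking safely while pregnant'] 0.75
```

The number that matters is the second one. A pipeline that reports its own discard rate gives a team something to defend; a pipeline that ships everything reports nothing.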

There is one more detail worth pausing on. Amazon used Meta’s OPT model, not GPT-4. Why? Because customer behavior data had to stay on-premises. Data privacy was an architecture constraint, not a policy attached after the fact. The model choice was downstream of the governance choice.

Now invert the lens onto a marketing organization shipping AI-generated copy, AI-generated audience insights, AI-generated agent prompts. Where is the rule-based filter? Where is the dedup pass? Where are the 30,000 annotated examples that train a brand-specific classifier on what your tone of voice actually is? Where is the threshold at which a piece of agent output is allowed to face a customer? For most teams, the honest answer is: nowhere. The model output reaches the surface unfiltered, and the only audit is the one a customer service ticket triggers retroactively.

What CMOs Are Actually Being Asked To Sign

Sit the three observations next to each other and the operating reality is clear. ChatGPT’s attribution loop is unauditable by design. Snapchat’s brand-agent format places editorial output inside a private conversation with no parallel governance pipeline. Amazon shows the discipline required to use LLM output in production, and that discipline is largely absent in marketing.

The question for any marketing leader running spend through these channels in the next two quarters is not whether to participate. The question is what evidence you will hold when something goes wrong, who will read that evidence, and whether the format you signed up for ever produced any.

Do This Now

Before the next campaign briefing, write down the answers to four questions. Each takes ninety minutes with the right people in the room.

Token integrity. For every AI-mediated ad surface you spend on this quarter, list the signals you receive at attribution time. Mark each as platform-attested, third-party verified, or encrypted-but-trusted. If the column for “third-party verified” is empty, you are spending against a single source of truth.

Conversational governance. If you have shipped or are about to ship an AI agent inside a third-party platform’s conversation surface (Snapchat, ChatGPT, Meta, others), list the prompts, the retrieval sources, the safety filters, and the update cadence. Compare that list to the editorial pipeline a TV spot or display creative goes through. The delta is your governance debt.

Output quality bar. For your own AI-generated marketing output — copy, prompts, audience targeting — write down the discard rate. If you do not have one, the rate is zero, and you are shipping the model’s first draft. Pick a single output class and build a rule-based filter for it this month.

Privacy as architecture. Amazon picked OPT over GPT-4 because of where customer data could legally sit. Your equivalent question is: where is your customer behavior data when AI generates output against it? If the answer puts a regulator in the room, the architecture choice is upstream of every agent decision.
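The first of these four questions, token integrity, reduces to a small inventory that can be checked mechanically. The sketch below is one way to hold it, in Python. The surfaces and signals listed are hypothetical placeholders, not a claim about what any platform reports; the three attestation labels are the ones named above.

```python
from collections import defaultdict
from enum import Enum


class Attestation(Enum):
    PLATFORM_ATTESTED = "platform-attested"
    THIRD_PARTY_VERIFIED = "third-party verified"
    ENCRYPTED_BUT_TRUSTED = "encrypted-but-trusted"


# Hypothetical inventory rows: (ad surface, signal received, how it is attested)
INVENTORY = [
    ("chat-ads", "click count", Attestation.PLATFORM_ATTESTED),
    ("chat-ads", "oppref token", Attestation.ENCRYPTED_BUT_TRUSTED),
    ("display", "impressions", Attestation.PLATFORM_ATTESTED),
    ("display", "viewability", Attestation.THIRD_PARTY_VERIFIED),
]


def single_source_surfaces(inventory):
    """Return surfaces where no signal is verified by a third party."""
    by_surface = defaultdict(set)
    for surface, _signal, attestation in inventory:
        by_surface[surface].add(attestation)
    return sorted(
        surface
        for surface, kinds in by_surface.items()
        if Attestation.THIRD_PARTY_VERIFIED not in kinds
    )


print(single_source_surfaces(INVENTORY))  # ['chat-ads']
```

Any surface this function returns is one where the budget rests on the platform’s word alone.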

The marketing teams that survive the next two years of AI ad infrastructure are not the ones with the most agents in the most channels. They are the ones whose agents leave evidence the team can read, whose conversations leave a transcript the team can audit, and whose model output passes a discard threshold the team can defend. The audit gap is not closing on its own. The platforms have shipped. The standard has not.

This analysis synthesizes How ChatGPT Serves Ads — Here’s the Full Attribution Loop (Buchodi, April 2026), Snapchat Brings AI-Powered Conversational Advertising to Its App (TechCrunch, April 2026), and How Amazon Uses LLMs to Recommend Products (ByteByteGo, April 2026).

Victorino Group helps marketing and engineering leaders design audit and verification infrastructure for AI agents that transact on behalf of brands. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
