AI Value Is Leaving the Model Layer. Here Are the Three Places It's Going.

In the first week of May 2026, three different analysts published pieces that have nothing in common at first glance. Forrester’s Charles Betz wrote about Atlassian and ServiceNow doubling down on context graphs. CMSWire’s Pierre DeBois asked whether the return of Google Data Studio signals the end of analytics silos. Revanth Krishna, on UX Collective, argued that bolt-on AI is structurally broken because it forces users to learn the system’s vocabulary instead of the other way around.

Different magazines. Different audiences. Different vocabularies.

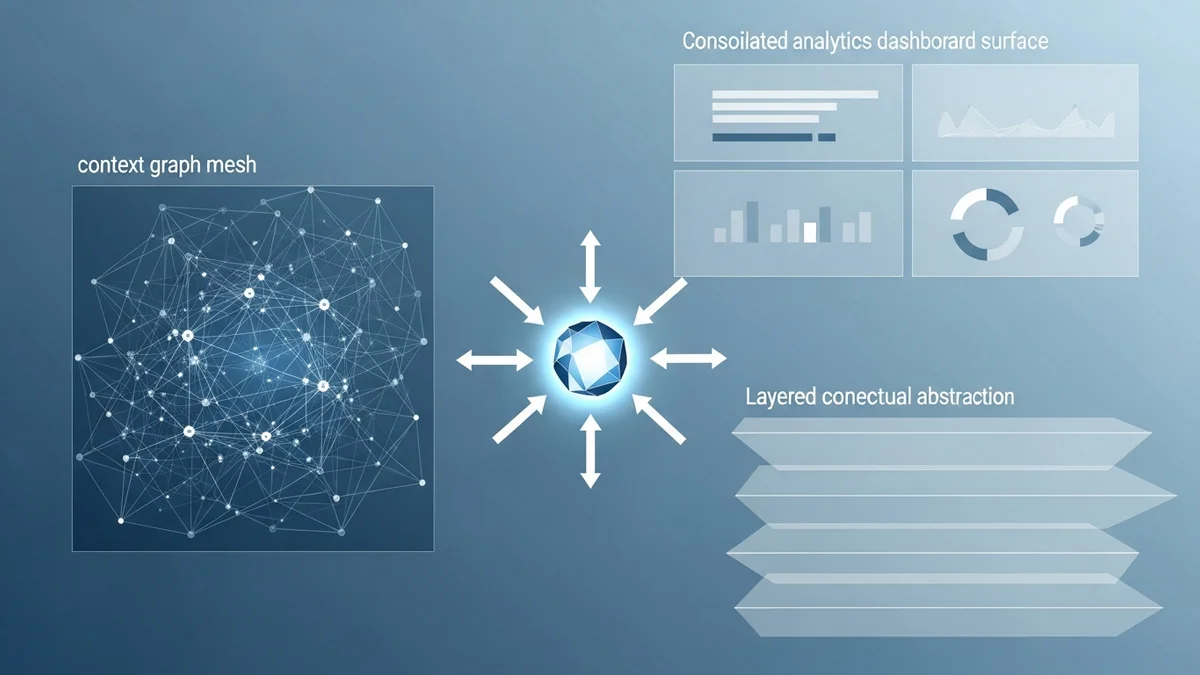

One thesis: AI value has stopped accumulating at the model layer. It is migrating, and it is migrating in three specific directions at once.

If you run a platform business, this is the week to read all three pieces and ask where your moat actually lives now. Model performance is becoming table stakes. The next two years of competitive advantage belong to whoever owns the operational graph beneath the model, the consolidated analytics surface above it, and the conceptual abstraction the user actually touches. Three vectors. Three different defensible positions. Few companies are positioned for more than one.

Vector One: The Operational Graph

Forrester’s piece on Atlassian and ServiceNow makes one claim plainly. The two dominant AI-enabled IT management platforms have converged on the same strategy, and that strategy is not “better model.” It is “richer graph.”

ServiceNow announced the Context Engine on April 9, 2026. It unifies four graphs that used to live in separate products: the Service Graph (the CMDB), the Knowledge Graph (documentation, tickets, runbooks), the Cyber asset graph that came in with the Armis acquisition, and the Access graph that came in with Veza. ServiceNow says the engine learns from “85 billion workflows and 7 trillion transactions annually.” Those numbers are not a marketing flourish. They are the moat.

Atlassian’s Teamwork Graph crossed 100 billion objects and connections during the same window, and Atlassian’s December 2025 acquisition of Secoda added semantic cataloging on top of the existing graph. Same direction. Different starting point.

What both companies are saying, without quite saying it, is that an LLM with a generic web index is a parlor trick in an enterprise context. The work that matters happens against a specific corpus of services, tickets, runbooks, identities, and dependencies. Whoever owns the graph of how those things actually relate is positioned to make the LLM useful. Whoever does not is positioned to be a feature.

We made a related observation about Cloud Next, where Google’s pitch for knowledge graphs as agent context tracked the same logic from the cloud platform side. The platforms that already have the operational graph, by accident or by design, are not racing to build models. They are racing to expose their graph to whatever model the customer prefers.

The defensibility test for vector one: if you replaced your current LLM provider with a competitor tomorrow, would the operational graph beneath it still be yours? If yes, the moat is real. If no, the moat is somebody else’s.

Vector Two: The Consolidated Analytics Surface

CMSWire’s piece on the Google Data Studio rebrand reads, on the surface, like a marketing analyst noting a product rename. Read again. Pierre DeBois is describing platform consolidation as a governance strategy.

In April 2026, Google rebranded Looker Studio back to Data Studio and shipped a Pro tier. The mechanics matter more than the name. Data Studio now runs native BigQuery conversational agents inside the dashboard. Colab notebooks live alongside reports. Marketing, sales, and service data share semantic definitions, which means an AI agent asking “how is the campaign performing” gets the same answer regardless of which surface it queries. Data Studio Pro adds AI workflow automation, multi-cloud orchestration, and real-time autonomous response to anomalies.

DeBois reads the consolidation as the end of analytics silos. The deeper read is that the analytics layer is becoming the governance surface for AI. When agents need to act on numbers, they need consistent numbers. When humans need to override an agent’s interpretation, they need a single dashboard where the override propagates. When auditors ask why an agent made a decision, they need a single explanation surface, not seven.

We argued a similar point about the semantic layer in Looker at Cloud Next: consistent semantics across surfaces is what lets agents and humans reason about the same numbers without hand-translation. CMSWire’s read takes the argument one step further. The consolidation is not just a quality-of-life feature for analysts. It is the precondition for letting agents act with measurable consequences.

The defensibility test for vector two: if your business runs three analytics tools today, with three definitions of “active customer” and three reconciliation pipelines, agents cannot operate on top of that mess in any auditable way. The companies who consolidated before agents arrived bought themselves a governance surface. The companies who did not are about to discover that the agent’s first failure mode is “the numbers disagreed.”
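To make the “three definitions of active customer” problem concrete, here is a minimal Python sketch. Everything in it is hypothetical: the customer records, the three tool names, the 30-day window, and the combined definition are invented for illustration and say nothing about how Data Studio or any other product implements a semantic layer. It shows the same data producing three different counts under three siloed definitions, and a single governed definition that a dashboard and an agent both call by name, so their answers cannot drift apart.

```python
from datetime import date, timedelta
from typing import Callable, NamedTuple, Optional


class Customer(NamedTuple):
    customer_id: str
    last_login: Optional[date]
    last_purchase: Optional[date]
    has_open_ticket: bool


# Hypothetical sample data, for illustration only.
CUSTOMERS = [
    Customer("c1", date(2026, 4, 28), date(2026, 1, 10), False),
    Customer("c2", date(2026, 3, 1), date(2026, 4, 20), False),
    Customer("c3", date(2026, 4, 30), None, True),
    Customer("c4", date(2026, 4, 15), date(2025, 11, 5), True),
    Customer("c5", date(2025, 10, 1), None, False),
]
AS_OF = date(2026, 5, 1)
WINDOW = timedelta(days=30)  # assumed window, chosen arbitrarily


def within_window(d: Optional[date]) -> bool:
    """True if the date falls inside the trailing window ending at AS_OF."""
    return d is not None and AS_OF - d <= WINDOW


# Three hypothetical tools, three definitions of "active customer".
SILOED_DEFINITIONS: dict[str, Callable[[Customer], bool]] = {
    "marketing_tool": lambda c: within_window(c.last_login),
    "sales_tool": lambda c: within_window(c.last_purchase),
    "service_tool": lambda c: c.has_open_ticket,
}


def count_active(definition: Callable[[Customer], bool]) -> int:
    """Count customers that satisfy the given definition of 'active'."""
    return sum(1 for c in CUSTOMERS if definition(c))


# One governed definition that every surface, human or agent, must call by name.
SEMANTIC_LAYER: dict[str, Callable[[Customer], bool]] = {
    "active_customer": lambda c: within_window(c.last_login)
    or within_window(c.last_purchase),
}


def metric(name: str) -> int:
    """Resolve a metric through the shared semantic layer."""
    return count_active(SEMANTIC_LAYER[name])


if __name__ == "__main__":
    siloed = {tool: count_active(fn) for tool, fn in SILOED_DEFINITIONS.items()}
    print(siloed)  # {'marketing_tool': 3, 'sales_tool': 1, 'service_tool': 2}
    dashboard_answer = metric("active_customer")
    agent_answer = metric("active_customer")
    print(dashboard_answer, agent_answer)  # 4 4
```

The design choice is the point: the agent never defines “active customer” on its own; it asks the shared layer by name. Which definition the business settles on matters less than the fact that there is exactly one.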

Vector Three: The Conceptual Abstraction

Revanth Krishna’s UX Collective piece is the one that names the third vector explicitly. His argument: bolt-on AI fails because it does not change the conceptual model the user has to learn. It just adds a chat box to the same screen.

His example is sharp. “AWS assistant today speaks AWS’s language,” he writes. The user has to know what an S3 bucket is, what a region is, what a versioning policy is, before they can ask a useful question. The AI-native version, in his framing, “asks two plain-language clarifying questions and returns a simple storage URL, no AWS vocabulary required.” Same underlying infrastructure. Different conceptual contract with the user.

This is where most enterprise AI work is failing right now. Teams are wiring chat interfaces into existing products and discovering that adoption stalls at curiosity. The reason is structural. The chat interface speaks the product’s old vocabulary. The user still has to translate their goal into that vocabulary. The friction the chat box was supposed to remove is still there, just behind one more click.

The conceptual layer is the unaddressed governance surface. It is also the place where most product teams are not yet thinking about defensibility. We argued in an earlier piece on evaluation-driven agent operations that the operational layer needs to be redesigned for agent traffic. The conceptual layer is the same observation made one floor higher. The interface needs to be redesigned for users who no longer have to know how the product is built.

The defensibility test for vector three: pull up your product’s primary AI surface. Ask a non-expert what they would say to it to get something useful done. If their first sentence requires them to know your product’s vocabulary, the conceptual moat is somebody else’s to build.

What This Means for Platform Strategy

Three vectors. Three different defensible positions. They are not interchangeable.

The operational graph is a long game. It compounds with every transaction the platform processes, and it is hard to copy because it is built into how the customer already operates. Atlassian and ServiceNow have it because they have run the workflows for years. A new entrant can build a better model in six months. They cannot build a 100-billion-object graph in six months.

The consolidated analytics surface is a forcing function. Companies who run AI agents on top of fragmented analytics are going to spend the next two years explaining why the agent’s answer disagrees with the dashboard. Companies who consolidated first will spend those two years shipping. The window to consolidate before agents arrive in production is closing.

The conceptual abstraction is the hardest of the three because it requires throwing away the vocabulary the product was built around. Most product teams cannot do this without a leadership mandate, because the existing vocabulary is encoded in the documentation, the support team’s training, the API surface, and the muscle memory of every customer. In hindsight, the teams that pull it off will look as if they made the obvious move. The teams that do not will be remembered as the AWS-vocabulary version of whatever category they used to lead.

The thesis is not that the model layer no longer matters. It is that the model layer no longer differentiates. Three different analysts, looking from three different vantage points, arrived at the same conclusion in the same week. The companies that figure out which of the three vectors they are positioned to own, and invest accordingly, will compound. The companies still betting on a better model will discover, sometime in 2027, that the model is good enough for everybody and the moat was elsewhere all along.

This analysis synthesizes “Atlassian and ServiceNow Lean into Context Graphs” (Forrester, May 2026), “Does the Return of Google Data Studio Signal the End of Analytics Silos?” (CMSWire, May 2026), and “Don’t Simply Bolt On AI. Rethink From the Ground Up.” (UX Collective, May 2026).

Victorino Group helps platform leaders identify which moats outlast model commoditization. Let’s talk.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.