The Vendor Bias in AI Productivity: Why Your IDE Metric Is a Marketing Asset, Not an Audit Trail

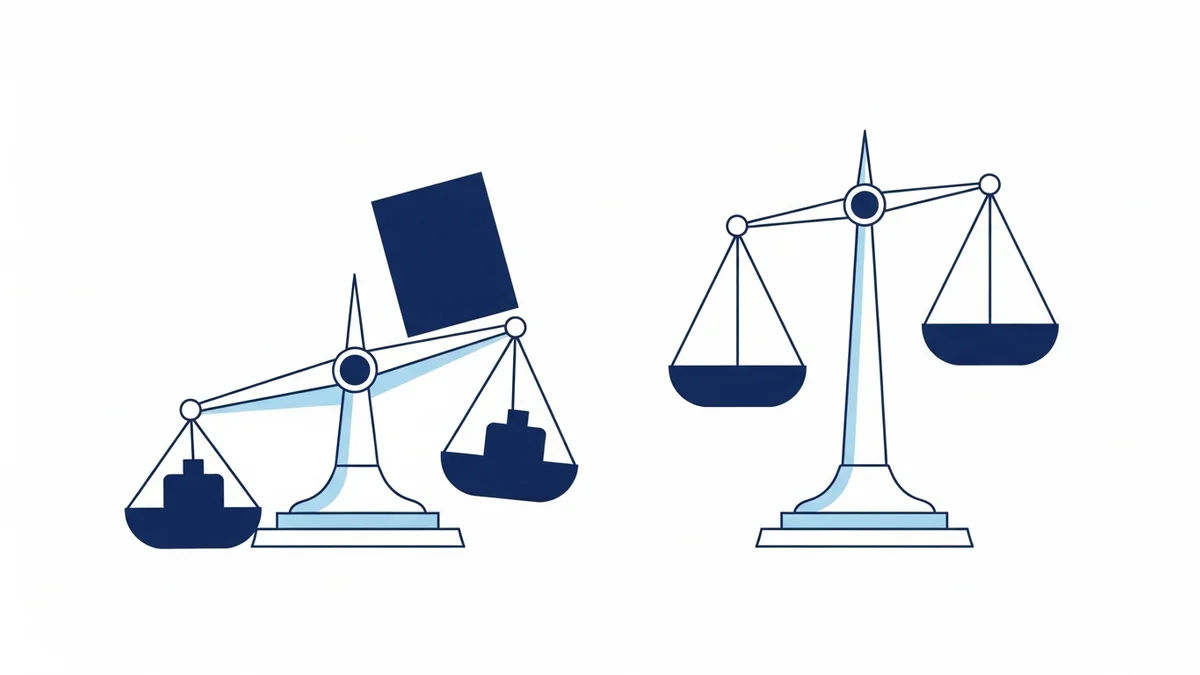

William O’Connell ran the same coding task through two AI IDEs back to back. Windsurf told him the work was 98% AI-generated. Cursor told him it was 52.6% AI-generated. Same task. Same developer. Same output. A 45-point spread depending on which vendor was holding the ruler.

That spread is the entire problem.

When boards ask “what percentage of our code is AI-generated,” they assume the answer comes from a measurement. It does not. It comes from an instrument calibrated by the company that profits when the answer is higher. Every IDE vendor selling AI assistance has a commercial incentive to inflate AI attribution — and the methodologies they use, documented in O’Connell’s 22-minute deep dive, do exactly that (Your AI Might Be Lying to Your Boss, April 2026).

This is not only a measurement problem. It is a fiduciary problem dressed up as a dashboard.

The Methodologies Are Built to Inflate

O’Connell’s analysis traces the mechanics. Windsurf’s attribution counts pasted text and auto-completed symbols as AI-generated. Cursor uses a more conservative line-based tracker that struggles with scattered edits. Neither vendor publishes its methodology in enough detail for an outsider to reproduce the number. Neither submits to third-party audit. Both ship the number to your CTO’s dashboard.

The asymmetry compounds. If you are a developer who refactors three lines across nine files after a long pasted snippet, Windsurf will count the snippet as AI and shrug at the refactor. Cursor will count the refactor as human and shrug at the snippet. Whichever tool your team standardized on becomes the source of truth — and the source of truth was selected for reasons that had nothing to do with measurement validity.
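To make the divergence concrete, here is a toy sketch in Python. It is not either vendor's algorithm, since neither is published in reproducible detail. The event types, the `EditEvent` fields, and every figure are invented for illustration. The sketch only shows that one edit log can yield very different "AI share" numbers depending on which events count as AI and which unit is counted.

```python
from dataclasses import dataclass


@dataclass
class EditEvent:
    """One hypothetical editor event; all fields are illustrative assumptions."""
    source: str  # "ai_suggestion", "paste", "autocomplete", or "typed"
    chars: int   # characters contributed by the event
    lines: int   # whole lines contributed (0 for in-line symbol completions)


def ai_share(events, ai_sources, unit):
    """Fraction of `unit` ("chars" or "lines") attributed to AI-labeled sources."""
    total = sum(getattr(e, unit) for e in events)
    ai = sum(getattr(e, unit) for e in events if e.source in ai_sources)
    return ai / total if total else 0.0


# Invented edit log for a single task.
log = [
    EditEvent("ai_suggestion", chars=400, lines=10),
    EditEvent("paste", chars=1200, lines=30),
    EditEvent("autocomplete", chars=150, lines=0),
    EditEvent("typed", chars=250, lines=10),  # scattered manual refactor
]

# Broad rule: pastes and completions count as AI, measured in characters.
broad = ai_share(log, {"ai_suggestion", "paste", "autocomplete"}, "chars")

# Narrow rule: only accepted suggestions count, measured in whole lines.
narrow = ai_share(log, {"ai_suggestion"}, "lines")

print(f"broad rule:  {broad:.1%}")   # 87.5%
print(f"narrow rule: {narrow:.1%}")  # 20.0%
```

The two results differ by more than 67 points on identical input. These numbers do not reproduce the 98% and 52.6% figures; they only illustrate how much the counting rule alone can move the answer.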

This is the structural problem we did not name in The AI Verification Debt. That essay argued the verification gap is organizational. This one goes a layer deeper: even before verification, the underlying attribution data is contaminated at the vendor layer. You cannot govern what you cannot measure honestly.

The Numbers Executives Are Quoting Are Not Measurements

Industry executives have spent the last twelve months publishing AI code-generation rates as public KPIs. 30% at this company. 50% at that one. 75% in the most aggressive announcements. These numbers travel from vendor dashboards into earnings calls, board decks, and headcount decisions.

None of them survive a single audit question. Which vendor’s methodology produced the number? What is excluded? Were pasted blocks counted? Auto-completed symbols? Generated test scaffolding versus generated business logic? When the executive cannot answer, the number is not a metric. It is a marketing asset that escaped the marketing department.

The legal consequence is concrete. Under current US Copyright Office guidance, material generated by AI without sufficient human authorship is not eligible for copyright protection. An organization claiming 75% AI generation in public filings is inviting the argument that a large share of its codebase may be unprotected work, depending on how much human authorship went into each contribution. Most boards announcing these numbers have not consulted with their general counsel about the implication. They are optimizing for an analyst-call talking point and accepting an IP exposure they have not priced.

Building on The AI Adoption Spectrum, the deeper issue is that vendor metrics flatten a distribution into a single performative number. There is no spectrum visible in “we are 50% AI.” There is no signal about which 50%, by whom, with what review pattern. The metric was never designed to support governance. It was designed to support sales.

The Unmeasured Cost That Eats the Headline

Every productivity number from a vendor IDE measures generation. None of them measure what comes after generation: the review time, the debugging cycles, the regression tests, the rollback work, the production failures traced back to almost-right code that nobody read carefully enough.

As TLDR Founders recently framed it, the mess is the work (The Mess Is The Work, April 2026): the unglamorous coordination, judgment, and verification labor that turns generated output into shipped software. The Simulacrum of Knowledge Work essay makes the same point about white-collar AI broadly: a generated artifact that looks like work is not the work (Simulacrum of Knowledge Work, April 2026). The work is the consequence the artifact has on the system.

This is why the 98% figure is not just inaccurate. It is structurally misleading. Even if Windsurf’s number were honest about generation, it would be silent about consequence. The fraction of code that came from AI tells you nothing about the fraction of engineering effort, review burden, or production risk that came from AI. Those numbers are larger, harder to game, and exactly the ones nobody is reporting.

What Discord Did When They Stopped Trusting the Dashboard

The counter-evidence comes from inside an engineering team that decided its own measurement system was lying to it. Discord’s experimentation team found that running ~50 default metrics on every test produced so much noise that real signal was getting buried. They cut the default metric set to 15. The result: a 45% improvement in their ability to detect real effects (Measure Less to Learn More, April 2026).

The lesson is not “fewer metrics.” The lesson is that metric volume can actively destroy your ability to see what matters. When you instrument everything, the noise floor rises, the multiple-comparisons problem compounds, and the dashboard tells you something is happening when nothing is, or that nothing is happening when something is. Discord’s team treated their own measurement infrastructure as a thing that could be wrong, audited it, and rebuilt it.
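The multiple-comparisons effect can be illustrated with a short calculation. This is a textbook sketch, not Discord's analysis, and it does not explain their 45% figure. It assumes the metrics are statistically independent, that no real effect exists, and that each metric is tested at a significance level of 0.05; all three are assumptions made here for illustration.

```python
def family_wise_error_rate(num_metrics: int, alpha: float = 0.05) -> float:
    """Chance that at least one of `num_metrics` independent tests fires falsely."""
    return 1.0 - (1.0 - alpha) ** num_metrics


def expected_false_positives(num_metrics: int, alpha: float = 0.05) -> float:
    """Average number of false alarms per experiment when no real effect exists."""
    return num_metrics * alpha


for m in (50, 15):
    fwer = family_wise_error_rate(m)
    fp = expected_false_positives(m)
    print(f"{m} metrics: P(at least one false alarm) = {fwer:.3f}, expected = {fp:.2f}")
# 50 metrics: P(at least one false alarm) = 0.923, expected = 2.50
# 15 metrics: P(at least one false alarm) = 0.537, expected = 0.75
```

Under these assumptions, a 50-metric default set produces at least one spurious "significant" result in roughly nine experiments out of ten. Real metrics are usually correlated, so the exact rates will differ, but the direction holds: every extra default metric raises the odds that the dashboard reports an effect that is not there.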

That is the move boards have not yet made on AI productivity. They are still treating the vendor dashboard as ground truth. Discord’s experience points the other way: assume the instrument is biased, reduce the surface area, and design measurement that survives an audit you do not control.

What Survives an Audit

A measurement system that survives audit is one a board could defend in a deposition. It cannot rely on a single vendor’s proprietary methodology. It cannot count outputs nobody reviewed. It cannot omit the cost of getting that output to production. And it cannot be calibrated by a party that profits from the result.

For organizations that intend to publish AI productivity numbers — internally or externally — the implication is uncomfortable. The instruments shipped by your IDE vendor are not adequate. They were built to drive their own adoption, not your governance. Building a measurement system that survives audit means measuring at the commit and PR layer, not the keystroke layer; counting reviewer time, not generation events; tracking production incidents traceable to AI-assisted contributions; and treating any vendor-supplied number as a directional signal at best, never a citable metric.
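A minimal sketch of what measurement at the PR layer might look like follows. The `PullRequest` record, its field names, and the way AI assistance is flagged (for example a self-declared PR label) are all hypothetical, and the sample data is invented and is not evidence about real AI-assisted work. The point is only that the inputs come from systems the organization controls (version control, review tooling, incident tracking) rather than from an IDE vendor's counter.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    """Hypothetical PR-level record assembled from internal systems."""
    pr_id: str
    ai_assisted: bool      # assumption: declared via a PR label at submission
    review_minutes: float  # reviewer time logged against the PR
    incidents: int         # production incidents later traced to this PR


def summarize(prs):
    """Report PR count, mean review time, and incident rate per group."""
    summary = {}
    for label, flag in (("ai_assisted", True), ("not_ai_assisted", False)):
        group = [p for p in prs if p.ai_assisted == flag]
        if not group:
            continue  # skip empty groups rather than divide by zero
        summary[label] = {
            "prs": len(group),
            "mean_review_minutes": sum(p.review_minutes for p in group) / len(group),
            "incidents_per_pr": sum(p.incidents for p in group) / len(group),
        }
    return summary


# Invented sample data, for illustration only.
sample = [
    PullRequest("PR-1", True, 40.0, 1),
    PullRequest("PR-2", True, 60.0, 0),
    PullRequest("PR-3", False, 30.0, 0),
    PullRequest("PR-4", False, 20.0, 0),
]

report = summarize(sample)
for label, stats in report.items():
    print(label, stats)
```

Every figure in such a report can be traced back to a commit, a review log, or an incident ticket, which is what lets it answer the audit question of how the number was produced.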

This is unglamorous work. It looks nothing like the dashboard. It is also the only version of “AI productivity” that does not collapse the moment a regulator, a litigator, or a serious analyst asks how the number was produced.

The 98% versus 52.6% gap is the canary. It tells you the instrument is broken at the vendor layer. The question is whether your organization will keep citing the broken number, or build the one a board can actually defend.

This analysis synthesizes William O’Connell’s Your AI Might Be Lying to Your Boss (April 2026), Discord Engineering’s Measure Less to Learn More (April 2026), Simulacrum of Knowledge Work (April 2026), and TLDR Founders’ The Mess Is The Work (April 2026).

Victorino Group helps boards and CTOs build AI productivity measurement that survives audit.

All articles on The Thinking Wire are written with the assistance of Anthropic's Opus LLM. Each piece goes through multi-agent research to verify facts and surface contradictions, followed by human review and approval before publication. If you find any inaccurate information or wish to contact our editorial team, please reach out at editorial@victorinollc.com.
